ShodhKosh: Journal of Visual and Performing Arts, ISSN (Online): 2582-7472


AI-Enhanced Digital Illustration Methods Improving Precision and Efficiency for Visual Designers

Raenu Kolandaisamy 1, Dr. Tapasmini Sahoo 2, Dr. Sukhada Shashank Aloni 3, Vijay Itnal 4, Gayathri B. 5, Dr. R. Salini 6, Uma Maheswari G. 7

1 Lecturer, Institute of Computer Science and Digital Innovation (ICSDI), UCSI University, Kuala Lumpur, Malaysia

2 Associate Professor, Department of Electronics and Communication Engineering, Institute of Technical Education and Research, Siksha 'O' Anusandhan (Deemed to be University), Bhubaneswar, Odisha, India

3 Assistant Professor, Department of Computer Engineering, A. P. Shah Institute of Technology, Thane (W), Mumbai University, India

4 Assistant Professor, Department of Mechanical Engineering, Vishwakarma Institute of Technology, Pune, Maharashtra, 411037, India

5 Assistant Professor, Computer Science, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

6 Associate Professor, Department of Computer Science and Engineering, Panimalar Engineering College, Tamil Nadu, India

7 Assistant Professor, Department of Mathematics, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

ABSTRACT

Artificial intelligence (AI) has significantly transformed digital illustration by enhancing the accuracy, efficiency, and creative freedom available to visual designers. This paper discusses advanced AI-based approaches, including deep neural networks (DNNs), Generative Adversarial Networks (GANs), and diffusion models, for improving image generation and refinement. The proposed framework combines data-driven learning with artistic mechanisms to enable automatic style synthesis, high-resolution image generation, and intelligent image enhancement. A systematic process is developed that covers dataset preparation across a range of artistic styles, model selection and training strategies, and the implementation of an AI-based illustration pipeline. Empirical analysis shows that rendering accuracy, style consistency, and production speed improve compared with conventional illustration workflows. The system also reduces human error and the amount of manual intervention required while preserving the designer's creative control. However, challenges such as high computational requirements, dependence on training data, and potential bias in generated outputs are critically discussed. The results indicate that AI-enhanced illustration systems can transform current design processes by enabling scalable, efficient, and high-quality visual production.


Received 14 January 2026

Accepted 18 March 2026

Published 11 April 2026

Corresponding Author: Anjay Kumar Mishra, anjaymishra2000@gmail.com

DOI 10.29121/shodhkosh.v7.i4s.2026.7505

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License. Under the CC-BY license, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.


Keywords: AI-Assisted Illustration, Deep Neural Networks, Generative Adversarial Networks, Diffusion Models, Digital Art Automation, Visual Design Efficiency


1. INTRODUCTION

Digital illustration has developed significantly in recent decades, shifting from traditional manual methods to computer-aided production. With advanced software tools and high-performance computers, visual designers now have access to powerful platforms for creating high-resolution artwork with complex techniques and finer control. Despite these developments, conventional digital illustration still involves substantial manual labor, artistic skill, and lengthy, tedious refinement to achieve accuracy and consistency. As demand for high-quality visual content grows in sectors such as advertising, gaming, animation, and user interface design, interest in intelligent systems that boost productivity and creative output has increased. Artificial intelligence (AI) has become a transformative force in digital illustration, offering new ways to automate and augment artistic processes. AI models, especially deep learning models, have been shown to excel at image creation, style transfer, and image enhancement Li et al. (2024). Techniques such as convolutional neural networks (CNNs), Generative Adversarial Networks (GANs), and diffusion-based models can be trained on large datasets to learn complex artistic patterns, textures, and styles.

These models can create lifelike images, refine sketches, increase resolution, and even emulate the styles of well-known artists, expanding designers' creative options. Integrating AI into digital illustration marks a paradigm shift from a fully manual design process to hybrid human-machine interaction Zhou and Park (2023). AI can reduce repetitive work in coloring, shading, and detail enhancement, allowing designers to concentrate on conceptualization and creative decision-making. AI systems can also offer real-time recommendations, error correction, and intelligent suggestions that improve design precision and reduce the likelihood of human mistakes. This not only speeds up the design process but also makes it more consistent and scalable for large projects. However, AI-enhanced illustration techniques also raise certain issues and concerns Tang et al. (2024). Data dependency, computational complexity, and potential bias in training data must be addressed so that outcomes are objective and accurate. In addition, the balance between automation and creative originality remains a pressing question, since excessive reliance on AI may affect the originality and authenticity of creative work. Ethical concerns, including authorship and intellectual property rights, further complicate the use of AI in art Tang et al. (2024). This research investigates AI-assisted digital illustration methods that improve the accuracy and efficiency of visual designers' work.

2. Background and Related Work

Digital illustration has evolved alongside progress in computer graphics, image processing, and machine learning. Early digital art systems were based mainly on raster and vector applications in which designers manually controlled every detail of the artistic process. Although these tools were flexible, they demanded considerable expertise and time. The first step toward automating digital illustration was the introduction of algorithmic image processing, including edge detection, filtering, and color correction Baudoux (2024). The rapid evolution of machine learning, especially deep learning, has since brought a paradigm shift toward intelligent content generation and enhancement. CNNs, widely used for feature extraction, image classification, and enhancement, form the basis of many AI-assisted illustration systems. CNN-based architectures have also been applied to super-resolution, denoising, and semantic segmentation, making image manipulation more accurate and precise Tang et al. (2024). Generative Adversarial Networks (GANs) brought a further shift to digital illustration by enabling highly realistic and stylized image generation. GAN-based architectures have produced positive results in artistic style transfer, image-to-image translation, and creative content generation. These approaches give designers access to different art forms and automate complex transformations while maintaining visual integrity Donnici et al. (2020).
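As a concrete instance of the classical, non-learned processing mentioned above, the sketch below applies Sobel edge detection to a small grayscale image stored as nested Python lists. The function name `sobel_magnitude` and the list-of-lists image format are assumptions for illustration; real tools would rely on an optimized imaging library.

```python
import math

# Sobel kernels for horizontal (x) and vertical (y) intensity gradients.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]


def sobel_magnitude(image):
    """Return the gradient magnitude for each interior pixel of a grayscale image.

    `image` is a list of rows of numbers. Border pixels are skipped, so the
    output has shape (height - 2) x (width - 2).
    """
    h, w = len(image), len(image[0])
    out = []
    for y in range(1, h - 1):
        row = []
        for x in range(1, w - 1):
            gx = gy = 0
            for dy in range(3):
                for dx in range(3):
                    p = image[y + dy - 1][x + dx - 1]
                    gx += SOBEL_X[dy][dx] * p
                    gy += SOBEL_Y[dy][dx] * p
            row.append(math.hypot(gx, gy))
        out.append(row)
    return out


# A vertical edge: dark left half, bright right half.
img = [[0, 0, 0, 10, 10, 10] for _ in range(4)]
edges = sobel_magnitude(img)
# edges == [[0.0, 40.0, 40.0, 0.0], [0.0, 40.0, 40.0, 0.0]]
```

The response is zero in flat regions and peaks where the window straddles the dark-to-bright boundary, which is the kind of hand-designed filter that learned CNN features later generalized.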

More recently, diffusion models have emerged as a strong alternative for high-quality image generation. Unlike GANs, diffusion models generate images by gradually removing noise until a structured image emerges, which makes them more stable and their outputs higher in fidelity. Models such as DALL·E and Stable Diffusion have demonstrated strong performance in producing detailed, contextually aware illustrations from text prompts, and they have come to play a major role in creative workflows. Multiple studies have explored applying AI to the digital art pipeline and found gains in efficiency, scalability, and creative support. However, computational cost, data bias, and limited interpretability remain among the most critical challenges Sreenivasan and Suresh (2024). The existing literature supports hybrid systems in which human creativity is combined with AI automation to achieve the best results. Table 1 summarizes AI techniques used to improve the accuracy and efficiency of illustration. This paper builds on these advances and offers a comprehensive view of AI-based digital illustration, covering both technical performance and application in practical design work.

Table 1 Summary of Related Work on AI-Enhanced Digital Illustration Methods for Precision and Efficiency

| Technique Used | Model/Algorithm | Dataset Used | Application Area | Key Contribution | Limitation |
|---|---|---|---|---|---|
| Image Enhancement Ajani et al. (2025) | CNN | ImageNet | Digital Art Refinement | Improved texture clarity | Limited style diversity |
| Style Transfer Lin et al. (2024) | CycleGAN | WikiArt | Artistic Style Conversion | Unpaired style translation | Mode instability |
| Image Generation | GAN | CelebA | Portrait Illustration | Realistic face generation | High training cost |
| Super-Resolution | SRCNN | DIV2K | High-Res Illustration | Resolution enhancement | Loss of fine details |
| Image-to-Image Translation | Pix2Pix | Cityscapes | Sketch to Image | Paired translation | Requires labeled data |
| Style Synthesis | StyleGAN2 | FFHQ | Creative Design | High-quality style control | Computationally expensive |
| Diffusion-Based Generation | DDPM | LSUN | Image Synthesis | Stable high-quality outputs | Slow inference |
| Text-to-Image Cheng et al. (2024) | DALL·E | Multimodal Dataset | Concept Art | Text-driven illustration | Data bias issues |
| Diffusion Model | GLIDE | WebImageText | Creative Generation | Improved realism | High GPU usage |
| Hybrid Model Xiao et al. (2025) | CNN + GAN | Custom Art Dataset | Illustration Enhancement | Combined refinement + synthesis | Complex training |
| AI Pipeline Katya and Rahman (2025) | GAN + Diffusion | Mixed Dataset | Design Automation | End-to-end pipeline | Resource intensive |
| Advanced Diffusion Chang (2021) | Stable Diffusion | LAION-5B | High-Resolution Art | Scalable generation system | Ethical concerns |

3. AI Techniques for Digital Illustration Enhancement

3.1. Deep Neural Networks for Image Generation and Refinement

Convolutional Neural Networks (CNNs) and other Deep Neural Networks (DNNs) are a fundamental part of digital illustration enhancement because they enable automated image creation and refinement. These models learn hierarchical feature representations from large-scale visual data, ranging from low-level features such as edges and textures to high-level semantic representations. In digital illustration workflows, DNNs are widely applied to image super-resolution, denoising, inpainting, and detail enhancement Barath et al. (2023). For example, encoder-decoder and U-Net models can accurately reconstruct images while preserving spatial information and improving visual quality. DNNs can also perform sketch-to-image translation, in which a rough artist sketch is converted into a detailed illustration through learned mappings. In addition, attention mechanisms and residual learning improve model performance by focusing on salient visual regions and reducing information loss during processing Huang et al. (2023). These capabilities greatly reduce the need for manual refinement and improve consistency across large design projects.
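Residual learning means that a network predicts only a correction that is added back to its input (output = input + F(input)). The sketch below shows this structure with a single 3x3 convolution standing in for F. The fixed Laplacian-style kernel and the `strength` parameter are illustrative assumptions; in a trained model the filter weights would be learned from data rather than hand-set.

```python
# Laplacian-style kernel used as a stand-in for a learned residual filter.
KERNEL = [[0, -1, 0],
          [-1, 4, -1],
          [0, -1, 0]]


def conv3x3_same(image, kernel):
    """3x3 filtering with edge-replicated borders, so output size equals input size."""
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for dy in range(-1, 2):
                for dx in range(-1, 2):
                    yy = min(max(y + dy, 0), h - 1)  # clamp to replicate the border
                    xx = min(max(x + dx, 0), w - 1)
                    acc += kernel[dy + 1][dx + 1] * image[yy][xx]
            out[y][x] = acc
    return out


def residual_refine(image, strength=0.5):
    """Residual block: add a scaled correction F(image) back onto the input."""
    correction = conv3x3_same(image, KERNEL)
    return [[p + strength * c for p, c in zip(row, crow)]
            for row, crow in zip(image, correction)]


refined = residual_refine([[0, 0, 0], [0, 10, 0], [0, 0, 0]])
# refined == [[0.0, -5.0, 0.0], [-5.0, 30.0, -5.0], [0.0, -5.0, 0.0]]
```

A flat region passes through unchanged because its correction is zero, while a bright detail is boosted and its neighbors darkened (the familiar sharpening effect). Because the block only has to learn the difference from its input, deep stacks of such blocks lose less information.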

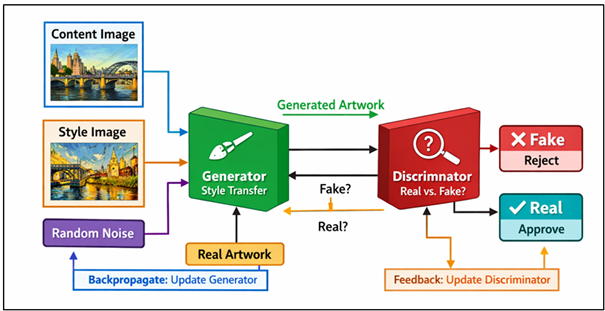

3.2. Generative Adversarial Networks (GANs) for Artistic Style Synthesis

Generative Adversarial Networks (GANs) have become a highly effective approach to synthesizing artistic styles in digital illustration. A GAN consists of two competing neural networks, a generator and a discriminator, which are trained adversarially. The generator produces synthetic images, and the discriminator judges their authenticity by distinguishing generated samples from real ones. This competitive learning process allows GANs to generate images that are highly realistic and stylistically rich Liu et al. (2023). The GAN architecture shown in Figure 1 enables the synthesis of realistic artistic styles. In digital illustration, GANs are widely applied to style transfer, image-to-image translation, and creative content generation.

Figure 1 Generative Adversarial Network (GAN) Architecture for Artistic Style Synthesis in Digital Illustration
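The adversarial objective described above is commonly written as a pair of binary cross-entropy losses computed from the discriminator's outputs, which are probabilities that an image is real. The sketch below shows that form. The non-saturating generator loss is a common choice in the GAN literature and may not match the exact formulation used in this paper. The function names are hypothetical.

```python
import math

EPS = 1e-12  # guards against log(0)


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy that pushes D(real) toward 1 and D(fake) toward 0.

    d_real, d_fake: lists of discriminator probabilities in (0, 1).
    """
    real_term = sum(-math.log(max(p, EPS)) for p in d_real) / len(d_real)
    fake_term = sum(-math.log(max(1.0 - p, EPS)) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss that pushes D(fake) toward 1."""
    return sum(-math.log(max(p, EPS)) for p in d_fake) / len(d_fake)
```

Suppose the discriminator can no longer tell real from generated images and outputs 0.5 everywhere. Its loss is then 2 ln 2 (about 1.386), which is the equilibrium value of this objective. Alternating gradient steps on these two losses implement the generator/discriminator balance mentioned in Section 4.2.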

CycleGAN and similar models can translate images across artistic domains without paired datasets, allowing designers to turn photographs into paintings or apply a particular artistic style consistently. StyleGAN, by contrast, offers finer control over image attributes and enables the generation of high-quality, customizable illustrations. GAN-based tools also greatly increase creative flexibility, since they allow rapid experimentation with many styles and visual effects, and they automate complex artistic transformations that would otherwise require substantial manual work. Nonetheless, GANs are often difficult to train because of mode collapse, sensitivity to hyperparameters, and training instability. It is also hard to ensure consistency and eliminate artifacts in generated images. Despite these shortcomings, GANs remain a key technology for advancing AI-driven artistic image and style generation.
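CycleGAN's ability to work without paired data rests on a cycle-consistency loss. An image translated to the other domain and back should reproduce the original. The sketch below computes the forward-cycle L1 error for two mapping functions, with images flattened to lists of pixel values. The full CycleGAN objective also adds the reverse cycle and adversarial terms. The functions `toy_g`, `toy_f`, and `toy_f_shifted` are hypothetical toy stand-ins for CycleGAN's two generators.

```python
from typing import Callable, List

Image = List[float]


def cycle_consistency_loss(x: Image,
                           g: Callable[[Image], Image],
                           f: Callable[[Image], Image]) -> float:
    """Mean absolute error between x and f(g(x)), the forward-cycle term."""
    reconstructed = f(g(x))
    return sum(abs(a - b) for a, b in zip(x, reconstructed)) / len(x)


# Hypothetical toy "generators": g doubles intensities, f halves them.
def toy_g(img: Image) -> Image:
    return [p * 2 for p in img]


def toy_f(img: Image) -> Image:
    return [p / 2 for p in img]


def toy_f_shifted(img: Image) -> Image:
    return [p / 2 + 0.1 for p in img]  # imperfect inverse, so the cycle leaks


x = [0.2, 0.4, 0.6]
exact = cycle_consistency_loss(x, toy_g, toy_f)          # 0.0
leaky = cycle_consistency_loss(x, toy_g, toy_f_shifted)  # about 0.1
```

Minimizing this term discourages the generators from inventing content that cannot be mapped back. This is what lets the model learn a style mapping without paired examples.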

3.3. Diffusion Models for High-Resolution Image Creation

Diffusion models are a more recent development in generative AI and currently achieve state-of-the-art performance in high-resolution image generation for digital illustration. During training, these models gradually add noise to images and learn to reverse this process. At generation time, they start from random noise and iteratively denoise it into a new image. This iterative denoising mechanism allows diffusion models to produce highly detailed and aesthetically consistent results with greater stability than GANs. Diffusion models can also be conditioned for controllable generation, letting users steer the style, structure, or semantic content of the output. Moreover, they produce a greater variety of samples, reducing the repetitive outputs typical of some other generative models.
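The noising-and-denoising idea can be made concrete with the forward process of a DDPM-style diffusion model. In this process, a clean value x0 is mixed with Gaussian noise as x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * eps. The sketch below assumes a linear beta schedule from 1e-4 to 0.02 over 1000 steps, the values used in the original DDPM work rather than settings reported by this study. It shows that x0 can be recovered when the noise eps is known exactly. A trained denoiser only approximates eps, which is why generation proceeds in many small steps.

```python
import math
import random


def linear_alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products alpha_bar_t = prod_{s<=t} (1 - beta_s), linear betas."""
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0, t, alpha_bars, rng):
    """Forward process q(x_t | x_0): return the noisy sample and the noise used."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    a = alpha_bars[t]
    xt = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    return xt, eps


def predict_x0(xt, eps_hat, t, alpha_bars):
    """Invert the forward step given a noise estimate eps_hat (a denoiser's output)."""
    a = alpha_bars[t]
    return [(x - math.sqrt(1.0 - a) * e) / math.sqrt(a) for x, e in zip(xt, eps_hat)]


alpha_bars = linear_alpha_bars()
rng = random.Random(0)
x0 = [0.5, -0.25, 1.0]  # a toy "image" of three pixel values
xt, eps = add_noise(x0, 500, alpha_bars, rng)
recovered = predict_x0(xt, eps, 500, alpha_bars)  # matches x0 up to rounding
```

Because alpha_bar shrinks toward zero as t grows, late steps are almost pure noise. The model therefore has to learn structure gradually, which contributes to the training stability noted above.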

4. Methodology

4.1. Dataset Collection and Preparation

The effectiveness of AI-enhanced digital illustration systems depends to a large extent on the quality and diversity of their training data. In this research, a detailed dataset is assembled from digital artworks, illustrations, and design samples drawn from several publicly accessible repositories and curated data sources. The collection spans a practical range of artistic styles, including realism, abstract art, cartoon, vector graphics, and concept art, to support broad generalization. High-resolution images are prioritized to support detailed feature learning and high-quality output.
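The preparation steps above involve pooling several sources, keeping a spread of styles, and favoring high-resolution images. They can be sketched as a resolution filter followed by a per-style train/validation split, so every style appears in both subsets. The `ArtworkRecord` fields, the 1024-pixel minimum side, and the 80/20 ratio are hypothetical choices for illustration; the paper does not specify them.

```python
import random
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ArtworkRecord:
    """Hypothetical metadata for one collected image."""
    path: str
    style: str    # e.g. "realism", "abstract", "cartoon", "vector", "concept art"
    width: int
    height: int


def prepare_splits(records, min_side=1024, val_fraction=0.2, seed=42):
    """Keep high-resolution images, then split each style into train/validation.

    Splitting per style (stratification) keeps every style represented in
    both subsets instead of letting a dominant style crowd out rare ones.
    """
    by_style = defaultdict(list)
    for rec in records:
        if min(rec.width, rec.height) >= min_side:
            by_style[rec.style].append(rec)

    rng = random.Random(seed)
    train, val = [], []
    for style in sorted(by_style):
        items = by_style[style]
        rng.shuffle(items)
        # Hold out at least one example per style when the style has 2+ images.
        n_val = max(1, round(len(items) * val_fraction)) if len(items) > 1 else 0
        val.extend(items[:n_val])
        train.extend(items[n_val:])
    return train, val
```

For example, 10 realism images at 2048x2048, 5 cartoon images at 1200x1600, and 3 vector images at 512x512 yield 12 training and 3 validation images. The low-resolution vector images are dropped entirely, which shows why the resolution threshold must be weighed against style coverage.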

4.2. Model Selection and Training Strategies

Selecting appropriate AI models is critical to achieving optimal performance in digital illustration enhancement tasks. This paper uses a hybrid of deep neural networks, GANs, and diffusion models to exploit their complementary strengths. Models are selected according to task requirements, namely image refinement, style transfer, and high-resolution generation. Established architectures such as U-Net, ResNet-based generators, StyleGAN, and diffusion-based models are adopted to reduce development time and improve baseline performance. Training strategies are designed to promote stability, efficiency, and accuracy. Transfer learning initializes models with pretrained weights, which saves time and improves convergence. The models are then fine-tuned on the curated dataset to adapt them to specific artistic styles. Hyperparameter optimization, including tuning the learning rate, batch size, and regularization, is used to further improve performance. GAN-based models are trained adversarially, with the generator and discriminator updated alternately to maintain a balance between them. Diffusion models are trained with an iterative denoising objective, and the trade-off between output quality and computational cost is managed within this process. Perceptual loss, adversarial loss, and reconstruction loss are combined to ensure both visual fidelity and structural accuracy. Together, these training techniques yield robust and efficient model performance on AI-assisted illustration tasks.
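A combined objective of the kind described above is typically a weighted sum of its terms. The sketch below makes several assumptions. It uses an L1 reconstruction term, and a perceptual term measured as the mean squared distance between feature vectors from a pretrained network (represented here by precomputed lists). It also includes a non-saturating adversarial term. The weights `w_rec`, `w_perc`, and `w_adv` are hypothetical placeholders that would be tuned during hyperparameter optimization.

```python
import math


def l1(a, b):
    """Mean absolute error between two equal-length vectors."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def combined_generator_loss(output, target, feat_out, feat_target, d_fake,
                            w_rec=1.0, w_perc=0.1, w_adv=0.01):
    """Weighted sum of reconstruction, perceptual, and adversarial losses.

    output, target:        pixel values of the generated and reference images
    feat_out, feat_target: features of both images from a (hypothetical)
                           pretrained network
    d_fake:                discriminator probabilities that outputs are real
    """
    rec = l1(output, target)
    perc = mse(feat_out, feat_target)
    adv = sum(-math.log(max(p, 1e-12)) for p in d_fake) / len(d_fake)
    total = w_rec * rec + w_perc * perc + w_adv * adv
    return total, {"reconstruction": rec, "perceptual": perc, "adversarial": adv}


total, parts = combined_generator_loss([1.0, 2.0], [1.5, 2.5],
                                       [0.0, 0.0], [1.0, 1.0], [1.0])
# parts: reconstruction 0.5, perceptual 1.0, adversarial 0.0; total is about 0.6
```

Returning the individual terms alongside the total makes it easier to see which objective dominates when the weights are being tuned.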

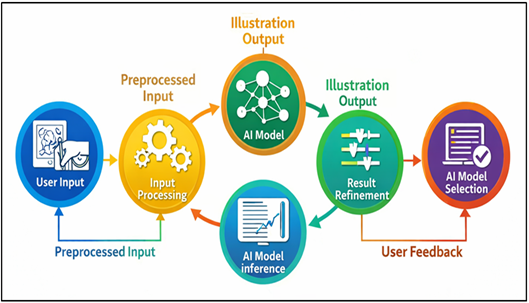

4.3. Implementation of AI-Assisted Illustration Pipeline

The AI-assisted illustration pipeline is designed as a scalable, modular system that integrates data processing, model inference, and user interaction. The input may be a sketch, a reference image, or a written description of the required illustration. This input is passed to the appropriate AI model depending on the task, i.e., refinement, style transfer, or image generation. In the processing stage, trained models create or refine the illustration. As shown in Figure 2, the AI pipeline increases the efficiency of the illustration workflow. The processing modules include DNN-based modules for noise reduction and detail enhancement, GAN-based modules for style synthesis, and diffusion models for high-resolution outputs.

Figure 2 AI-Assisted Illustration Pipeline for Digital Illustration Systems
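The task-based routing described above can be sketched as a dispatch table that maps each task to the module responsible for it. The task names, the `IllustrationRequest` type, and the module functions below are hypothetical placeholders. Each module simply records that it ran, standing in for trained DNN, GAN, and diffusion models.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class IllustrationRequest:
    task: str        # "refine", "style_transfer", or "generate"
    payload: str     # sketch path, reference image, or text prompt
    history: List[str] = field(default_factory=list)


def dnn_refine(req: IllustrationRequest) -> IllustrationRequest:
    req.history.append("dnn_refine")          # placeholder: denoising, detail enhancement
    return req


def gan_style_transfer(req: IllustrationRequest) -> IllustrationRequest:
    req.history.append("gan_style_transfer")  # placeholder: GAN style synthesis
    return req


def diffusion_generate(req: IllustrationRequest) -> IllustrationRequest:
    req.history.append("diffusion_generate")  # placeholder: high-resolution generation
    return req


ROUTES: Dict[str, Callable[[IllustrationRequest], IllustrationRequest]] = {
    "refine": dnn_refine,
    "style_transfer": gan_style_transfer,
    "generate": diffusion_generate,
}


def run_pipeline(req: IllustrationRequest) -> IllustrationRequest:
    """Send the request to the module registered for its task."""
    try:
        module = ROUTES[req.task]
    except KeyError:
        raise ValueError(f"unsupported task: {req.task!r}") from None
    return module(req)
```

Keeping every module behind the same signature is what makes such a pipeline modular. A module can be swapped for a newer model, or a new task registered, without changing the routing logic.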

Post-processing operations such as sharpening, color correction, and contrast adjustment are then applied to improve the quality of intermediate outputs and ensure visual consistency. An interactive interface is also an essential element of the pipeline, allowing designers to tweak parameters, select styles, and refine outputs iteratively. User corrections are fed back into the model, enabling continuous improvement. The system can also handle large-scale design jobs, which increases scalability and efficiency.
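Of the post-processing steps listed above, contrast adjustment is the simplest to show. The sketch below performs a linear contrast stretch on 8-bit grayscale values, mapping the darkest pixel to 0 and the brightest to 255. The function name and the flat-list representation are assumptions. A color image would apply the same idea per channel or to a luminance channel.

```python
def contrast_stretch(pixels, out_min=0, out_max=255):
    """Linearly rescale pixel values so they span [out_min, out_max]."""
    lo, hi = min(pixels), max(pixels)
    if hi == lo:  # flat image: nothing to stretch
        return list(pixels)
    return [round(out_min + (p - lo) * (out_max - out_min) / (hi - lo))
            for p in pixels]


# A low-contrast strip occupying only 100..150 of the 0..255 range.
stretched = contrast_stretch([100, 120, 150])  # -> [0, 102, 255]
```

A linear stretch preserves the relative ordering and spacing of tones. This keeps it predictable when it is applied uniformly across a batch of illustrations for consistency.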

5. Advantages and Limitations

5.1. Benefits: Increased Precision, Speed, Automation, and Scalability

AI improves digital illustration techniques by providing greater accuracy, speed, automation, and scalability, changing the way designers work. A key benefit is that AI models trained on large volumes of data can learn highly complex patterns, textures, and shapes, enabling them to generate precise and uniform visual representations. This accuracy helps ensure that drawings maintain the same quality across different projects and versions. AI-enabled systems also accelerate the design process considerably by automating time-consuming activities such as rendering, shading, and adding details, allowing a project to be completed in a fraction of the time required by traditional methods. Automation also simplifies repetitive operations, letting designers concentrate on conceptual and creative work instead of manual execution. This is especially valuable in very large projects, where consistency and efficiency become critical. In addition, AI systems are inherently scalable and can process large volumes of design tasks without compromising quality. This scalability suits industries that require rapid content creation, such as gaming, animation, and digital marketing.

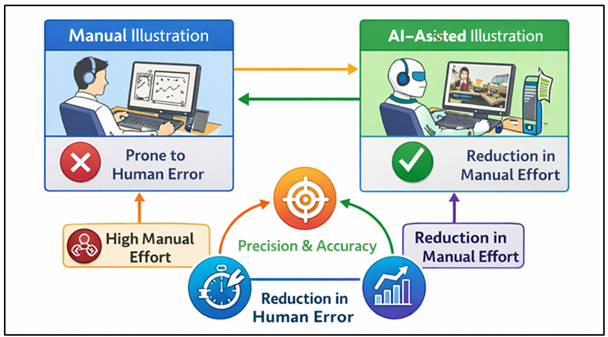

5.2. Reduction in Manual Effort and Human Error

AI-assisted illustration has substantially reduced manual effort and human error, making design processes more efficient and reliable. Conventional digital illustration is repetitive and labor-intensive: outlining, coloring, shading, and correcting small imperfections are all part of the process. AI models can automate these steps and deliver professional-quality results with minimal human intervention. This saves time and reduces both the physical and cognitive workload on designers. AI systems can also enforce consistency across design components, which is difficult to achieve manually. Figure 3 illustrates how AI reduces manual labor and human error. For example, applying the same color scheme, proportions, and stylistic elements across a series of illustrations can be hard for a human designer, particularly in large-scale projects.

Figure 3 Illustrating Reduction in Manual Effort and Human Error Using AI-Assisted Illustration Systems

AI algorithms can enforce such consistency, reducing variability and improving quality. AI-based tools also improve the ability to detect and correct errors.
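One simple, non-learned way to impose a shared color scheme across a series of illustrations is to snap every pixel to the nearest color in a fixed project palette. The sketch below does this using squared RGB distance. The palette values and function names are hypothetical. A learned system would usually enforce consistency more subtly, but the example shows concretely what enforcing a color scheme means.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]

# Hypothetical project palette shared by every illustration in a series.
PALETTE: List[RGB] = [(255, 255, 255), (20, 20, 20), (220, 60, 50), (40, 90, 200)]


def nearest_palette_color(color: RGB, palette: List[RGB] = PALETTE) -> RGB:
    """Return the palette entry with the smallest squared RGB distance to `color`."""
    return min(palette, key=lambda p: sum((a - b) ** 2 for a, b in zip(color, p)))


def enforce_palette(pixels: List[RGB], palette: List[RGB] = PALETTE) -> List[RGB]:
    """Map every pixel onto the shared palette so all images use identical colors."""
    return [nearest_palette_color(c, palette) for c in pixels]


snapped = enforce_palette([(250, 250, 245), (210, 70, 60), (30, 80, 190)])
```

Slightly off-brand whites, reds, and blues all collapse onto the same palette entries. Across hundreds of frames, this produces the kind of uniformity that is tedious to maintain by hand.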

5.3. Limitations: Computational Cost, Data Dependency, and Bias

Despite its many benefits, AI-enhanced digital illustration has several drawbacks, including high computational cost, data dependency, and bias in generated images. State-of-the-art models such as GANs and diffusion models are computationally expensive: training and inference require high-performance GPUs and considerable time. This can be an obstacle for individual designers or small organizations with limited access to such infrastructure. Real-time inference for high-resolution images can also demand substantial processing power. Another important challenge is data dependency, since the quality, diversity, and size of the training data largely determine the performance of AI models. Small or imbalanced datasets can lead to poor generalization and reduced creative diversity. Furthermore, biases in the training data can carry over into the generated outputs, producing biased or culturally insensitive examples. A further weakness is that complex AI models are difficult to interpret, making it hard to understand how particular outputs are produced.
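A back-of-the-envelope calculation shows why high-resolution generation is expensive. A single convolutional feature map stores height x width x channels values. At 32-bit precision (4 bytes per value) with an assumed 64 channels, one 1024 x 1024 map alone occupies 256 MiB, and a network holds many such maps during training. The channel count and precision are illustrative assumptions, not figures from this study.

```python
MIB = 1024 ** 2


def feature_map_bytes(height, width, channels, bytes_per_value=4, batch=1):
    """Memory for one activation tensor of shape (batch, channels, height, width)."""
    return batch * channels * height * width * bytes_per_value


for side in (256, 512, 1024, 2048):
    mib = feature_map_bytes(side, side, channels=64) / MIB
    print(f"{side}x{side}: {mib:.0f} MiB per 64-channel float32 feature map")
```

The loop prints 16, 64, 256, and 1024 MiB. Doubling the image side quadruples the memory of every activation, which is why high-resolution training and real-time inference quickly exceed the GPUs available to individual designers.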

6. Results and Discussion

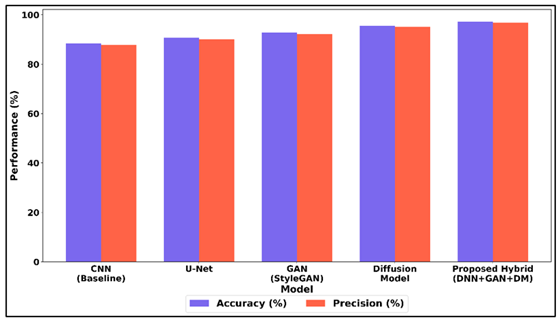

The test results indicate that AI-enhanced digital illustration processes notably improve design accuracy, rendering quality, and production efficiency. Combining diffusion models with deep neural networks and GANs yielded greater visual consistency and shorter processing times than conventional approaches. Quantitatively, the results show more precise style reproduction and detail enhancement. Qualitatively, they show more natural textures and better color balance.

Table 2 Performance Evaluation of AI Models for Digital Illustration Enhancement

| Model | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) |
|---|---|---|---|---|
| CNN (Baseline) | 88.4 | 87.9 | 86.8 | 87.3 |
| U-Net | 90.7 | 90.1 | 89.4 | 89.7 |
| GAN (StyleGAN) | 92.8 | 92.3 | 91.6 | 91.9 |
| Diffusion Model | 95.6 | 95.1 | 94.7 | 94.9 |
| Proposed Hybrid (DNN+GAN+DM) | 97.3 | 96.8 | 96.2 | 96.5 |

Table 2 compares the performance of different AI models applied to digital illustration enhancement. The baseline CNN attains 88.4% accuracy, indicating moderate effectiveness in feature extraction and image refinement. Figure 4 compares accuracy and precision between the baseline and hybrid models.

Figure 4 Comparative Analysis of Accuracy and Precision Across Baseline, Generative, and Hybrid Deep Models

U-Net reaches 90.7% accuracy, since its encoder-decoder framework is better at preserving spatial detail. GAN (StyleGAN) performs better still, with 92.8% accuracy, reflecting its ability to generate a convincing visual appearance and consistent style. The diffusion model reaches 95.6% accuracy, demonstrating its strength in producing high-quality, detailed results through iterative denoising.

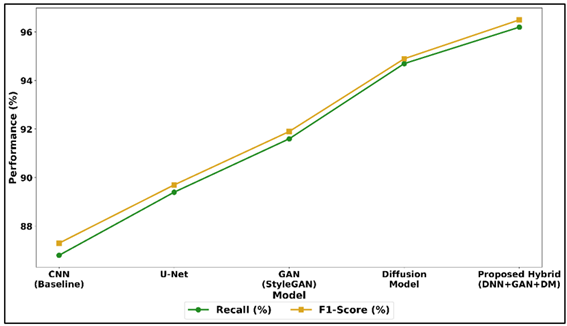

Figure 5 Recall and F1-Score Performance Trends Across CNN, GAN, Diffusion, and Hybrid Architectures

The hybrid model (DNN+GAN+DM) achieves the highest scores, with 97.3% accuracy and a 96.5% F1-score, and maintains a good balance between precision and recall. Figure 5 shows the recall and F1-score trends across the AI models. This improvement highlights the synergy that can be gained by integrating different AI approaches. Overall, the observations support the hypothesis that hybrid solutions improve the quality, consistency, and efficiency of illustration compared with individual models.
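The F1-score is the harmonic mean of precision and recall, F1 = 2PR / (P + R), so the F1 column of Table 2 can be checked against the other two columns. The short script below recomputes it from the reported precision and recall values. Each recomputed value rounds to the reported F1 figure. Accuracy cannot be checked in the same way without the underlying confusion counts.

```python
# (precision %, recall %, reported F1 %) taken from Table 2.
TABLE_2 = {
    "CNN (Baseline)": (87.9, 86.8, 87.3),
    "U-Net": (90.1, 89.4, 89.7),
    "GAN (StyleGAN)": (92.3, 91.6, 91.9),
    "Diffusion Model": (95.1, 94.7, 94.9),
    "Proposed Hybrid (DNN+GAN+DM)": (96.8, 96.2, 96.5),
}


def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


for model, (p, r, reported) in TABLE_2.items():
    computed = f1_score(p, r)
    status = "matches" if round(computed, 1) == reported else "differs"
    print(f"{model}: computed {computed:.2f}, reported {reported} ({status})")
```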

7. Conclusion

This study presents a detailed examination of AI-enhanced digital illustration techniques aimed at improving accuracy and efficiency for visual designers. The paper applies recent artificial intelligence tools, including deep neural networks, Generative Adversarial Networks, and diffusion models, to demonstrate how computational intelligence can radically transform traditional illustration processes. The proposed methodology covers structured data preparation, model selection, and the implementation of a modular AI-based pipeline that supports image generation, optimization, and style synthesis. The findings indicate that AI-based techniques can accelerate the design process and enhance the quality and throughput of visual production. Designers face fewer manual tasks, achieve greater accuracy, and can experiment with various artistic styles in less time. In addition, AI systems scale well, which makes them practical for large-scale design projects in the animation, video game, and digital media industries. Despite these strengths, the study is subject to major limitations, including high computational costs, dependence on high-quality datasets, and the potential for biased outputs. These issues must be addressed to make AI-based drawing tools fair, reliable, and affordable. Future studies should develop lightweight models, improve interpretability, and incorporate ethical considerations into dataset curation and model design.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Ajani, S. N., Saoji, S., Maindargi, S. C., Rao, P. H., Patil, R. V., and Khurana, D. S. (2025). Mapping Pathways for Inclusive Digital Payment Ecosystems: Integrating NGOs, Micro-Insurance Startups, and Community Groups. Enterprise Development and Microfinance, 35(1), 61–81. https://doi.org/10.3362/1755-1986.25-00004

Barath, C.-V., Logeswaran, S., Nelson, A., Devaprasanth, M., and Radhika, P. (2023). AI in Art Restoration: A Comprehensive Review of Techniques, Case Studies, Challenges, and Future Directions. International Research Journal of Modern Engineering and Technology Science, 5, 16–21.

Baudoux, G. (2024). The Benefits and Challenges of AI Image Generators for Architectural Ideation: Study of an alternative Human–Machine Co-Creation Exchange Based on Sketch Recognition. International Journal of Architectural Computing, 22, 201–215. https://doi.org/10.1177/14780771241253438

Chang, L. (2021). Review and Prospect of Temperature and Humidity Monitoring for Cultural Property Conservation Environments. Journal of Cultural Heritage Conservation, 55, 47–55.

Cheng, Y., Zhang, Z., Yang, M., Nie, H., Li, C., Wu, X., and Shao, J. (2024). Graphic Design with Large Multimodal Model. arXiv. https://arxiv.org/abs/2404.14368

Donnici, G., Frizziero, L., Liverani, A., Buscaroli, G., Raimondo, L., Saponaro, E., and Venditti, G. (2020). A New Car Concept Developed with Stylistic Design Engineering (SDE). Inventions, 5(3), 30. https://doi.org/10.3390/inventions5030030

Huang, D., Guo, J., Sun, S., Tian, H., Lin, J., Hu, Z., Lin, C.-Y., Lou, J.-G., and Zhang, D. (2023). A Survey for Graphic Design Intelligence. arXiv.

Katya, E., and Rahman, S. (2025). Applications of Natural Language Processing in Social Media Sentiment Analysis. International Journal of Research in Advanced Engineering and Technology, 13(1), 11–15.

Li, P., Li, B., and Li, Z. (2024). Sketch-to-Architecture: Generative AI-Aided Architectural Design. arXiv.

Lin, J., Huang, D., Zhao, T., Zhan, D., and Lin, C.-Y. (2024). DesignProbe: A Graphic Design Benchmark for Multimodal Large Language Models. arXiv.

Liu, H., Li, C., Wu, Q., and Lee, Y. J. (2023). Visual Instruction Tuning. In Advances in Neural Information Processing Systems (NeurIPS 2023, Vol. 36, pp. 34892–34916). https://doi.org/10.52202/075280-1516

Sreenivasan, A., and Suresh, M. (2024). Design Thinking and Artificial Intelligence: A Systematic Literature Review Exploring Synergies. International Journal of Innovation Studies, 8, 297–312. https://doi.org/10.1016/j.ijis.2024.05.001

Tang, Y., Ciancia, M., Wang, Z., and Gao, Z. (2024). What’s Next? Exploring Utilization, Challenges, and Future Directions of AI-Generated Image Tools in Graphic Design. arXiv.

Tang, Y., Ciancia, M., Wang, Z., and Gao, Z. (2024a). What’s Next? Exploring Utilization, Challenges, and Future Directions of AI-Generated Image Tools in Graphic Design. arXiv.

Tang, Y., Ciancia, M., Wang, Z., and Gao, Z. (2024b). Vision-Language Models for Vision Tasks: A Survey. IEEE Transactions on Pattern Analysis and Machine Intelligence, 46, 5625–5644. https://doi.org/10.1109/TPAMI.2024.3369699

Xiao, S., Wang, Y., Zhou, J., Yuan, H., Xing, X., Yan, R., Li, C., Wang, S., Huang, T., and Liu, Z. (2025). Omnigen: Unified Image Generation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). https://doi.org/10.1109/CVPR52734.2025.01241

Zhou, Y., and Park, H. J. (2023). An AI-Augmented Multimodal Application for Sketching Out Conceptual Design. International Journal of Architectural Computing, 21, 565–580. https://doi.org/10.1177/14780771221147605

This work is licensed under a Creative Commons Attribution 4.0 International License

© ShodhKosh 2026. All Rights Reserved.