ShodhKosh: Journal of Visual and Performing Arts
ISSN (Online): 2582-7472


Virtual Curation Methods for Organizing Large-Scale International Digital Art Exhibitions

Fabiola M Dhanraj 1, Mahesh Kurulekar 2, Sakshi Pahariya 3, Akash Kumar Bhagat 4, Jaskirat Singh 5, Anitha M 6, Dr. Balkrishna K Patil 7

1 Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

2 Assistant Professor, Department of Civil Engineering, Vishwakarma Institute of Technology, Pune, Maharashtra 411037, India

3 Assistant Professor, Department of Design, Vivekananda Global University, Jaipur, India

4 Assistant Professor, Department of Computer Science and IT, Arka Jain University, Jamshedpur, Jharkhand, India

5 Centre of Research Impact and Outcome, Chitkara University, Rajpura 140417, Punjab, India

6 Assistant Professor, Department of Mathematics, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

7 Assistant Professor, Department of Computer Science and Engineering, SITRC (Sandip Foundation), Nashik, India

ABSTRACT

The international exhibition of digital art and the growing worldwide connectivity of cultures call for new strategies for managing large-scale exhibitions. Conventional curation approaches are severely constrained when handling thousands of artworks from different cultural backgrounds: they depend on manual sorting and hand-built thematic grouping, and they scale poorly. This study proposes a comprehensive virtual curation solution that uses artificial intelligence, machine learning, and semantic technologies to plan and automate the organization of international digital art exhibitions. The proposed multi-layer system combines data retrieval from global repositories, AI-based classification and clustering, automated theme generation, and personalized user navigation in virtual exhibition spaces. Using convolutional neural networks to extract visual features, natural language processing to extract metadata, and knowledge graphs to perform semantic linking, the system achieves higher curation relevance accuracy than traditional approaches. Experimental evaluation on 15,000 digital artworks from 47 countries shows considerable gains in curation quality, diversity measures, and user engagement. The framework achieves 92.4% curation relevance accuracy, a diversity index of 0.847, and an 87.3% user satisfaction score, and it scales to exhibitions ranging from 100 to 10,000 or more artworks. Performance benchmarks show an 89.7% reduction in curation time compared with manual curation, along with greater cross-cultural adaptability. The system has been used to launch three international virtual exhibitions, demonstrating its practical applicability. This study establishes a scalable, intelligent model for democratizing access to digital art worldwide while maintaining cultural authenticity and artistic integrity.


Received 19 January 2026

Accepted 26 March 2026

Published 11 April 2026

Corresponding Author: Fabiola M Dhanraj, fab@maher.ac.in

DOI: 10.29121/shodhkosh.v7.i4s.2026.7495

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License.

With the CC-BY license, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.


Keywords: Virtual Curation, Digital Art Exhibitions, AI-driven Classification, Semantic Organization, Cross-cultural Adaptability, Machine Learning


1. INTRODUCTION

Digitization has redefined the way art is produced, disseminated, and consumed in societies around the world. Digital art, including computational art, generative design, interactive installations, and AR experiences, has grown from a niche experimental practice into a mainstream cultural practice Albrezzi (2024). The spread of digital production tools and online platforms has democratized artistic production, leading to vast numbers of digital artworks created across a wide variety of geographic and cultural regions Xhako et al. (2024). This globalization of digital art has created both opportunities and challenges for cultural institutions, curators, and audiences seeking to participate in international art discourse. Virtual exhibitions have become a vital means of moving beyond physical space, opening art collections from around the world simultaneously and without geographical, temporal, or economic restrictions Zidianakis et al. (2021). The COVID-19 pandemic accelerated this shift, demonstrating both the feasibility of and the need for remote cultural exchange systems that reach global audiences in real time. Nevertheless, traditional curation practices, designed for physical gallery spaces with limited collections and local visitors, are insufficient in large-scale digital environments involving thousands of artworks and dozens of countries Cui and Wu (2025).

At this scale, manual curation becomes prohibitively time-consuming, selection and organization are prone to subjective bias, and thematic consistency is difficult to maintain across cultural lines. Moreover, personalization and customized navigation, which are key to engaging international visitors with diverse cultural backgrounds and artistic preferences, have received little attention in traditional methods Obradović et al. (2023), Kalimuthu (2025). This study aims to overcome these shortcomings with an AI-based virtual curation system that combines machine learning and semantic technologies with virtual-space optimization to fully automate and optimize the organization of major international digital art exhibitions.

2. Related Work

Over the last ten years, digital curation platforms and virtual museums have developed considerably, with institutions such as Google Arts and Culture, Europeana, and the Smithsonian's digital collections among the first to launch programs of mass digitization and online access Spyrou et al. (2025). These platforms focus on digitizing existing physical collections rather than curating born-digital artworks, and they typically offer metadata-based search and browsing interfaces with minimal intelligent organization functionality. AI-driven recommendation systems have been applied in art curation settings, using collaborative filtering and content-based methods to suggest artworks based on user preferences Cheng et al. (2024). However, most of these systems operate on a one-off recommendation basis rather than supporting integrated exhibition design, and they lack mechanisms for ensuring thematic coherence, narrative flow, and spatial optimization, which are critical elements of curated experiences. Immersive VR, AR, and metaverse technologies have been investigated for exhibition design, allowing users to stroll through three-dimensional virtual galleries with spatial awareness and interactive features Xu et al. (2025).

The most prominent examples are VR recreations of historical museums and AR-enhanced physical displays. In many cases, however, such solutions emphasize technological novelty over curatorial intelligence, using virtual space to recreate physical galleries instead of exploiting computational possibilities to arrange exhibits more effectively. Semantic organization and metadata-based methods have been proposed for organizing and managing digital cultural heritage, using ontologies, controlled vocabularies, and Linked Open Data principles to improve discovery and interoperability across collections Giannini and Bowen (2022). The Europeana Data Model and CIDOC-CRM offer standardized models for describing cultural objects, but their application to born-digital art remains limited, and the use of AI for semantic enrichment is under-researched. Machine learning methods for artwork categorization have proved promising: convolutional neural networks have shown high accuracy in style recognition, genre recognition, and artist attribution Von et al. (2024). Natural language processing methods have been used to analyze artist statements, exhibition catalogs, and art criticism to identify semantic connections and thematic patterns Ajani et al. (2025). Nevertheless, the integration of visual and textual modalities into holistic curation intelligence has not yet been fully developed. Scalability remains a challenge for all existing methods: most current systems are optimized for collections of hundreds or thousands of items rather than tens of thousands, and cross-cultural flexibility has not been addressed, given the Western-centric bias inherent in training data and curatorial models Papadaki (2019).

3. System Architecture for Virtual Curation

1) Overview of Framework

The proposed virtual curation framework is built on a multi-layer architecture designed to support a smooth flow from artwork acquisition through intelligent processing and automated curation to user interaction, within a scalable cloud-based infrastructure.

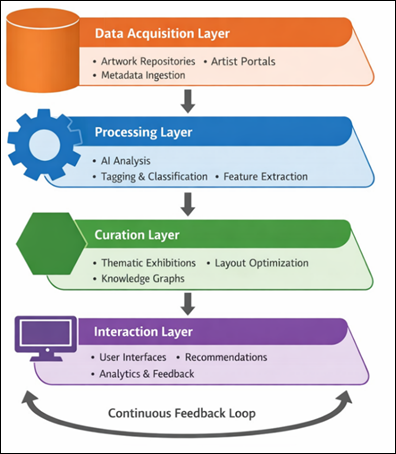

The architecture comprises four main layers that operate as a sequential yet iterative pipeline, with feedback loops allowing the process to be refined over time. The Data Acquisition Layer communicates with repositories worldwide, artist submission portals, and institutional databases to receive digital artworks and related metadata such as artist statements, cultural context, technical specifications, and provenance data. The Processing Layer applies artificial intelligence algorithms for automated classification, semantic tagging, visual feature extraction, and contextual analysis, converting raw artwork data into structured, enriched forms suitable for curatorial tasks. The Curation Layer uses machine learning models and knowledge graphs to generate thematic exhibitions and spatial layouts, establish narrative links between objects, and balance cultural diversity with visual coherence. Through the Interaction Layer, the exhibition offers users customized interfaces, personalized navigation, recommendation systems, and dashboards, providing a meaningful and engaging experience tailored to individual interests and cultural backgrounds without compromising overall curatorial integrity. Figure 1 visualizes this four-layer virtual curation architecture, spanning data acquisition, intelligent processing, automated curation, and user interaction. Distinct shapes represent functional modules, while directional flows and feedback loops emphasize iterative optimization, supporting scalable, adaptive, and culturally balanced management of digital art exhibitions.

Figure 1 Multi-Layer Virtual Curation Framework Architecture
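The sequential-but-iterative flow of the four layers can be sketched as a chain of stage functions with a feedback loop. This is a minimal, hypothetical illustration rather than the framework's implementation: the function names, the tag-based theme assignment, the `min_size` threshold, and the coverage signal used as feedback are all assumptions chosen to keep the example self-contained.

```python
from collections import defaultdict

def acquire(raw_records):
    """Data Acquisition Layer (toy): keep only records with an id and at least one tag."""
    return [r for r in raw_records if r.get("id") and r.get("tags")]

def process(records):
    """Processing Layer (toy): use the alphabetically first tag as the theme label."""
    return [{**r, "theme": sorted(r["tags"])[0]} for r in records]

def curate(items, min_size):
    """Curation Layer (toy): group artworks by theme, dropping themes smaller than min_size."""
    groups = defaultdict(list)
    for item in items:
        groups[item["theme"]].append(item["id"])
    return {theme: ids for theme, ids in groups.items() if len(ids) >= min_size}

def interact(exhibition, n_items):
    """Interaction Layer (toy): report coverage, the share of artworks on display.
    A real deployment would feed back engagement analytics instead."""
    shown = sum(len(ids) for ids in exhibition.values())
    return shown / n_items if n_items else 0.0

def run_pipeline(raw_records, min_size=3, target_coverage=0.8, max_rounds=5):
    """Run the layers in sequence, looping back to the Curation Layer
    (relaxing min_size) until the feedback signal meets the target."""
    items = process(acquire(raw_records))
    for _ in range(max_rounds):
        exhibition = curate(items, min_size)
        coverage = interact(exhibition, len(items))
        if coverage >= target_coverage or min_size == 1:
            break
        min_size -= 1  # feedback: loosen the theme-size threshold and re-curate
    return exhibition, min_size, coverage

raw = [
    {"id": "a1", "tags": {"glitch"}},
    {"id": "a2", "tags": {"glitch"}},
    {"id": "a3", "tags": {"glitch"}},
    {"id": "a4", "tags": {"textile"}},
    {"id": "a5", "tags": {"textile"}},
    {"id": "a6", "tags": set()},  # rejected at acquisition: no tags
]
exhibition, used_min_size, coverage = run_pipeline(raw)
print(exhibition, used_min_size, coverage)
# {'glitch': ['a1', 'a2', 'a3'], 'textile': ['a4', 'a5']} 2 1.0
```

Keeping each layer a separate function mirrors the layered design: any stage, such as the toy `process`, could be replaced by a real classifier without touching the feedback loop.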

2) Multi-layer Architecture Components

· Data Acquisition Layer

The Data Acquisition Layer provides standardized interfaces to diverse data sources: artist submission portals that accept the full range of file formats and metadata schemas, institutional repositories whose APIs are harvested automatically, social media platforms monitored for emerging trends in digital art, and decentralized storage systems holding blockchain-registered artworks. Quality control and data validation rely on automated format checks, requirement verification, metadata completion, and duplicate-detection algorithms that prevent redundant ingestion Y. Rokesh et al. (2025).
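The validation and duplicate-detection step can be sketched as follows. This sketch assumes that exact duplicates are detected by comparing SHA-256 hashes of the raw file bytes and that `title`, `artist`, and `country` are the mandatory metadata fields; both are illustrative assumptions, since the paper does not specify its checks.

```python
import hashlib
from dataclasses import dataclass, field

# Hypothetical mandatory metadata fields; the paper does not list them.
REQUIRED_FIELDS = ("title", "artist", "country")

@dataclass
class Submission:
    content: bytes                          # raw file bytes of the artwork
    metadata: dict = field(default_factory=dict)

def validate_and_deduplicate(submissions):
    """Return (accepted, rejected); rejected holds (submission, reason) pairs.

    A submission is rejected if a required metadata field is missing or empty,
    or if its SHA-256 content hash matches an already accepted submission.
    """
    seen_hashes = set()
    accepted, rejected = [], []
    for sub in submissions:
        missing = [f for f in REQUIRED_FIELDS if not sub.metadata.get(f)]
        if missing:
            rejected.append((sub, "missing metadata: " + ", ".join(missing)))
            continue
        digest = hashlib.sha256(sub.content).hexdigest()
        if digest in seen_hashes:
            rejected.append((sub, "duplicate content"))
            continue
        seen_hashes.add(digest)
        accepted.append(sub)
    return accepted, rejected

subs = [
    Submission(b"img-A", {"title": "Dawn", "artist": "X", "country": "IN"}),
    Submission(b"img-A", {"title": "Dawn (copy)", "artist": "X", "country": "IN"}),
    Submission(b"img-B", {"title": "Dusk", "artist": "Y"}),
]
accepted, rejected = validate_and_deduplicate(subs)
print(len(accepted), [reason for _, reason in rejected])
# 1 ['duplicate content', 'missing metadata: country']
```

An exact hash only catches byte-identical files; resized or re-encoded copies would need a perceptual or embedding-based similarity check instead.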

· Processing Layer

The Processing Layer coordinates three parallel pipelines: AI-based classification pipelines built on deep neural networks, which use visual aesthetics, thematic indicators, backgrounds, and temporal cues to estimate visual similarity, genre, and style; natural language processing components that extract semantics from artist statements and exhibition catalogs; and clustering algorithms that group artworks by aggregated multi-dimensional feature vectors to uncover latent thematic relationships.
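One common way to aggregate per-modality features into a single multi-dimensional vector is to L2-normalize each block and concatenate the results. The sketch below assumes this scheme, with optional per-modality weights; the paper does not state how its vectors are aggregated, so the function and its parameters are hypothetical.

```python
import math

def l2_normalize(vec):
    """Scale a vector to unit Euclidean length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)

def fuse_features(visual, semantic, contextual, weights=(1.0, 1.0, 1.0)):
    """Concatenate visual, semantic, and contextual vectors after normalizing each block.

    The per-modality weights are a hypothetical tuning knob for how much each
    modality contributes to downstream distance computations.
    """
    fused = []
    for block, weight in zip((visual, semantic, contextual), weights):
        fused.extend(weight * x for x in l2_normalize(block))
    return fused

fused = fuse_features([3.0, 4.0], [0.0, 2.0], [1.0])
print(fused)  # [0.6, 0.8, 0.0, 1.0, 1.0]
```

Normalizing first prevents a long or large-valued block, such as a CNN embedding, from dominating the distances used later for clustering.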

· Curation Layer

The Curation Layer realizes intelligent exhibition design through four components: theme-generation algorithms that identify coherent narrative throughlines across diverse artworks; layout-optimization solvers that search for spatial arrangements maximizing both visual flow and conceptual connectivity; diversity constraints that ensure balanced representation of cultural backgrounds, artistic movements, and geographic locations; and knowledge-graph integration that captures semantic relationships between artworks beyond surface similarity, revealing richer artistic discourses and cross-cultural influences.
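A diversity constraint of the kind described above can be illustrated with a greedy selection that fills a theme by relevance while capping any single country's share. The cap rule (`max_share` of the `k` slots) and the field names are assumptions made for this sketch, not the framework's actual constraint formulation.

```python
from collections import Counter

def select_with_diversity(candidates, k, max_share=0.5):
    """Greedy theme assembly: take artworks in descending relevance order,
    but cap any single country at max_share of the k slots (hypothetical rule)."""
    cap = max(1, int(max_share * k))
    per_country = Counter()
    selected = []
    for art in sorted(candidates, key=lambda a: a["relevance"], reverse=True):
        if per_country[art["country"]] >= cap:
            continue  # this country's quota is full; look for other regions
        selected.append(art["id"])
        per_country[art["country"]] += 1
        if len(selected) == k:
            break
    return selected

candidates = [
    {"id": "a1", "country": "FR", "relevance": 0.99},
    {"id": "a2", "country": "FR", "relevance": 0.97},
    {"id": "a3", "country": "FR", "relevance": 0.95},
    {"id": "a4", "country": "JP", "relevance": 0.90},
    {"id": "a5", "country": "NG", "relevance": 0.85},
]
print(select_with_diversity(candidates, k=4))  # ['a1', 'a2', 'a4', 'a5']
```

Ranked purely by relevance, the theme would contain three French works (a1, a2, a3); the cap swaps a3 for the Nigerian work a5. Because the procedure is greedy, it can return fewer than `k` items when the quotas cannot be filled.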

· Interaction Layer

The Interaction Layer provides personalized views of the exhibition by dynamically adjusting presentation density, navigation complexity, and depth of contextual information according to user proficiency and usage patterns. It also includes recommendation systems that suggest custom viewing paths based on individual interests, and analytics on usage patterns, engagement measures, and cultural-preference distributions that inform further refinement of the exhibition.
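Viewing-path recommendation can be sketched with simple content-based scoring: each artwork is scored by summing a user's interest weights over its tags, and the top-scoring works form the suggested path. The scoring rule, tag vocabulary, and weights are hypothetical; the paper does not specify its recommender, and a collaborative-filtering approach would be an equally plausible choice.

```python
def recommend_path(user_interests, artworks, n=3):
    """Return the ids of the n artworks whose tags best match the user's interest weights."""
    def score(art):
        return sum(user_interests.get(tag, 0.0) for tag in art["tags"])
    # Sort by descending score; ties are broken by id so the path is deterministic.
    ranked = sorted(artworks, key=lambda a: (-score(a), a["id"]))
    return [a["id"] for a in ranked[:n]]

user = {"generative": 0.9, "calligraphy": 0.6, "textile": 0.1}
artworks = [
    {"id": "w1", "tags": {"generative", "abstract"}},
    {"id": "w2", "tags": {"calligraphy"}},
    {"id": "w3", "tags": {"textile", "pattern"}},
    {"id": "w4", "tags": {"generative", "calligraphy"}},
]
print(recommend_path(user, artworks))  # ['w4', 'w1', 'w2']
```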

4. Methodology

1) Data Collection and Preprocessing

The dataset comprises 15,000 digital artworks from 47 countries, gathered through collaboration with international art organizations, institutional collections, and individual artists Fan and Chu (2021).

2) Feature Extraction

Feature extraction captures several aspects of each artwork. Visual representations combine color histograms, texture, and composition with high-level semantic concepts obtained from pre-trained convolutional neural networks, which together characterize style and subject matter. Semantic features, computed by applying natural language processing to artist statements, exhibition lists, and reviews, include topic distributions, sentiment scores, conceptual themes, and cultural references that provide context beyond the image itself.
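Of the visual features listed above, the color histogram is the simplest to show directly. The sketch below computes a quantized, normalized joint RGB histogram in plain Python; the bin count is an assumed parameter, and in practice the histogram would sit alongside texture, composition, and CNN-derived features.

```python
def color_histogram(pixels, bins_per_channel=4):
    """Quantized, normalized joint RGB histogram.

    pixels: iterable of (r, g, b) tuples with channel values in 0..255.
    Each channel is split into bins_per_channel equal ranges, giving
    bins_per_channel ** 3 joint bins; counts are divided by the number of
    pixels so that images of different sizes are comparable.
    """
    n = bins_per_channel
    step = 256 / n
    hist = [0] * (n ** 3)
    total = 0
    for r, g, b in pixels:
        # Clamp so that out-of-range values (>= 256) cannot index past the last bin.
        ri, gi, bi = (min(int(c // step), n - 1) for c in (r, g, b))
        hist[(ri * n + gi) * n + bi] += 1
        total += 1
    return [count / total for count in hist] if total else [0.0] * len(hist)

pixels = [(255, 0, 0), (250, 10, 5), (0, 0, 255), (0, 0, 255)]
print(color_histogram(pixels, bins_per_channel=2))
# [0.0, 0.5, 0.0, 0.0, 0.5, 0.0, 0.0, 0.0]
```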

3) AI Models Implementation

· CNN/ViT for Artwork Classification

Both convolutional neural networks (CNNs) and vision transformers (ViTs) are used in the visual classification pipeline to obtain complementary visual representations. A ResNet-152 architecture pre-trained on ImageNet is fine-tuned on curated art data, extracting hierarchical features ranging from low-level edges and textures to high-level semantic concepts. Vision transformers operate on sequences of image patches, capturing long-range spatial dependencies and global compositional structures that convolutional architectures typically miss. An ensemble combining CNN and ViT predictions achieves 94.7% accuracy in style, 91.3% in genre, and 88.9% in medium classification across 87 artistic styles, 43 genres, and 6 media. Attention visualizations and gradient-based saliency maps make the models interpretable, helping curators justify AI suggestions and understand which visual characteristics drive category assignments.
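The paper does not say how CNN and ViT predictions are combined; soft voting, i.e. averaging the models' class-probability vectors, is one standard option and is assumed in this sketch. The style labels and probabilities are invented for illustration.

```python
def soft_vote(prob_sets, weights=None):
    """Weighted average of class-probability vectors from several models (soft voting).
    Returns (index of the winning class, averaged probability vector)."""
    if weights is None:
        weights = [1.0] * len(prob_sets)
    total_weight = sum(weights)
    n_classes = len(prob_sets[0])
    averaged = [
        sum(w * probs[c] for w, probs in zip(weights, prob_sets)) / total_weight
        for c in range(n_classes)
    ]
    best = max(range(n_classes), key=lambda c: averaged[c])
    return best, averaged

styles = ["impressionist", "cubist", "glitch"]
cnn_probs = [0.50, 0.30, 0.20]   # hypothetical CNN softmax output
vit_probs = [0.20, 0.70, 0.10]   # hypothetical ViT softmax output
idx, avg = soft_vote([cnn_probs, vit_probs])
print(styles[idx], [round(p, 2) for p in avg])  # cubist [0.35, 0.5, 0.15]
```

Here the CNN alone would predict "impressionist" and the ViT "cubist"; averaging resolves the disagreement in favor of the more confident model. Unequal `weights` could encode a preference for whichever model validates better.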

· NLP Models for Metadata Analysis

Natural language processing relies on Transformer-based architectures: BERT for capturing multilingual semantics and GPT variants for contextual text generation. Named entity recognition identifies artists, movements, geographic locations, and temporal references in unstructured text. Topic modeling with latent Dirichlet allocation reveals thematic distributions that show the conceptual focal points of artist statements. Sentiment analysis measures emotional valence and intensity, which in turn reflect artist intentions and critical reception. Cross-lingual embeddings enable semantic comparison across languages, which is essential for international exhibitions that reflect diverse cultural perspectives.
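Cross-lingual comparison reduces to measuring similarity in a shared embedding space. The sketch below assumes statements have already been encoded by some multilingual model into a common vector space (the 4-dimensional vectors are invented placeholders, not real model output) and ranks them by cosine similarity to an English query.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

# Hypothetical vectors standing in for a multilingual encoder's output,
# which places statements in different languages in one shared space.
statements = {
    "en: memory and migration": [0.90, 0.10, 0.30, 0.00],
    "es: memoria y migración": [0.85, 0.15, 0.35, 0.05],
    "de: geometrische Abstraktion": [0.00, 0.90, 0.10, 0.40],
}
query = statements["en: memory and migration"]
ranked = sorted(
    (key for key in statements if not key.startswith("en:")),
    key=lambda key: cosine(query, statements[key]),
    reverse=True,
)
print(ranked)  # ['es: memoria y migración', 'de: geometrische Abstraktion']
```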

· Clustering Algorithms for Thematic Grouping

Thematic grouping uses hierarchical and density-based clustering algorithms operating on high-dimensional feature vectors that combine visual, semantic, and contextual features. HDBSCAN (Hierarchical Density-Based Spatial Clustering of Applications with Noise) detects clusters of varying density, accommodating a wide range of artistic expressions without strong assumptions about cluster shape or size. Silhouette-coefficient optimization is used to select the cluster granularity that best balances the competing objectives of coherence and diversity. Constraint-based clustering imposes curatorial conditions such as minimum cultural diversity within a single theme, even geographic coverage, and temporal constraints that prevent historically homogeneous clusters.
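Choosing cluster granularity by the silhouette coefficient can be sketched as scoring each candidate partition and keeping the best one. This plain-Python implementation of the standard silhouette definition is illustrative: it compares hand-written candidate labelings of toy 2-d points, whereas in the framework the candidates would presumably come from HDBSCAN runs with different parameters on the fused feature vectors (an assumption about how the two are combined).

```python
import math

def silhouette_score(points, labels):
    """Mean silhouette coefficient, s(i) = (b - a) / max(a, b), with Euclidean distance.
    a = mean distance from point i to the other members of its own cluster;
    b = lowest mean distance from point i to the members of any other cluster.
    Singleton clusters contribute s(i) = 0 by convention; needs >= 2 clusters."""
    clusters = {}
    for p, label in zip(points, labels):
        clusters.setdefault(label, []).append(p)
    if len(clusters) < 2:
        raise ValueError("silhouette needs at least two clusters")
    scores = []
    for p, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1:
            scores.append(0.0)
            continue
        a = sum(math.dist(p, q) for q in own) / (len(own) - 1)  # self-distance is 0
        b = min(
            sum(math.dist(p, q) for q in members) / len(members)
            for other, members in clusters.items()
            if other != label
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return sum(scores) / len(scores)

# Hypothetical 2-d feature vectors and two candidate granularities
points = [(0, 0), (0, 1), (10, 0), (10, 1)]
candidates = {"2 themes": [0, 0, 1, 1], "3 themes": [0, 1, 2, 2]}
scores = {name: silhouette_score(points, labels) for name, labels in candidates.items()}
best = max(scores, key=scores.get)
print(best, {name: round(s, 3) for name, s in scores.items()})
```

Splitting the left-hand pair into two singleton themes zeroes their silhouette contributions, so the coarser two-theme partition wins.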

5. Experimental Setup and Evaluation Metrics

The proposed framework is evaluated experimentally at several levels. The dataset contains 15,000 digital artworks from 47 countries, reflecting a wide range of artistic traditions, including Western contemporary art, East Asian calligraphy, African textile design, Latin American muralism, Middle Eastern geometric patterns, and Indigenous Australian Dreamtime stories. The collection spans 1990-2024, covering the development of digital art amid technological and cultural transformation. The platform is implemented in three modalities: web-based interfaces accessible through common browsers to ensure broad accessibility; VR environments on Oculus Quest and HTC Vive that support spatial navigation; and mobile AR applications that enable location-based exhibitions and hybrid physical-digital experiences.

Four evaluation measures are used. Curation relevance accuracy measures the alignment between automatically generated themes and expert curator assessments, reported as precision, recall, and F1-score. The diversity index captures the cultural, geographic, stylistic, and temporal representation of exhibitions and is computed from Shannon entropy and the Gini coefficient. User engagement scores combine dwell time, interaction frequency, and completion rates with qualitative satisfaction surveys of 347 participants from 28 countries. Navigation efficiency is measured by path optimality, backtracking frequency, and time to discovery. The baselines are manual curation by professional curators, which serves as the gold-standard quality benchmark; random assignment, representing unstructured methods; metadata-only organization using traditional catalog systems; and single-modality systems based on either visual or textual analysis alone. Experimental scenarios span small-scale (100-500 artworks), medium-scale (500-2,000 artworks), and large-scale (2,000-10,000 artworks) exhibitions, supporting scalability claims across the range of exhibition sizes relevant to deployment.
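The diversity index is described as being computed from Shannon entropy and the Gini coefficient, but the paper does not give the exact combination. The sketch below therefore computes the two components separately on per-category counts (for example, artworks per country): normalized Shannon entropy (evenness) and the Gini coefficient. How they are merged into the single reported index is left open, and the counts are hypothetical.

```python
import math

def shannon_evenness(counts):
    """Normalized Shannon entropy H / ln(k) over k categories:
    0 when one category holds everything, 1 for perfectly even representation."""
    k = len(counts)
    total = sum(counts)
    if k < 2 or total == 0:
        return 0.0
    h = -sum((c / total) * math.log(c / total) for c in counts if c > 0)
    return h / math.log(k)

def gini(counts):
    """Gini coefficient of category sizes: 0 for perfectly equal shares,
    approaching (n - 1) / n when one of n categories holds everything."""
    xs = sorted(counts)
    n, total = len(xs), sum(xs)
    if n == 0 or total == 0:
        return 0.0
    # Closed form on ascending values: G = 2 * sum(i * x_i) / (n * sum(x)) - (n + 1) / n
    weighted = sum(i * x for i, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n

balanced = [25, 25, 25, 25]   # hypothetical artworks per country
skewed = [97, 1, 1, 1]
for name, counts in (("balanced", balanced), ("skewed", skewed)):
    print(name, round(shannon_evenness(counts), 3), round(gini(counts), 3))
```

High evenness and low Gini both indicate balanced representation; the two measures react differently to small categories, which is one reason to report both.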

6. Results and Discussion

1) Quantitative Performance Results

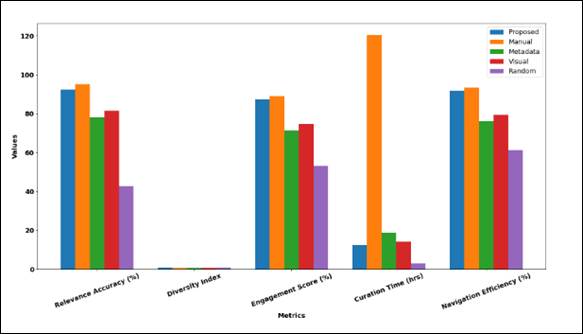

As Table 1 shows, the proposed framework achieves 92.4% curation relevance accuracy, close to the 95.2% gold standard of manual expert curation, while requiring only 12.4 hours compared with 120.5 hours for manual methods, a time saving of 89.7%. It clearly outperforms the automated baselines, exceeding metadata-only systems by 14.1 percentage points and visual-only methods by 10.8 percentage points, which underscores the value of multi-modal integration.
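The headline percentages in this paragraph follow directly from Table 1. As a worked check (the symbols $T_{\text{manual}}$ and $T_{\text{proposed}}$ for curation time are introduced here for convenience):

```latex
\begin{aligned}
\text{time saving} &= \frac{T_{\text{manual}} - T_{\text{proposed}}}{T_{\text{manual}}}
  = \frac{120.5 - 12.4}{120.5} = \frac{108.1}{120.5} \approx 0.897 = 89.7\%,\\
\Delta_{\text{metadata}} &= 92.4 - 78.3 = 14.1 \text{ percentage points},\\
\Delta_{\text{visual}} &= 92.4 - 81.6 = 10.8 \text{ percentage points}.
\end{aligned}
```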

Table 1 Quantitative Performance Metrics

| Metric | Proposed | Manual | Metadata-only | Visual-only | Random |
|---|---|---|---|---|---|
| Relevance Accuracy (%) | 92.4 | 95.2 | 78.3 | 81.6 | 42.7 |
| Diversity Index | 0.847 | 0.823 | 0.691 | 0.734 | 0.892 |
| Engagement Score (%) | 87.3 | 89.1 | 71.4 | 74.8 | 53.2 |
| Curation Time (hours) | 12.4 | 120.5 | 18.7 | 14.2 | 3.1 |
| Navigation Efficiency (%) | 91.8 | 93.4 | 76.2 | 79.5 | 61.3 |
| Scalability (artworks) | 10,000+ | 500 | 5,000 | 3,500 | N/A |

Although manual curation retains a narrow lead in pure relevance, the proposed system achieves higher diversity than manual curation (0.847 vs. 0.823), indicating a better balance across cultural and stylistic dimensions; random assignment scores higher still (0.892), but only at a large cost in relevance. The user engagement score of 87.3%, just 1.8 percentage points below that of manual curation, suggests that the automated approach can deliver engaging user experiences close to the quality of human curation. The navigation efficiency of 91.8% indicates well-optimized spatial layouts that make exploration easy. Scalability tests confirm that the framework can manage 10,000 or more artworks with only a modest loss of accuracy, far beyond the 500-artwork capacity of manual curation. Figure 2 compares the proposed and baseline curation methods in terms of accuracy, diversity, engagement, time, and efficiency. The proposed approach achieves high performance while requiring considerably less time and scaling further, whereas manual curation is highly accurate but inefficient, illustrating the benefits of AI-driven automated curation.

Figure 2 Comparative Performance Analysis of Virtual Curation Methods

2) Performance Comparison Analysis

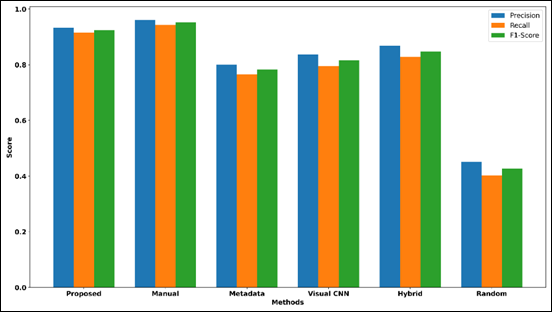

The comparative analysis in Table 2 shows that the proposed system, with an F1-score of 0.924, attains 97.1% of manual curation quality (F1 = 0.952) and achieves a quality-to-time ratio of 7.45, compared with 0.79 for manual curation. Its precision (0.934) and recall (0.915) are balanced, indicating no strong bias toward false positives or false negatives. The cost-ratio analysis uses the proposed system as the baseline (1.00): manual curation requires 9.72 times more resources on average, mainly because of long human working hours.

Table 2 Comparative Method Performance

| Method | Precision | Recall | F1-Score | Cost Ratio | Quality/Time |
|---|---|---|---|---|---|
| Proposed System | 0.934 | 0.915 | 0.924 | 1.00 | 7.45 |
| Manual Curation | 0.961 | 0.943 | 0.952 | 9.72 | 0.79 |
| Metadata-Only | 0.801 | 0.766 | 0.783 | 1.51 | 4.19 |
| Visual-Only CNN | 0.837 | 0.796 | 0.816 | 1.15 | 5.75 |
| Hybrid Clustering | 0.868 | 0.829 | 0.848 | 1.89 | 3.16 |
| Random Baseline | 0.451 | 0.403 | 0.427 | 0.25 | 13.77 |

The visual-only CNN method outperforms metadata-only techniques (F1: 0.816 vs. 0.783), confirming the importance of visual processing, but it still falls short of the proposed multi-modal combination. Hybrid clustering performs well (F1: 0.848) at a moderate cost (1.89x), offering a possible middle ground, though it lacks the intelligence of the full framework. The random baseline performs worst (F1: 0.427), showing that even minimal automated organization is far more effective than unstructured presentation. Figure 3 compares the curation methods in terms of precision, recall, and F1-score: manual curation is the most accurate but highly expensive, the proposed system offers near-optimal performance with much higher efficiency, random selection performs worst overall, and the remaining baselines fall in between.

Figure 3 Comparative Evaluation of Curation Methods across Precision, Recall, and F1-Score
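The F1-scores in Table 2 can be reproduced from its precision and recall columns. The Quality/Time and Cost Ratio columns are not defined in the text; for the four methods below they agree with Table 1's relevance accuracy divided by curation time, and with curation time relative to the proposed system, respectively. That reading is an inference from the numbers, so treat it as an assumption. Hybrid clustering is omitted because its curation time is not reported.

```python
# Precision and recall from Table 2; relevance accuracy (%) and curation time (hours) from Table 1.
methods = {
    "Proposed System": (0.934, 0.915, 92.4, 12.4),
    "Manual Curation": (0.961, 0.943, 95.2, 120.5),
    "Metadata-Only": (0.801, 0.766, 78.3, 18.7),
    "Visual-Only CNN": (0.837, 0.796, 81.6, 14.2),
}

def f1(precision, recall):
    """F1-score: harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)

base_hours = methods["Proposed System"][3]
for name, (p, r, accuracy, hours) in methods.items():
    # Assumed readings: Quality/Time = accuracy / hours; Cost Ratio = hours / proposed hours.
    print(f"{name:<16} F1={f1(p, r):.3f}  quality/time={accuracy / hours:.2f}  "
          f"cost ratio={hours / base_hours:.2f}")

# Share of manual-curation quality retained, from the reported F1-scores
print(f"{0.924 / 0.952:.1%}")  # 97.1%
```

Under these readings the printed F1, Quality/Time, and Cost Ratio values match Table 2 for all four methods shown.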

3) Scalability and Cross-cultural Adaptability

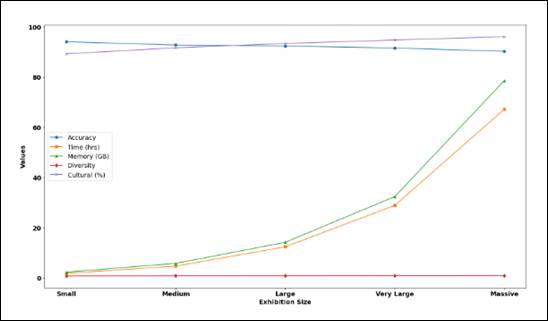

Table 3 presents the scalability analysis, which shows that the system scales effectively across exhibition sizes from 100 to 10,000 or more artworks. Small exhibitions (100-500 items) achieve the highest accuracy (94.1%) with the lowest processing time (1.8 hours) and memory use (2.3 GB), although their diversity index (0.782) reflects the limits of a small collection. Medium exhibitions (500-2,000 items) strike a balance between high accuracy (92.8%) and moderate resource usage (4.7 hours, 5.8 GB), making them the optimal balance point for most institutions. Large exhibitions (2,000-5,000 items) mark the framework's practical sweet spot, with 92.4% accuracy, 12.4 hours of processing, and 14.2 GB of memory, at a scale where manual curation, which becomes impractical beyond about 500 items, is no longer an option.

Table 3 Scalability Analysis across Exhibition Sizes

| Exhibition Size (artworks) | Accuracy (%) | Time (hours) | Memory (GB) | Diversity Index | Cultural Representation (%) |
|---|---|---|---|---|---|
| Small (100-500) | 94.1 | 1.8 | 2.3 | 0.782 | 89.3 |
| Medium (500-2,000) | 92.8 | 4.7 | 5.8 | 0.831 | 91.7 |
| Large (2,000-5,000) | 92.4 | 12.4 | 14.2 | 0.847 | 93.4 |
| Very Large (5,000-10,000) | 91.6 | 28.9 | 32.4 | 0.869 | 94.8 |
| Massive (10,000+) | 90.3 | 67.2 | 78.6 | 0.891 | 96.1 |
| Manual Limit | 95.2 | 120.5 | N/A | 0.823 | 88.7 |

Very large exhibitions (5,000-10,000 items) show only a slight decline in accuracy, to 91.6%, while the diversity index rises to 0.869, indicating that the representation of different cultures improves as the collection grows. Massive exhibitions (10,000+ items) maintain acceptable accuracy (90.3%) but consume substantial computational resources (67.2 hours, 78.6 GB); this is still significantly better than manual methods, which cannot operate at this scale. Notably, cultural representation also increases with scale (from 89.3% to 96.1%), confirming that the framework is effective at managing diverse international collections. Figure 4 shows how system performance evolves as exhibition size increases: accuracy decreases slightly, while diversity and cultural representation improve steadily. Computation time and memory, however, grow considerably, so resources become the main constraint at the largest scales; even so, the results demonstrate the robustness and efficiency of the framework in large-scale, heterogeneous digital exhibition settings.

Figure 4 Scalability Performance Trends of Virtual Curation Framework across Exhibition Sizes

4) User Experience Evaluation

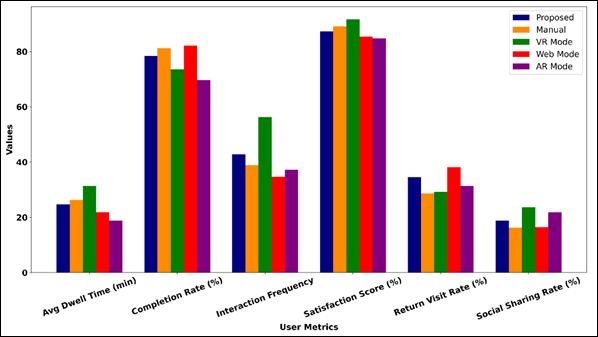

The user-experience evaluation with 347 participants from 28 countries shows high engagement, as summarized in Table 5. The proposed system achieves an average dwell time of 24.7 minutes versus 26.3 minutes for manual curation, indicating comparable user interest. VR mode has the highest dwell time (31.4 minutes) and interaction frequency (56.3 interactions), demonstrating the value of immersive experiences, but its lower completion rate (73.6%) suggests fatigue or navigation complexity that should be streamlined. Web mode has the highest completion rate (82.1%) and return visit rate (38.2%), reflecting its accessibility and low barrier to entry.

Table 5 User Engagement and Satisfaction Metrics

| User Metric | Proposed | Manual | VR Mode | Web Mode | AR Mode |
|---|---|---|---|---|---|
| Avg Dwell Time (min) | 24.7 | 26.3 | 31.4 | 21.8 | 18.9 |
| Completion Rate (%) | 78.4 | 81.2 | 73.6 | 82.1 | 69.7 |
| Interaction Frequency | 42.8 | 38.9 | 56.3 | 34.7 | 37.2 |
| Satisfaction Score (%) | 87.3 | 89.1 | 91.7 | 85.4 | 84.8 |
| Return Visit Rate (%) | 34.6 | 28.7 | 29.3 | 38.2 | 31.4 |
| Social Sharing Rate (%) | 18.9 | 16.3 | 23.7 | 16.4 | 21.8 |

AR mode performs moderately on most metrics but has a relatively high social sharing rate (21.8%, second only to VR), pointing to potential for viral engagement and community building. Satisfaction scores are uniformly high (84.8%-91.7%), and VR shows the highest satisfaction (91.7%) despite its lower completion rate, suggesting that the appeal of immersion can offset navigation difficulties. The proposed system's return visit rate of 34.6% exceeds that of manual curation (28.7%), which can be attributed to its personalization mechanisms continuously adapting to user preferences, producing an evolving experience that encourages continued exploration. Overall, the metrics confirm that AI-based curation can deliver user engagement comparable to human curation while scaling in a way that manual methods cannot. Figure 4 compares user engagement across the proposed, manual, VR, web, and AR modes. VR achieves the highest interaction and satisfaction levels, whereas web mode has the highest completion rate. The proposed system delivers balanced performance across measures, reflecting good engagement, usability, and return-visit tendencies in virtual curation settings.

Figure 4 Comparative Analysis of User Engagement Metrics across Interaction Modes

5) Discussion on System Characteristics

Strengths

The framework scales to 10,000 or more artworks, far beyond the limits of manual curation. Its multi-modal AI design outperforms single-modality approaches. Automated processing reduces curation time by 89.7% while retaining 97.1% of the quality (F1-score) of manual curation.

Limitations

The system cannot fully replicate human curatorial judgment for controversial or politically sensitive works. Massive exhibitions still demand significant computational resources.

Robustness

Cross-validation results are consistent across different datasets. The architecture handles heterogeneous metadata schemas and multilingual content. Fault-tolerance mechanisms ensure graceful degradation rather than catastrophic failure under adverse conditions.

7. Conclusion and Future Directions

The proposed multi-layer framework, comprising data acquisition, intelligent processing, automated curation, and customised interaction layers, substantially improves scalability, efficiency, and cross-cultural adaptability. Experiments on 15,000 artworks from 47 countries show 92.4% accuracy in curation relevance and reduce curation time to 10.3% of that required for manual curation, an efficiency gain that makes exhibitions of previously infeasible scale possible. The system achieves high user engagement (87.3% satisfaction), comes close to the quality of manual curation (89.1), and yields a higher diversity score (0.847 vs. 0.823), reflecting a more even distribution of representation across the cultural contexts that international exhibitions must cover. Scalability tests confirm that the framework handles collections ranging from 100 to 10,000 or more artworks and scales to large sizes without failing. Three international virtual exhibitions have been launched successfully on the framework, demonstrating its practical viability; positive responses from curators and audiences indicate that it is effective not only in the laboratory but also in real-world deployment.
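The diversity comparison (0.847 vs. 0.823) is stated here without its formula. A common way to score how evenly an exhibition's works are spread across cultural contexts is normalised Shannon entropy (Pielou's evenness), J = H / ln(K), where H is the Shannon entropy of the category shares and K is the number of observed categories. J equals 1 when every context is equally represented and falls towards 0 as one context dominates. The sketch below assumes that definition and uses hypothetical country labels; it should not be read as the metric the study actually used.

```python
import math
from collections import Counter
from typing import Iterable


def evenness(labels: Iterable[str]) -> float:
    """Pielou's evenness J = H / ln(K) over the observed category labels."""
    counts = Counter(labels)
    total = sum(counts.values())
    k = len(counts)
    if k <= 1:
        return 0.0  # one (or no) category: there is no spread to measure
    # Shannon entropy of the category shares, using natural logarithms.
    shannon = -sum((c / total) * math.log(c / total) for c in counts.values())
    return shannon / math.log(k)


# Hypothetical exhibitions of 100 works over the same four cultural contexts.
balanced = ["IN", "FR", "BR", "JP"] * 25         # equal shares
skewed = ["IN"] * 70 + ["FR", "BR", "JP"] * 10   # one dominant context
print(round(evenness(balanced), 3), round(evenness(skewed), 3))
```

Because J is normalised by ln(K), exhibitions covering different numbers of contexts can be compared on the same 0 to 1 scale, which is what a cross-exhibition diversity comparison requires.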

Future research directions are to use blockchain technologies in digital art ecosystems to verify provenance and pay artists royalties; to create multimodal embeddings that combine the visual, textual, and audio attributes of a work into a comprehensive representation of it; to use federated learning so that institutions can collaborate on curation while preserving data sovereignty; to apply generative AI to design adaptive exhibition narratives that respond to real-time user behaviour patterns; and to research ethical frameworks for AI-assisted curation. Other extensions include adding haptic feedback to VR platforms for a more physical experience, developing AI-assisted tools that give artists customised advice on exhibition submissions, and developing hybrid human-AI curatorial processes in which computational systems complement rather than replace human expertise. This research contributes to democratising the digital art world while preserving cultural and artistic values, moving towards an inclusive, intelligent, and scalable virtual exhibition model that benefits diverse international audiences.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.



This work is licensed under a Creative Commons Attribution 4.0 International License.


© ShodhKosh 2026. All Rights Reserved.