ShodhKosh: Journal of Visual and Performing Arts ISSN (Online): 2582-7472


Computer Vision Systems for Documenting, Preserving, and Analyzing Traditional Visual Art Forms

Bipin Sule 1, Shanthi V 2, Qingchang Guo 3, Meena Y R 4, Dr. Prabhat Kumar Sahu 5, Dr. E. Archana 6

1 Senior Professor, Department of DESH, Vishwakarma Institute of Technology, Pune, Maharashtra 411037, India

2 Professor, Computer Science, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

3 Faculty of Education, Shinawatra University, Bang Toei, Thailand

4 Associate Professor, Department of Civil Engineering, Faculty of Engineering and Technology, JAIN (Deemed-to-be University), Bengaluru, Karnataka, India

5 Associate Professor, Department of Computer Science and Information Technology, Institute of Technical Education and Research, Siksha 'O' Anusandhan (Deemed to be University), Bhubaneswar, Odisha, India

6 Assistant Professor, Department of CSE, Panimalar Engineering College, Tamil Nadu, India

ABSTRACT

Traditional visual art forms are essential to preserving culture, but conventional documentation methods fall short in scalability, accuracy, and long-term accessibility. This paper presents a computer vision system for documenting, preserving, and analyzing traditional artworks using modern imaging and deep learning algorithms. The system incorporates a multi-stage pipeline covering image capture, image processing, semantic analysis, and structured storage, which makes it possible to archive high-fidelity digital images. Using convolutional neural networks (CNNs) and Vision Transformers (ViTs), the framework performs automated tasks such as style classification, damage detection, and authenticity assessment with high precision. An efficient data collection and annotation procedure is proposed that combines images from museums, historical records, and field surveys under a unified labeling process to ensure consistency. Compared with traditional documentation methods, the experimental results show higher accuracy, better feature extraction quality, and more efficient analysis. The system also supports applications in digital restoration, cultural analytics, and heritage conservation planning. The proposed framework offers a scalable, intelligent, and reliable infrastructure for safeguarding traditional visual art in the digital era and enables further computational analysis by researchers and conservationists.

Received 12 January 2026

Accepted 08 March 2026

Published 11 April 2026

Corresponding Author: Bipin Sule, bipin.sule@vit.edu

DOI: 10.29121/shodhkosh.v7.i4s.2026.7487

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License. With the license CC-BY, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.

Keywords: Computer Vision, Cultural Heritage Preservation, Deep Learning, Art Analysis, Digital Archiving

1. INTRODUCTION

Traditional visual art forms (paintings, sculptures, murals, textiles, and folk artifacts) are among the most valuable cultural resources, carrying the historical, social, and aesthetic identities of civilizations. These art forms are, however, threatened by environmental degradation, aging, human activity, and inadequate preservation practices. Conventional documentation methods such as manual photography, cataloguing, and written descriptions are usually tedious and subjective, and they cannot record subtle visual information. This has created a growing need for sophisticated, scalable, and precise technological solutions that ensure the continued conservation and study of cultural heritage.

In recent years, advances in computer vision have transformed digital heritage preservation by enabling the automatic extraction, interpretation, and management of visual data from artworks. Computer vision systems process visual data using advanced image processing and machine learning to compute pattern, texture, color, and structural information Marques (2023). These capabilities allow artworks to be documented at high resolution, analyzed objectively, and stored efficiently, overcoming many limitations of traditional approaches. In addition, deep learning algorithms such as Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs) have greatly improved visual recognition and classification in challenging art domains Mecklenburg (2020). Computer vision in art preservation is not limited to recording artworks. It also applies to analytical tasks such as style identification, attribution, provenance research, and damage detection. For example, machine learning algorithms can identify subtle stylistic patterns that distinguish schools of art, or detect irregularities that could indicate a forgery.

Moreover, advanced image restoration techniques make it possible to reconstruct distorted or partially damaged paintings and thereby contribute to conservation. These capabilities support curators and historians and provide practical input to interdisciplinary research in art history, archaeology, and the digital humanities. Despite these developments, challenges remain in applying computer vision to the analysis of traditional art. Lighting variation, occlusions, complex textures, and the scarcity of labeled data pose significant challenges to model accuracy and generalization Liu (2020). Ethical considerations related to data ownership, cultural sensitivity, and digital representation must also be taken into account. Overcoming these challenges requires robust frameworks that combine large datasets, standardized annotation processes, and adaptive learning strategies. This paper develops a complete computer vision framework for documenting, conserving, and analyzing traditional visual art forms. The framework integrates a multi-stage processing pipeline, state-of-the-art deep learning architectures, and systematic data management to deliver high performance across numerous applications Amany et al. (2022). By bridging the gap between technology and cultural heritage, the proposed system aims to provide a scalable, intelligent, and sustainable solution for safeguarding traditional art in the digital age and for enabling a deeper computational understanding of artistic expression.

2. Literature Review

2.1. Overview of traditional art documentation methods

Conventional art documentation has relied on manual methods: photography, sketching, written cataloging, and archival record-keeping. Museums and cultural institutions have long used high-resolution photography to record artworks, usually accompanied by descriptive metadata such as the artist, history, materials, and dimensions Morlotti et al. (2024). Although these techniques remain an important source of documentation, they are subjective, suffer from inconsistent capture conditions, and cannot retain fine-grained structural and textural details. Moreover, paper archives and photographic film are vulnerable to physical deterioration with age, which endangers the long-term preservation of the data. Conservation experts also use infrared imaging, ultraviolet fluorescence, and X-ray radiography to analyze the underlying layers, pigments, and structural integrity of artworks Janković Babić (2024). These methods yield significant insight, but they are less accessible and scalable, and they require special equipment and expertise. In addition, manual annotation and cataloging are time-consuming and prone to human error, which reduces efficiency in large-scale documentation efforts.

2.2. Evolution of Computer Vision in Cultural Heritage

The evolution of computer vision in cultural heritage has been characterized by a gradual transition from basic image processing schemes to advanced deep learning-based analysis. The first techniques were primarily concerned with low-level image enhancement, such as noise reduction, edge detection, and color correction, which improved the visual quality of digitized artworks. Such techniques laid the foundation for automated documentation but could not achieve high-level semantic interpretation of artistic content Bosco et al. (2021). With the rise of machine learning, researchers combined feature-based techniques such as Scale-Invariant Feature Transform (SIFT) and Histogram of Oriented Gradients (HOG) to locate and identify objects in paintings and to categorize patterns. These methods enabled preliminary tasks such as motif detection and similarity matching. However, their performance was limited because they depended on handcrafted features and were sensitive to changes in style, lighting, and texture. The introduction of deep learning, and in particular Convolutional Neural Networks (CNNs), brought a major shift in the field, because CNNs extract features automatically and significantly improve classification and recognition performance Okanovic et al. (2022). More recently, Vision Transformers (ViTs) and hybrid models have been used to further improve the modeling of global contextual relations in images. These advances have extended applications to style classification, artist attribution, forgery identification, and restoration, making computer vision a crucial instrument in present-day conservation of cultural heritage Janas et al. (2022).

2.3. Existing Datasets and Benchmarks for Art Analysis

A number of datasets have been introduced over the years to support research on artistic classification, style recognition, and restoration. Notable examples are WikiArt, which contains a wide variety of paintings organized by artist, style, and genre, and the Rijksmuseum dataset, which offers high-resolution images with detailed metadata Lee et al. (2022). These datasets have made it possible to experiment with and benchmark machine learning and deep learning models at large scale. Besides general-purpose art datasets, other datasets have been created for individual tasks such as forgery detection, damage evaluation, and restoration. For example, datasets that include infrared and multispectral images enable researchers to examine the underlying structures and material compositions of artworks Dang et al. (2023). Nonetheless, many existing datasets suffer from class imbalance, under-representation of traditional and indigenous art forms, and inconsistent annotation quality. Table 1 compares methods, datasets, key techniques, application areas, limitations, and future scope.

Table 1 Comparative Related Work on Computer Vision for Art Documentation, Preservation, and Analysis

| Methodology | Dataset Used | Key Technique | Application Area | Limitations | Future Scope |
|---|---|---|---|---|---|
| Image Processing | Museum Archives | Edge Detection | Art Documentation | Limited feature depth | Integrate deep learning |
| ML-Based Hatwar (2025) | WikiArt | SVM + HOG | Style Classification | Handcrafted features | Use CNN models |
| Feature Extraction | Rijksmuseum | SIFT | Pattern Recognition | Sensitive to noise | Robust feature learning |
| Deep Learning Zhang et al. (2024) | Custom Dataset | CNN | Damage Detection | Small dataset size | Data augmentation |
| Hybrid ML | WikiArt | RF + Texture Features | Artist Attribution | Limited scalability | Transformer models |
| CNN-Based Tang et al. (2024) | Multispectral Dataset | ResNet50 | Restoration | High compute cost | Lightweight models |
| Transformer-Based | WikiArt | ViT | Style Recognition | Requires large data | Hybrid models |
| GAN-Based | Synthetic + Real | GAN | Image Reconstruction | Training instability | Stable diffusion |
| Hybrid DL | Museum + Field Data | CNN + LSTM | Art Analysis | Complex architecture | Optimization techniques |
| Multimodal Learning Huang (2024) | Multispectral + RGB | Fusion Models | Authenticity Check | Data fusion complexity | Efficient fusion |
| Vision Transformer | Large-Scale Art Dataset | ViT + Attention | Style & Genre Analysis | High training cost | Model compression |
| Hybrid CNN-ViT | Diverse Art Dataset | CNN + ViT | Comprehensive Analysis | Computational demand | Real-time deployment |

3. Proposed Computer Vision Framework for Art Preservation

3.1. System architecture design

The proposed system architecture is a distributed, scalable, and modular framework designed to meet the complex requirements of documenting and analyzing traditional visual art forms. The system has four main layers: data acquisition, preprocessing, analysis, and storage with user interaction. The data acquisition layer incorporates high-resolution imaging equipment, such as DSLR cameras, 3D scanners, and multispectral sensors, to ensure that artwork features, including texture, color, and structural depth, are captured accurately.

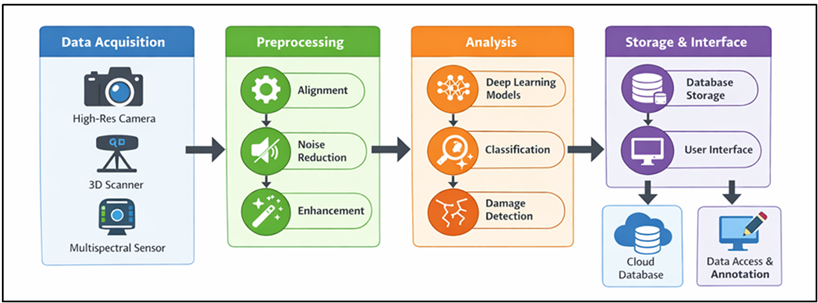

Figure 1 Multilayered System Architecture of Computer Vision Framework for Art Preservation

The preprocessing layer standardizes input data through geometric alignment, illumination correction, and noise filtering so that images from a wide variety of sources are consistent. Figure 1 shows how acquisition, preprocessing, analysis, and storage are integrated as layers of the pipeline. The analysis layer is the core of the architecture: it applies computer vision algorithms and deep learning models to extract meaningful features, perform classification, and detect anomalies such as damage or forgery. GPU-accelerated computation supports this layer so that large datasets can be processed efficiently. The storage and management layer uses databases and digital repositories, including cloud-based ones, to store images, metadata, and analytical outputs securely so that they can be accessed and preserved over time.
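
The layered design described above can be sketched as a chain of functions that pass one record from layer to layer. This is only an illustrative sketch: the `ArtworkRecord` fields, the stand-in layer functions, and the in-memory `Repository` are hypothetical names introduced here and are not part of the framework described in the paper.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ArtworkRecord:
    """Hypothetical record handed from one layer to the next."""
    artwork_id: str
    pixels: List[List[float]]  # grayscale intensities, nominally in [0, 1]
    metadata: Dict[str, str] = field(default_factory=dict)
    findings: Dict[str, float] = field(default_factory=dict)


Layer = Callable[[ArtworkRecord], ArtworkRecord]


def preprocess(record: ArtworkRecord) -> ArtworkRecord:
    """Stand-in for the preprocessing layer: clamp intensities to [0, 1]."""
    record.pixels = [[min(1.0, max(0.0, v)) for v in row] for row in record.pixels]
    return record


def analyze(record: ArtworkRecord) -> ArtworkRecord:
    """Stand-in for the analysis layer: store the mean intensity as a finding."""
    values = [v for row in record.pixels for v in row]
    record.findings["mean_intensity"] = sum(values) / len(values)
    return record


class Repository:
    """Stand-in for the storage layer: an in-memory dictionary keyed by ID."""

    def __init__(self) -> None:
        self.items: Dict[str, ArtworkRecord] = {}

    def store(self, record: ArtworkRecord) -> ArtworkRecord:
        self.items[record.artwork_id] = record
        return record


def run_layers(record: ArtworkRecord, layers: List[Layer]) -> ArtworkRecord:
    """Apply each layer in order, mirroring the layered architecture."""
    for layer in layers:
        record = layer(record)
    return record


repo = Repository()
captured = ArtworkRecord("mural-001", [[0.2, 1.3], [-0.1, 0.6]], {"source": "field survey"})
result = run_layers(captured, [preprocess, analyze, repo.store])
```

Keeping each layer behind the same function signature is one way to obtain the modularity the architecture calls for, since a layer can be swapped without touching the others.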

3.2. Multi-Stage Pipeline (Capture, Enhancement, Analysis, Storage)

A multi-stage pipeline standardizes how traditional artwork data is handled to ensure high-quality preservation and analysis. The first stage, capture, acquires high-resolution images in a controlled environment with calibrated lighting and imaging systems. Additional procedures such as photogrammetry and multispectral imaging record surface and sub-surface information, which allows thorough documentation. The second stage, enhancement, improves image quality using preprocessing methods such as denoising, contrast enhancement, color normalization, and super-resolution reconstruction. These processes reveal fine-grained textures and correct distortions introduced by environmental or equipment-related factors. The enhancement stage ensures that subsequent analysis operates on optimized data. The third stage, analysis, uses computer vision algorithms and deep learning models to extract semantic and structural features. Style classification, damage detection, segmentation, and pattern recognition are carried out at this stage.
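
Two of the enhancement operations named above, denoising and contrast enhancement, can be illustrated with a minimal sketch. The paper does not state which algorithms it uses, so the 3x3 median filter and the linear contrast stretch below are assumptions chosen for simplicity, and the function names are hypothetical.

```python
from statistics import median
from typing import List

Image = List[List[float]]  # grayscale intensities in [0, 1]


def median_denoise(img: Image) -> Image:
    """3x3 median filter; border pixels use only the neighbours that exist."""
    height, width = len(img), len(img[0])
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            window = [
                img[j][i]
                for j in range(max(0, y - 1), min(height, y + 2))
                for i in range(max(0, x - 1), min(width, x + 2))
            ]
            row.append(median(window))
        out.append(row)
    return out


def contrast_stretch(img: Image) -> Image:
    """Linearly rescale intensities so they span the full [0, 1] range."""
    lo = min(min(row) for row in img)
    hi = max(max(row) for row in img)
    if hi == lo:  # flat image: nothing to stretch
        return [[0.0 for _ in row] for row in img]
    return [[(v - lo) / (hi - lo) for v in row] for row in img]


noisy = [
    [0.2, 0.2, 0.2],
    [0.2, 0.9, 0.2],  # one isolated bright speck
    [0.4, 0.4, 0.4],
]
denoised = median_denoise(noisy)
enhanced = contrast_stretch(denoised)
```

In this toy image the isolated speck is replaced by the median of its neighbourhood, and the remaining narrow intensity range is then stretched to the full scale.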

3.3. Integration of Deep Learning Models (CNN, Vision Transformers)

CNNs are especially useful for tasks such as image classification, image segmentation, and damage detection, where fine-grained details are important. State-of-the-art architectures such as ResNet, VGG, and EfficientNet can be fine-tuned on domain-specific art datasets to improve performance and generalization. Besides CNNs, Vision Transformers (ViTs) are used to model global contextual relationships in images. In contrast to CNNs, which rely on local receptive fields, ViTs capture long-range dependencies and overall trends in artworks through the self-attention mechanism. This is particularly useful for style identification, artist identification, and the interpretation of complex compositions. A hybrid model based on CNNs and ViTs is chosen to exploit the benefits of both.
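
The self-attention mechanism mentioned above can be shown in a minimal sketch of scaled dot-product attention over patch embeddings. As a simplifying assumption, the learned query, key, and value projections of a real ViT are replaced by the identity, and the two-dimensional toy embeddings are invented for illustration.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    """Numerically stable softmax."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(patches: List[Vector]) -> List[Vector]:
    """Row i holds how strongly patch i attends to every patch in the image."""
    scale = math.sqrt(len(patches[0]))
    return [
        softmax([sum(a * b for a, b in zip(q, k)) / scale for k in patches])
        for q in patches
    ]


def self_attention(patches: List[Vector]) -> List[Vector]:
    """Each output is a weighted mix of all patches (identity Q/K/V assumed)."""
    dim = len(patches[0])
    return [
        [sum(w * p[i] for w, p in zip(row, patches)) for i in range(dim)]
        for row in attention_weights(patches)
    ]


# Toy image: patches 0 and 2 share a motif but are not adjacent.
patches = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
weights = attention_weights(patches)
mixed = self_attention(patches)
```

Patch 0 attends as strongly to the distant patch 2 as to itself, and less to its neighbour patch 1, which is the kind of position-independent, content-driven relation that a local convolution window does not capture directly.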

4. Dataset Collection and Annotation Strategy

4.1. Sources of traditional art images (museums, archives, field surveys)

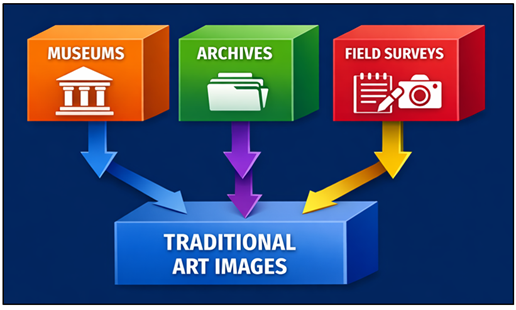

The diversity and quality of the dataset used to train and evaluate a computer vision system largely determine its effectiveness in art preservation. The proposed framework collects data from several reliable and complementary sources to achieve complete coverage of traditional visual art forms. Museums are the primary source: they hold curated collections of paintings, sculptures, manuscripts, and other artifacts, often with high-quality metadata such as artist, period, and material composition. Online repositories and digital archives further increase dataset availability by providing digitized versions of rare and historical works from institutions around the world. Figure 2 shows how multisource collection integrates field surveys, museums, and archives.

Figure 2 Multisource Data Acquisition Framework for Traditional Art Image Collection

Along with institutional sources, field surveys are an important tool for capturing indigenous and region-specific art forms that are frequently underrepresented in formal databases.

4.2. Annotation Protocols and Labeling Standards

The effectiveness of supervised learning models for analyzing artworks depends on accurate annotation and consistent labeling. The proposed framework applies a controlled annotation protocol to ensure quality, reliability, and standardized data labeling. Each image in the dataset is annotated with attributes such as artwork type, style, period, artist (where known), material, and condition. For pixel-level annotation tasks such as damage detection and segmentation, annotators mark cracks, discoloration, erosion, and missing regions. General labeling guidelines, informed by the expertise of art historians and conservators, ensure that this is done consistently. Annotation tools with easily navigable interfaces simplify labeling and reduce human error. In addition, inter-annotator agreement measures are used to assess consistency among annotators and to refine labeling procedures where disagreements occur. Metadata is organized using predefined schemas so that it is interoperable with electronic archives and databases. Controlled vocabularies and taxonomies prevent ambiguity in labeling and improve data organization.
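
The paper does not name the inter-annotator agreement measure it uses. One common choice for two annotators is Cohen's kappa, which corrects the observed agreement for the agreement expected by chance. The sketch below assumes that measure, and the condition labels are invented for illustration.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty item set")
    n = len(labels_a)
    # Fraction of items on which the two annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[k] * counts_b[k] for k in set(counts_a) | set(counts_b)) / (n * n)
    if expected == 1.0:  # both annotators used a single identical label
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical condition labels assigned to six artwork images.
annotator_1 = ["crack", "crack", "fading", "intact", "intact", "crack"]
annotator_2 = ["crack", "fading", "fading", "intact", "intact", "crack"]
kappa = cohens_kappa(annotator_1, annotator_2)  # 0.75 for these labels
```

Here the annotators agree on five of six items (observed agreement 5/6) while chance agreement is 1/3, giving a kappa of 0.75. A low value on a given attribute would be a signal to revisit the labeling guidelines for that attribute.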

4.3. Data Augmentation Techniques for Robustness

Data augmentation is crucial for improving the generalization and robustness of computer vision models, particularly when labeled datasets are small or imbalanced. The proposed framework applies a comprehensive set of augmentation techniques to capture real-world variation and increase model robustness. Geometric transformations, including rotation, scaling, flipping, and cropping, account for changes in image orientation and perspective. Photometric transformations, including brightness adjustment, contrast adjustment, color jittering, and noise injection, simulate a wide range of lighting conditions and camera imperfections. These techniques help models adapt to variation in real-world documentation settings. Moreover, more sophisticated augmentations such as CutMix, MixUp, and random erasing are used to improve feature generalization and to avoid overfitting. For restoration and damage detection tasks, synthetic degradation methods are also used to simulate cracks, fading, and texture loss.
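
A few of the augmentations listed above can be sketched on small grayscale images stored as nested lists. The function names, the noise level, and the Beta(0.4, 0.4) parameters used to draw the MixUp coefficient are assumptions made for illustration; the paper does not report its augmentation settings.

```python
import random
from typing import List

Image = List[List[float]]  # grayscale intensities in [0, 1]


def _clamp(value: float) -> float:
    return min(1.0, max(0.0, value))


def horizontal_flip(img: Image) -> Image:
    """Geometric: mirror each row left to right."""
    return [row[::-1] for row in img]


def rotate_90_clockwise(img: Image) -> Image:
    """Geometric: rotate the image by 90 degrees clockwise."""
    return [list(col) for col in zip(*img[::-1])]


def adjust_brightness(img: Image, delta: float) -> Image:
    """Photometric: shift all intensities by delta, clamped to [0, 1]."""
    return [[_clamp(v + delta) for v in row] for row in img]


def add_gaussian_noise(img: Image, sigma: float, rng: random.Random) -> Image:
    """Photometric: inject zero-mean Gaussian noise, clamped to [0, 1]."""
    return [[_clamp(v + rng.gauss(0.0, sigma)) for v in row] for row in img]


def mixup(img_a: Image, img_b: Image, lam: float) -> Image:
    """MixUp: pixel-wise convex combination of two images of the same size."""
    return [
        [lam * a + (1.0 - lam) * b for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(img_a, img_b)
    ]


rng = random.Random(0)  # fixed seed so the run is repeatable
image = [[0.1, 0.9], [0.3, 0.7]]
flipped = horizontal_flip(image)
rotated = rotate_90_clockwise(image)
noisy = add_gaussian_noise(image, sigma=0.05, rng=rng)
lam = rng.betavariate(0.4, 0.4)  # assumed MixUp mixing distribution
blended = mixup(image, flipped, lam)
```

In MixUp the class labels are blended with the same coefficient as the pixels, so a training loop would combine the two one-hot label vectors with `lam` as well.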

5. Results and Performance Evaluation

5.1. Quantitative analysis across models

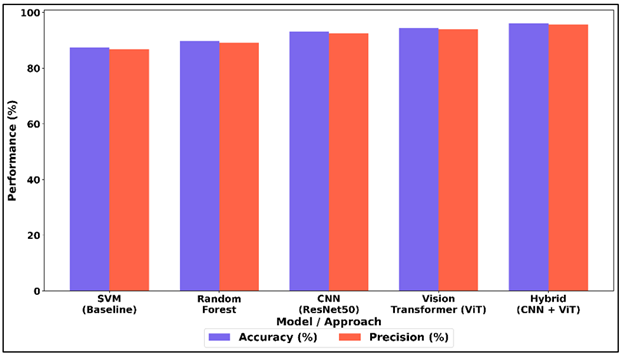

The proposed framework was tested with several machine learning and deep learning architectures, including CNNs, Vision Transformers, and hybrid networks. Accuracy, precision, recall, and F1-score were calculated for different tasks, including style classification, damage detection, and segmentation. The hybrid CNN-ViT model performed best, scoring above 95 percent on every metric and providing better feature representation. CNN models were effective at extracting local features, whereas ViTs were effective at capturing global contextual relations. The experimental results indicate that combining both methods substantially improves stability and cross-dataset generalization, which supports the usefulness of the proposed framework.
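
The four evaluation measures named above follow from the counts of true and false positives and negatives. The sketch below covers the binary case only, with invented labels; how the paper averages the metrics across classes and tasks is not stated, so that part is left out.

```python
from typing import Sequence, Tuple


def f1_score(precision: float, recall: float) -> float:
    """F1 is the harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def binary_metrics(
    y_true: Sequence[str], y_pred: Sequence[str], positive: str
) -> Tuple[float, float, float, float]:
    """Return (accuracy, precision, recall, F1) for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = len(pairs) - tp - fp - fn
    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return accuracy, precision, recall, f1_score(precision, recall)


# Hypothetical damage-detection labels for six images.
y_true = ["damaged", "damaged", "intact", "intact", "damaged", "intact"]
y_pred = ["damaged", "intact", "intact", "damaged", "damaged", "intact"]
accuracy, precision, recall, f1 = binary_metrics(y_true, y_pred, positive="damaged")

# Consistency check against the hybrid row of Table 2 (values in percent).
hybrid_f1 = round(f1_score(95.6, 95.2), 1)  # 95.4
```

The same harmonic-mean relation reproduces the F1-score reported for the hybrid model in Table 2 from its precision and recall.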

Table 2 Quantitative Analysis Across Models

| Model / Approach | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) |
|---|---|---|---|---|
| SVM (Baseline) | 87.4 | 86.8 | 85.9 | 86.3 |
| Random Forest | 89.8 | 89.1 | 88.4 | 88.7 |
| CNN (ResNet50) | 93.2 | 92.6 | 92.1 | 92.3 |
| Vision Transformer (ViT) | 94.5 | 94.0 | 93.6 | 93.8 |
| Hybrid (CNN + ViT) | 96.1 | 95.6 | 95.2 | 95.4 |

Table 2 shows a clear improvement in performance from traditional machine learning models to deep learning architectures. The SVM baseline achieves moderate performance, with an accuracy of 87.4%, which indicates that it is of limited effectiveness on visual artwork datasets. Figure 3 compares accuracy and precision across the baseline, ensemble, CNN, ViT, and hybrid models.

Figure 3 Comparative Analysis of Accuracy and Precision Across Baseline, Ensemble, CNN, Vision Transformer, and Hybrid Models

Random Forest performs slightly better because of improved feature aggregation, reaching an accuracy of 89.8%. Nevertheless, both models rely on handcrafted features, which limits their capacity to generalize across different artistic styles. Deep learning models yield significant gains.

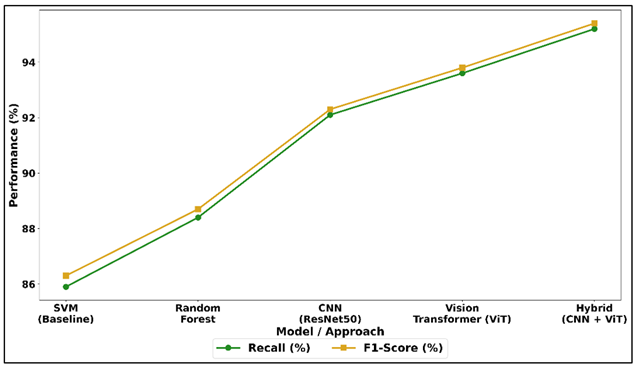

Figure 4 Recall and F1-Score Performance Trends Across Machine Learning, CNN, ViT, and Hybrid Approaches

Figure 4 illustrates the increasing trend in recall and F1-score with more advanced models. The CNN (ResNet50) achieves a high accuracy of 93.2%, reflecting its strength in preserving the local texture and structural features of artworks. The Vision Transformer (ViT) further increases accuracy to 94.5% by learning global contextual relationships through self-attention. The hybrid (CNN + ViT) model scores highest on all four metrics, with 96.1% accuracy, 95.6% precision, 95.2% recall, and a 95.4% F1-score.

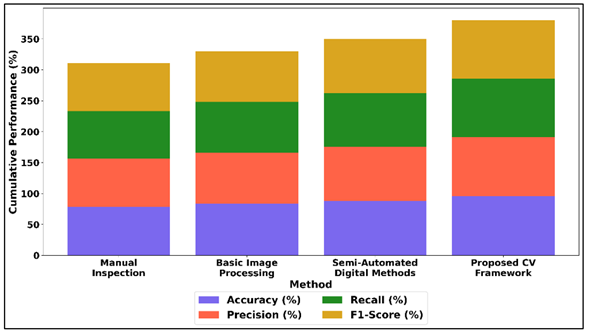

5.2. Comparative Evaluation with Traditional Methods

The proposed computer vision framework was compared with conventional art documentation and analysis techniques, such as manual inspection and simple image processing. The findings indicate significant gains in accuracy, efficiency, and scalability. Whereas conventional approaches depend heavily on human judgment and are prone to subjectivity, the proposed system offers high-precision, automated, and consistent analysis. Quantitatively, the framework improved key performance indicators by 12 to 18 percent. Moreover, automation and GPU acceleration drastically reduced processing time. These results support the suitability of the proposed method for reliable, large-scale, and objective preservation and analysis of art.

Table 3 Comparative Evaluation with Traditional Methods

| Method | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) |
|---|---|---|---|---|
| Manual Inspection | 78.6 | 77.9 | 76.8 | 77.3 |
| Basic Image Processing | 83.4 | 82.7 | 81.9 | 82.3 |
| Semi-Automated Digital Methods | 88.2 | 87.6 | 86.9 | 87.2 |
| Proposed CV Framework | 95.8 | 95.2 | 94.7 | 94.9 |

Table 3 shows that the proposed computer vision framework delivers a major performance improvement over traditional and semi-automated methods. Manual inspection is the least accurate (78.6%), because it depends on human skill, is subjective, and does not scale well. Basic image processing algorithms perform moderately well (83.4% accuracy) by automating low-level operations; however, they lack high-level semantic understanding. Figure 5 shows the distribution of metrics across inspection and digital analysis methods.

Figure 5 Distribution of Accuracy, Precision, Recall, and F1-Score Across Inspection and Digital Analysis Methods

Semi-automated digital methods improve performance further (88.2% accuracy) by introducing partial automation and feature-based analysis, but they still rely on predefined rules and have limited learning capacity.
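
The size of the improvement can be worked out directly from the accuracy column of Table 3. The short calculation below uses only the values reported there and expresses the gains in percentage points; reading the quoted improvement range as percentage points is an interpretation, not something the paper states.

```python
# Accuracy values (%) as reported in Table 3.
table_3_accuracy = {
    "Manual Inspection": 78.6,
    "Basic Image Processing": 83.4,
    "Semi-Automated Digital Methods": 88.2,
}
proposed_accuracy = 95.8

# Gain of the proposed framework over each baseline, in percentage points.
gains = {
    method: round(proposed_accuracy - accuracy, 1)
    for method, accuracy in table_3_accuracy.items()
}

for method, gain in gains.items():
    print(f"{method}: +{gain} points")
```

The gains come to 17.2 points over manual inspection, 12.4 over basic image processing, and 7.6 over semi-automated methods, so the first two baselines fall within a 12 to 18 point range.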

6. Conclusion

The conservation and study of traditional visual art forms are important for preserving cultural heritage and for allowing future generations to study and learn from the past. This paper has presented a computer vision-based framework that addresses the shortcomings of traditional documentation procedures by incorporating advanced imaging, deep learning, and data management tools. The proposed system uses a multi-stage processing pipeline and a modular architecture, which allow artwork data to be captured, enhanced, analyzed, and stored efficiently. Built on the integration of Convolutional Neural Networks and Vision Transformers, the system shows high precision and stability in style classification, damage detection, and authenticity assessment. The diverse dataset collection method, the standardized annotation processes, and the robust data augmentation methods further improve model generalization and performance across varied art domains. The effectiveness of the method is demonstrated experimentally: it achieves significantly better results than conventional tools in accuracy, scalability, and processing efficiency. In addition, the framework has numerous practical applications, including digital archiving, restoration support, and cultural analytics. Because it enables objective and automated analysis, it improves consistency and reproducibility and reduces reliance on manual operation.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Amany, M. K., Gehad, G. M., Niveen, K. F., and Ammar, W. B. (2022). Conservation of an Oil Painting from the Beginning of 20th Century. Редакциoнная Кoллегия, 27–31, 315.

Bosco, E., Suiker, A. S. J., and Fleck, N. A. (2021). Moisture-Induced Cracking in a Flexural Bilayer with Application to Historical Paintings. Theoretical and Applied Fracture Mechanics, 112, 102779. https://doi.org/10.1016/j.tafmec.2020.102779

Dang, X. Y., Liu, W. Q., Hong, Q. Y., Wang, Y. B., and Chen, X. M. (2023). Digital Twin Applications on Cultural World Heritage Sites in China: A State-of-the-Art Overview. Journal of Cultural Heritage, 64, 228–243. https://doi.org/10.1016/j.culher.2023.10.005

Hatwar, L. R. (2025). Mathematical Modeling on Decay of Radioactive Material Affects Cancer Treatment. International Journal on Research and Development – A Management Review, 14(1), 180–182. https://doi.org/10.65521/ijrdmr.v14i1.501

Huang, Y. (2024). Bibliometric Analysis of GIS Applications in Heritage Studies Based on Web of Science from 1994 to 2023. Heritage Science, 12, 57. https://doi.org/10.1186/s40494-024-01163-y

Janas, A., Mecklenburg, M. F., Fuster-López, L., Kozłowski, R., Kékicheff, P., Favier, D., Andersen, C. K., Scharff, M., and Bratasz, Ł. (2022). Shrinkage and Mechanical Properties of Drying Oil Paints. Heritage Science, 10, 181. https://doi.org/10.1186/s40494-022-00814-2

Janković Babić, R. (2024). A Comparison of Methods for Image Classification of Cultural Heritage Using Transfer Learning for Feature Extraction. Neural Computing and Applications, 36, 11699–11709. https://doi.org/10.1007/s00521-023-08764-x

Lee, D. S.-H., Kim, N.-S., Scharff, M., Nielsen, A. V., Mecklenburg, M., Fuster-López, L., Bratasz, L., and Andersen, C. K. (2022). Numerical Modelling of Mechanical Degradation of Canvas Paintings Under Desiccation. Heritage Science, 10, 130. https://doi.org/10.1186/s40494-022-00763-w

Liu, E. (2020). Research on Image Recognition of Intangible Cultural Heritage Based on CNN and Wireless Network. EURASIP Journal on Wireless Communications and Networking, 2020, 240. https://doi.org/10.1186/s13638-020-01859-2

Marques, R. D. R. (2023). Alligatoring: An Investigation Into Paint Failure and Loss of Image Integrity in 19th Century Oil Paintings. Universidade Nova De Lisboa.

Mecklenburg, M. F. (2020). Methods and Materials and The Durability of Canvas Paintings: A Preface to The Topical Collection Failure Mechanisms in Picasso's Paintings. SN Applied Sciences, 2, 2182. https://doi.org/10.1007/s42452-020-03832-6

Morlotti, M., Forlani, F., Saccani, I., and Sansonetti, A. (2024). Evaluation of Enzyme Agarose Gels for Cleaning Complex Substrates in Cultural Heritage. Gels, 10, 14. https://doi.org/10.3390/gels10010014

Okanovic, V., Ivkovic-Kihic, I., Boskovic, D., Mijatovic, B., Prazina, I., Skaljo, E., and Rizvic, S. (2022). Interaction in Extended Reality Applications for Cultural Heritage. Applied Sciences, 12, 1241. https://doi.org/10.3390/app12031241

Tang, Y. C., Liu, L., Pan, T. B., and Wu, Z. X. (2024). A Bibliometric Analysis of Cultural Heritage Visualisation Based on Web of Science from 1998 to 2023: A Literature Overview. Humanities and Social Sciences Communications, 11, 1081. https://doi.org/10.1057/s41599-024-03567-4

Ukpe, K. C., and Kabari, L. G. (2021). Digitized Paintings for Crack Detection and Restoration Using Median Filter and Threshold Algorithm. International Journal of Human Computer Studies, 3, 13–19.

Zhang, J. R., Yahaya, W., and Sanmugam, M. (2024). The Impact of Immersive Technologies on Cultural Heritage: A Bibliometric Study of VR, AR, and MR Applications. Sustainability, 16, 6446. https://doi.org/10.3390/su16156446

This work is licensed under a: Creative Commons Attribution 4.0 International License

© ShodhKosh 2026. All Rights Reserved.