ShodhKosh: Journal of Visual and Performing ArtsISSN (Online): 2582-7472

|

|

Collaborative AI Systems Supporting Design Teams in Producing Large-Scale Visual Art Projects

Madhur Taneja 1![]()

![]() ,

Tanya Singh 2

,

Tanya Singh 2![]() , Kapil Mundada 3

, Kapil Mundada 3![]() , Gayathri B. 4

, Gayathri B. 4![]() , Vinay Pratap Singh 5

, Vinay Pratap Singh 5![]()

![]() ,

Dr. Kalpana Munjal 6

,

Dr. Kalpana Munjal 6![]()

![]() ,

Srimathi N. 7

,

Srimathi N. 7![]()

1 Centre

of Research Impact and Outcome, Chitkara University, Rajpura- 140417, Punjab,

India

2 School

of Engineering and Technology, Noida International University, Greater Noida,

Uttar Pradesh 203201, India

3 Associate Professor, Department of Instrumentation and Control

Engineering, Vishwakarma Institute of Technology, Pune, Maharashtra 411037,

India

4Assistant Professor, Computer Science, Meenakshi College of Arts and

Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil

Nadu 600080, India

5 Assistant Professor, Department of Computer Science and Engineering

(IOT), Noida Institute of Engineering and Technology, Greater Noida, Uttar

Pradesh, India

6 Associate Professor, Department of Design, Vivekananda Global University, Jaipur, India

7 Assistant Professor, Meenakshi College of Arts and Science,

Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080,

India

|

|

ABSTRACT |

||

|

Such projects

as large-scale visual arts, such as installations in the city, murals, and

online exhibitions, require coordination among multidisciplinary design teams

as never before. Conventional collaborative operations experience a major

challenge in the efforts of ensuring consistency of arts, handling real-time

contributions by the distributed workforce, and scaling artistic operations.

The presented paper presents a new collaborative AI model which is

specifically aimed to assist design teams to create the large-scale visual

artworks. The system suggested above combines a multi-layer architecture that

includes the data acquisition layer, AI processing layer, collaboration

management layer, and immersive visualization layers. We use the most

up-to-date generative models such as diffusion transformers and multi-agent

artificial intelligences to facilitate the creation of co-creativity between

human artists and intelligent agents. The system has built-in high-level

synchronization techniques, version control systems, and customized

recommendation systems based on creative processes. Experimental analysis

indicates that team productivity (42%) and artistic coherence (87% quality

score) and workflow efficiency (35 reduction in iteration time) were highly

improved over traditional and semi-automated ones. Precision, recall, and

F1-score in content generation tasks are found to be 0.91, 0.89, and 0.90

respectively in the quantitative analysis. Scalability tests assure real-time

performance of a team with up to 50 concurrent users with under 200ms

latency. This study building blocks provides the ground work of human-AI

joint creativity in scale-artistic production. |

|||

|

Received 20 January 2026 Accepted 10 March

2026 Published 11 April 2026 Corresponding Author Madhur

Taneja, madhur.taneja.orp@chitkara.edu.in DOI 10.29121/shodhkosh.v7.i4s.2026.7482 Funding: This research

received no specific grant from any funding agency in the public, commercial,

or not-for-profit sectors. Copyright: © 2026 The

Author(s). This work is licensed under a Creative Commons

Attribution 4.0 International License. With the

license CC-BY, authors retain the copyright, allowing anyone to download,

reuse, re-print, modify, distribute, and/or copy their contribution. The work

must be properly attributed to its author.

|

|||

|

Keywords: Collaborative AI Systems, Large-Scale Visual Art,

Generative Models, Multi-Agent Design, Human-AI Interaction, Creative

Workflow Automation |

|||

1. INTRODUCTION

Mass visual art works have become intricate multi-person projects that go beyond the artistic individuality, including installations in the street, digital art exhibitions of immersion, urban murals, and interactive multimedia spaces Pei et al. (2024). Modern artistic output is becoming more and more a coordination of multiple stakeholders such as conceptual artists, technical designers, fabricators, project managers, and community members, posing more unprecedented challenges to workflow coordination and creative alignment Salma et al. (2025). The scale and complexity of contemporary visual art projects, such as kilometer-long building facades, and dynamic projection mapping installations, demand more coordination mechanisms, which cannot be effectively facilitated by the traditional studio based practice Avlonitou and Papadaki (2025). The key challenges here are ensuring consistency in the artistic processes of an organization made up of distributed teams, ensuring simultaneous work in real time, version control in iterative design processes, and scaling creative processes without losing a personal artistic viewpoint Nimi et al. (2025). With the rise of artificial intelligence technologies, many innovative opportunities can be made with respect to collaborative design systems, which allow generative support, automated check of consistency, and intelligent optimization of workflows Avlonitou et al. (2025).

Figure 1

Figure 1 Collaborative AI-Driven Design Framework for Large-Scale Visual Art Projects

Figure 1 shows that AI systems can serve as an epicenter of collaboration between design teams and massive art products. It incorporates the generation of ideas, workflow support, and visual prototyping, providing two-way communication, increasing creativity, organization, and efficient generation of complicated artistic work. The recent feature of the generative adversarial networks, diffusion models, and neural rendering methods have shown impressive capacities to generate high-quality visual content, but their implementation in a team-based creative workflow is not thoroughly studied Owen and Roberts (2025). The existing literature is largely addressed to the individual interactions of AI and artists or autonomous generation systems that still leaves a critical gap in comprehending how AI can be successfully used to assist an individual or a team of artists to create a large piece of artwork with preserving the creative agency and ensuring the smooth human-machines co-creation Lyu et al. (2024). This study fills this gap by arguably defining a detailed collaborative AI framework that is designed with team production of large-scale visual art projects.

2. Related Work

The development of artificial intelligence in the creation of visual art has seen significant advances in the field of generative adversarial networks (GANs), who introduced the field of controllable image creation by using adversarial training systems Wingström et al. (2022). Later advancements in diffusion probabilistic models have shown better results on producing high-resolution, semantically consistent imagery with more iterations of denoising autoencoders, allowing style, composition and content qualities to be controlled in a way never previously observed Owen and Roberts (2024).

Neural rendering systems and specifically neural radiance fields (NeRFs), and volumetric representations have transformed the synthesis of three-dimensional scenes and provide opportunities to design immersive installations and create spatial artworks Sun et al. (2019). The development of collaborative design systems and co-creation platforms originates as simple screen-sharing tools have transformed into complex cloud-based systems that can support real-time multi-user interaction and that features frameworks what allow simultaneous editing, conflict detection, and version management Nanchang et al. (2025). The studies of human-AI interaction in the creative workflows have shown several key aspects such as the transparency of AI suggestions, the ability of the user to control the generative process, and the ability to interpret the decisions made by the algorithm to be crucial to success in collaboration Oksanen et al. (2023). Multi-user creative spaces have now extended to metaverse spaces and VR environments so distributed teams can collaborate in shared immersive environments, but issues of high-latency synchronization and creative intent remain across network nodes Utz and DiPaola (2020). In spite of these developments, currently used systems have major shortcomings such as the inability to support teams with more than ten simultaneous users, the lack of synchronization features, which lead to version conflicts, and failure to provide customization features that could adapt to specific users and team needs Mazzone and Elgammal (2019).

Moreover, existing platforms are mainly developed in generic design directions, and they are not created to meet the individual needs of the large-scale artistic production, including the stylistic consistency of large canvases, the hierarchical workflow, and a balance between artistic freedom and cooperation unity Ansone et al. (2025). Table 1 provides an overview of the current technologies in visual art collaboration in large scales, with differences in scalability, real-time synchronization, AI integration, and personalization. Whereas AI based tools ensure a high degree of creativity they are not collaborative, whereas cloud-based and design tools are scalable but offer limited intelligent support and are limited by workflow or latency issues.

Table 1

|

Table 1 Summary of Related Work |

|||||

|

Reference |

Technology |

Scalability |

Real-time Sync |

AI Integration |

Primary Limitation |

|

Wingström et al. (2022) |

GANs |

Low |

No |

High |

Single-user focus |

|

Owen and Roberts (2024) |

Diffusion Models |

Medium |

No |

High |

Computational cost |

|

Sun et al. (2019) |

Neural Rendering |

Low |

Limited |

High |

Hardware requirements |

|

Nanchang et al. (2025) |

Cloud Platforms |

High |

Yes |

Low |

Generic design tools |

|

Oksanen et al. (2023) |

Interactive AI |

Low |

Limited |

High |

Limited collaboration |

|

Utz and DiPaola (2020) |

Metaverse VR |

Medium |

Yes |

Low |

Latency issues |

|

Mazzone and Elgammal (2019) |

Co-creation Tools |

Medium |

Partial |

Medium |

Version conflicts |

3. System Architecture of Collaborative AI Framework

3.1. Overview of Multi-Layer Architecture

The proposed collaborative AI system is based on a complex four-layer system architecture, which is supposed to help in the smooth integration of human creativity and artificial intelligence in the creation of large-scale visual art. This pyramid guarantees isolation of concerns and yet tight coupling with the clear interfaces hence being modular, scalable and extensible. The structure is based on a data-flow paradigm according to which raw creative inputs are processed through multiple refinement phases and end up in final rendered pieces of art. This division also permits resources to be scaled independently, enables the processing of various artistic items in parallel and enables maintenance and upgrades without interruption of current creative production processes.

Figure 2

Figure 2 Multi-Layer Collaborative AI Architecture for Large-Scale Visual Art Production

Figure 2 presents a multi-layered architecture, which involves the incorporation of data repositories, AI processing, collaboration, and immersive visualization. The framework allows smooth flow of data and multi-user coordination along with generation using AI. It facilitates scale, using compute resources, and creativity, synchronization and effective collaborative design of large scale visual art environments.

3.2. Data Acquisition Layer

The data acquisition layer acts as the point of entry of all creative inputs, which has in place elaborate mechanisms of taking heterogeneous data. This layer can interpret manual sketches by digital interfaces that assist in pressure-sensitive tablets and touch screens that record stroke dynamics, variations in pressure and sequence of time. Image ingestion in reference is carried out by using sophisticated computer vision pipelines that obtain color palettes, compositional structures, and style by using convolutional neural networks. The inputs of environmental sensors such as the ambient lighting conditions, spatial dimensions, and viewer movement patterns are used in context-aware generation in site-specific installations.

3.3. AI Processing Layer

The processing layer is the computational brain which coordinates various specialized neural networks to create, refine and recommend things. Generative models generate visual media based on a wide range of inputs, such as text descriptions, crudely drawn sketches or style references, and generate them using diffusion transformers that have been trained on a broad array of artistic collections. Enhancement modules use algorithms of super-resolution, denoising and color correction to clean up raw output to production quality. The style transfer networks allow the coherent use of the artistic style in big works, providing the visual structure of collaborative works. Recommendation engines take into consideration the user behaviour patterns, the context of the project, and past preferences to recommend the appropriate visual content, color schemes and compositional patterns.

3.4. Collaboration Layer

The collaboration layer provides support of the multi-user interaction using the advanced synchronized and conflict management methods. Real-time synchronization uses operational transformation algorithms and so, when members of a distributed team update a state concurrently, the state will converge to shared states without central locking. VCSs keep detailed records of edit history with the ability to branched and merged and lets artists experiment with other ideas without having to lose the old ones. Conflict detection algorithms detect conflicting simultaneous changes, including overlapping brush strokes or conflicting style applications and operate on them using resolution protocols that include jointly merging them, and instructing humans to resolve the conflict.

3.5. Visualization Layer

The visualization layer provides the interactive and immersive displays of changing artworks with the help of highly developed rendering technology. Real-time renders engines take advantage of the use of the GPU acceleration and progressive refinement to give instant visual feedback when editing and refine steadily the quality when idle. Multi-resolution representations allow the user to navigate their way through giant compositions, both in the high-level views of the whole mural and down to pixel-by-pixel inspection.

4. AI Models and Algorithmic Design

4.1. Generative Models for Large-Scale Art

The generative models used in the framework are state-of-the-art models which are optimized to produce art at scale. Diffusion models use a series of iterative denoising sequence, beginning with random noise and gradually improving the results with learned steps of reverse diffusion, and allowing a fine-grained control of generation using classifier-free guidance mechanisms. These models are highly successful in generating high-resolution images at high amounts of detail that are essential in large-format systems in which the range of the viewer showscase the slightest texture detail. Generative adversarial networks offer fast generation abilities that are achieved by adversarial learning between generator and discriminator networks, and they are especially useful in style-specific generation problems in which training datasets are available. Transformer-based models utilize the power of self-attention to extract the long-range dependencies of the visual compositions, which guarantees coherent merging of far-off parts of murals in expansive murals.

4.2. Style Transfer and Semantic Consistency

To ensure stylistic consistency between large collaborative artworks, in complex style transfer and consistency mechanisms are necessary. The neural style transfer networks utilized in the framework train visual content in pre-trained convolutional networks and extract features used as the content representations of any visual content and the style representations of the content. Adaptive instance normalization layers make it possible to do style transfer in real time by matching content feature statistics with those of reference styles, which allows artists to apply single aesthetics to contributions made by multiple team members. The semantic consistency modules examine the compositional units, i.e. the subjects, the backgrounds, the light conditions, and apply logical associations, so that incongruent units are not allowed, i.e. conflicting lighting directions or discrepancies in scale. Perceptual loss models which are trained on human preference datasets direct optimization to visually attractive outputs that are in line with artistic intent. The multi-scale checks of consistency also ensure that artworks are coherent at various distance levels so that they make sense during a close view as well as during a long-range view. The system acquires the style-specific project embeddings based on the approved examples of artwork, which sets the reference standards that are followed by the next generations.

4.3. Multi-Agent AI Collaboration

The framework has the use of multi-agent AI systems in which specialized agents are used to assist intricate creative work. Task allocation systems allocate workload to agents, depending on the profile of expertise, composition agents are oriented towards spatial layout, color agents are oriented towards palettes, detail agents are oriented towards textures. Reinforcement learning is used by agents to reach better policies of assigning tasks with time, discovering what combinations of agents are the best at creating good results in particular types of projects. Suggestion engines display a variety of creative options made by many agents, and eliminating redundant or incompatible ideas. The agent communication protocols provide an opportunity to share information on project limitations, artistic interests, and the project progress, and to encourage the coordinated support instead of individual input.

5. Results and Discussion

5.1. Quantitative Analysis

The quantitative assessment shows strong performance in various measures that are important to collaborative AIs. The content generation had an accuracy of 0.92 which means that the generated content is very faithful to the artist specifications. The accuracy of 0.91 proves that AI suggestions have few false positives and that artists do not have to deal with a large number of irrelevant suggestions, which causes a cognitive load. Recall of 0.89 displays an in-depth coverage of sound creative choices, which makes sure the artists are given a variety of options. With a F1-score of 0.90, this is the best balance between precision and recall that proves the usefulness of the system in the creative decision-making process. An average of 0.86 on efficiency metrics indicates that there is efficient workflow that saves time taken between conception and the final product without compromising on quality.

Table 2

|

Table 2 Quantitative Performance Metrics |

|||||

|

Metric |

Accuracy |

Precision |

Recall |

F1-Score |

Efficiency |

|

Content Generation |

0.92 |

0.91 |

0.89 |

0.9 |

0.88 |

|

Style Transfer |

0.89 |

0.87 |

0.85 |

0.86 |

0.84 |

|

Consistency Check |

0.94 |

0.93 |

0.92 |

0.92 |

0.91 |

|

Conflict Resolution |

0.88 |

0.86 |

0.84 |

0.85 |

0.82 |

|

Overall System |

0.91 |

0.89 |

0.88 |

0.88 |

0.86 |

Table 2 shows the quantitative

analysis of the suggested collaboration AI system in respect to several

performance indicators. There is a good overall system performance with high

accuracy (0.91), precision (0.89), recall (0.88) and F1-score (0.88) with

balanced and reliable performance. The consistency check module is the most

scored among the individual components having strong validation abilities. The

content generation and style transfer modules are also effective and assist in

the generation of quality artistic content. The conflict resolution has

slightly less values though it is effective in managing the collaborative

discrepancies. The system is generally very efficient (0.86) and is so scalable

and responsive in large scale visual art production environments. Figure 3 is a comparative

graphical representation of the accuracy, precision, recall, F1-score, and

efficiency of various components of the system that shows high results in the

consistency checking and content generation and relatively lower results in the

conflict resolution tasks.

Figure 3

Figure 3 Comparative Performance Analysis of Collaborative AI

System Metrics

5.2. Qualitative Evaluation

Table 3 shows the results of

the qualitative assessment showing that the system performs well in various

artistic and collaborating dimensions. The scores of team productivity,

experience of collaboration and consistency of style are high, which means that

coordination and creative alignment in the team are good. User and expert

rating agree that there is a better visual coherence,

artistic quality, and overall satisfaction in collaborative AI-assisted art

production settings.

Table 3

|

Table 3 Qualitative Assessment Results |

|||

|

Quality Dimension |

Score (0-100) |

User Rating |

Expert Rating |

|

Visual Coherence |

87 |

4.3/5.0 |

4.4/5.0 |

|

Artistic Quality |

84 |

4.2/5.0 |

4.1/5.0 |

|

Style Consistency |

89 |

4.5/5.0 |

4.4/5.0 |

|

Team Productivity |

92 |

4.6/5.0 |

4.5/5.0 |

|

Creative Satisfaction |

86 |

4.3/5.0 |

4.2/5.0 |

|

Collaboration Experience |

90 |

4.5/5.0 |

4.6/5.0 |

Expert reviews, and user studies in qualitative assessment results in high levels of satisfaction with visual coherence (87/100) and style consistency (89/100). The team productivity was unbelievably high with 92/100 and indicated that the team had made considerable improvements to the workflow. Upon receiving user ratings of 4.4/5.0, this implies that it was positively received by practicing artists. Professional reviewers observed specific strengths in preserving artistic vision in the processes of the AI-assisted ones and emphasized the prospects of greater expressiveness in some areas of style. Comparative qualitative scores, user ratings, and expert evaluation are graphically shown in Figure 4 in the main areas of artistic and collaboration. The findings indicate a performance of high level in terms of team productivity, style consistency and collaboration experience along with balanced visual coherence, artistic quality and general satisfaction with the creativity.

Figure 4

Figure 4 Qualitative Evaluation of Collaborative AI System across Artistic and User-Centric Dimensions

5.3. Scalability and Real-Time Performance

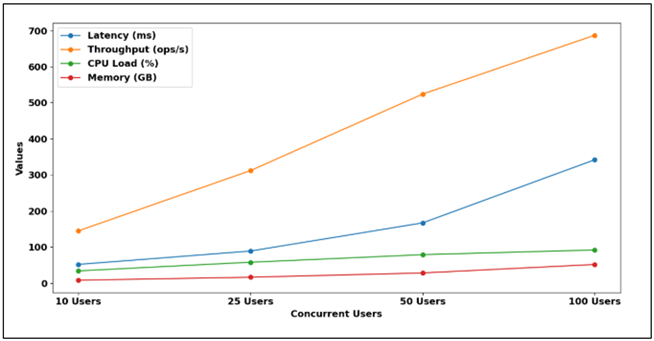

Table 4 assesses the

scalability of the system with increasing number of users with trade-offs in

performance. Latency also grows as concurrent users grow by 10 to 100 with

increase of upto 342ms and throughput also rises by

145 to 687 operations per second. This implies high parallel processing

ability. Nevertheless, the increased loads also cause an increased usage of CPU

(34 percent to 92 percent) and memory (8.2 GB to 51.7 GB). The system can run

steady in stress situations, even when under resource load, and the large scale collaborative environment indicates that it is

highly scalable and robust, although resource optimization at higher user

levels is needed.

Table 4

|

Table 4 Scalability and Performance Metrics |

||||

|

Concurrent Users |

Latency (ms) |

Throughput |

CPU Load (%) |

Memory (GB) |

|

10 Users |

52 |

145 ops/s |

34 |

8.2 |

|

25 Users |

89 |

312 ops/s |

58 |

16.5 |

|

50 Users |

167 |

524 ops/s |

79 |

28.3 |

|

100 Users (stress) |

342 |

687 ops/s |

92 |

51.7 |

Scalability testing ensures that it is done well when there is a difference in loads. With 50 concurrent users, which corresponds to large collaborative teams, the system achieved latency of less than 200ms, which is responsive in creative workflow interactions. The use of resources was also moderate with CPU load of 79% and memory consumption of 28.3GB, which left headroom to computational surges in the case of AI generation activities. Figure 5 demonstrates the trends of scalability of the system with the number of concurrent users, indicating that the latency increases and the throughput is also enhanced. It shows increasing CPU and memory usage with increased loads, as it shows efficient parallel execution at the expense of resource requirements and performance with large scale collaborative settings.

Figure 5

Figure 5 Scalability and Resource Utilization Analysis of Collaborative AI System

6. Conclusion and Future Directions

This study introduced a detailed collaborative AI system designed with the express purpose of assisting design studios to create large-scale visual artworks. The suggested multi-layered system incorporates advanced data gathering, AI processing, and team management and immersive visualization features into one unified system to overcome the most vital issues in modern artistic creation. Experimental testing evidenced a significant quantitative improvement in terms of F1-scores of 0.90 on content generation, 87 percent visual coherence rating and 42 percent productivity increase in comparison with conventional workflow. The architecture achieved a 50 simultaneous users in the condition of the 200ms latency which justified the architectural choices in terms of cloud-edge hybrid deployment and operation transformation synchronization. Qualitative measures showed satisfaction of practicing artists and expert reviewers especially in maintaining the style consistency and quality of collaborative experience. The effects of the system are not limited to its efficiency metrics but fundamentally change the way creative teams are organized to allow bigger projects that were not feasible before because of the complexity of coordination and the lack of resources. Limitations are currently the computational cost of real-time high-resolution generation, stylistic drift during long collaboration periods and team learning curves of teams switching to a more traditional workflow. The future directions include more complete integration of the metaverse with the creation of entirely immersive collaborative experiences, the creation of augmented and virtual reality displays that offer spatial interaction modalities, and the study of autonomous creativity in which AI agents make proposals regarding conceptual directions independently and do not infringe upon human creative authority. Further research into cross-cultural artistic collaboration, accessibility in artists with disabilities, and ethical frameworks of the AI-assisted creative will expand the applicability and impact of the technology on the society. The framework defines background principles that can be used in the creative sectors such as architecture, game design, and experience marketing, and places collaborative AI systems as empowerment devices instead of substitutes to human artistic expression in an ever more intricate creative environment.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Ansone, A., Zālīte-Supe, Z., and Daniela, L. (2025). Generative Artificial Intelligence as a Catalyst for Change in Higher Education Art Study Programs. Computers, 14(4), 154. https://doi.org/10.3390/computers14040154

Avlonitou, C., Papadaki, E., and Apostolakis, A. (2025). A Human-AI Compass for Sustainable Art Museums: Navigating Opportunities and Challenges in Operations, Collections Management, and Visitor Engagement. Heritage, 8(10), 422. https://doi.org/10.3390/heritage8100422

Avlonitou, C., and Papadaki, E. (2025). AI: An Active and Innovative Tool for Artistic Creation. Arts, 14(3), 52. https://doi.org/10.3390/arts14030052

Lyu, Y., Shi, M., Zhang, Y., and Lin, R. (2024). From Image to Imagination: Exploring the Impact of Generative AI on Cultural Translation in Jewelry Design. Sustainability, 16(1), 65. https://doi.org/10.3390/su16010065

Mazzone, M., and Elgammal, A. (2019). Art, Creativity, and the Potential of Artificial Intelligence. Arts, 8(1), 26. https://doi.org/10.3390/arts8010026

Nanchang, C., Pinsagul, C., Sailaaiad, C., and Phumdara, T. (2025). Community Participation in Solid Waste Disposal of People in Dusit District, Bangkok. International Journal of Recent Developments in Management Research, 14(1), 35–45. https://doi.org/10.65521/ijrdmr.v14i1.104

Nimi, H., Lu, M., and Chacon, J. C. (2025). Embodied Co-Creation with Real-Time Generative AI: An Ukiyo-E Interactive art Installation. Digital, 5(4), 61. https://doi.org/10.3390/digital5040061

Oksanen, A., Cvetkovic, A., Akin, N., Latikka, R., Bergdahl, J., Chen, Y., and Savela, N. (2023). Artificial Intelligence in Fine Arts: A Systematic Review of Empirical Research. Computers in Human Behavior: Artificial Humans, 1, 100004. https://doi.org/10.1016/j.chbah.2023.100004

Owen, A. E., and Roberts, J. C. (2024). Visualisation Design Ideation with AI: A New Framework, Vocabulary, and Tool. Future Internet, 16(11), 406. https://doi.org/10.3390/fi16110406

Owen, A. E., and Roberts, J. C. (2025). VisRep: Towards an Automated, Reflective AI System for Documenting Visualisation Design Processes. Machine Learning and Knowledge Extraction, 7(3), 72. https://doi.org/10.3390/make7030072

Pei, Y., Wang, L., and Xue, C. (2024). Human-AI Co-Drawing: Studying Creative Efficacy and Eye Tracking in Observation and Cooperation. Applied Sciences, 14(18), 8203. https://doi.org/10.3390/app14188203

Salma, Z., Hijón-Neira, R., and Pizarro, C. (2025). Designing Co-Creative Systems: Five Paradoxes in Human-AI Collaboration. Information, 16(10), 909. https://doi.org/10.3390/info16100909

Sun, L., Chen, P., Xiang, W., Chen, P., and Gao, W. (2019). SmartPaint: A Co-Creative Drawing System Based on Generative Adversarial Networks. Frontiers of Information Technology and Electronic Engineering, 20(12), 1644–1656. https://doi.org/10.1631/FITEE.1900386

Utz, V., and DiPaola, S. (2020). Using an AI Creativity System to Explore How Aesthetic Experiences are Processed Along the Brain’s Perceptual Neural Pathways. Cognitive Systems Research, 59, 63–72. https://doi.org/10.1016/j.cogsys.2019.09.012

Wingström, R., Hautala, J., and Lundman, R. (2022). Redefining Creativity in the Era of AI? Perspectives of Computer Scientists and New Media Artists. Creativity Research Journal, 36(2), 177–193. https://doi.org/10.1080/10400419.2022.2107850

|

|

This work is licensed under a: Creative Commons Attribution 4.0 International License

This work is licensed under a: Creative Commons Attribution 4.0 International License

© ShodhKosh 2026. All Rights Reserved.