ShodhKosh: Journal of Visual and Performing Arts ISSN (Online): 2582-7472

Generative Adversarial Networks as Creative Partners in Digital Painting and Illustration Workflows

Vijaya Balpande 1, Neha Rana 2, Rajesh Raikwar 3, Baoxin Le 4, Atish Baburao Mane 5, Asha Rani G 6

1 Department of Computer Science and Engineering, Priyadarshini College of Engineering, Nagpur, Maharashtra, India

2 School of Pharmacy, Noida International University, Greater Noida, Uttar Pradesh 203201, India

3 Assistant Professor, Department of Electrical Engineering, Vishwakarma Institute of Technology, Pune, Maharashtra 411037

4 Faculty of Education, Shinawatra University, Thailand

5 Department of Mechanical Engineering, Bharati Vidyapeeth's College of Engineering, Lavale, Pune, Maharashtra, India

6 Assistant Professor, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

ABSTRACT

The application of artificial intelligence to creative processes has profoundly changed modern digital art and illustration. Among AI approaches, Generative Adversarial Networks (GANs) have become a potent means of creating high-quality visual art and supporting artistic exploration. This paper explores the use of GANs as creative collaborators in the digital painting and illustration workflow. It analyzes the technical principles behind GAN architectures, their use in artistic image generation, and their role in human-AI creative processes. The most important applications of GAN systems, such as concept generation, style transfer, image-to-image translation, and automated colorization, are examined to understand how these technologies can support an artist at any phase of visual creation. Four major GAN models (DCGAN, CycleGAN, StyleGAN, and StyleGAN2) are compared to assess their effectiveness in synthesizing artistic images. The results indicate that advanced architectures outperform earlier models in image realism, structural consistency, and artistic usability. Moreover, the study shows that GAN-based tools promote quick ideation, stylistic experimentation, and design feedback without taking control of the creative process away from artists. The paper also discusses the technical framework for integrating GAN systems into digital art environments and the ethical issues surrounding authorship, originality, and dataset bias. Overall, the results indicate that GAN-based technologies redefine digital art production by facilitating interactive collaboration between human creativity and machine intelligence, creating new opportunities for innovation in computational creativity and digital illustration practice.

Received 17 January 2026

Accepted 14 March 2026

Published 11 April 2026

Corresponding Author: Vijaya Balpande, vpbalpande15@gmail.com

DOI: 10.29121/shodhkosh.v7.i4s.2026.7468

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License.

With the license CC-BY, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.

Keywords: Generative Adversarial Networks, Digital Art, Artificial Intelligence in Creativity, Digital Painting, Illustration Workflows, Human–AI Collaboration, Computational Creativity

1. INTRODUCTION

1.1. Background of Artificial Intelligence in Digital Art

The incorporation of artificial intelligence (AI) into creative activities has had a major impact on the field of digital art. Over the last 20 years, advances in machine learning, computer vision, and computational creativity have allowed artists and designers to experiment with new forms of image generation, visual experimentation, and self-expression. Digital art, previously based on graphic tablets, image-editing programs, and digital painting systems, is becoming more integrated with AI-driven technologies that help create visual content, propose stylistic options, and automate repetitive processes. Such advances have opened new avenues for artists by facilitating the exploration of intricate visual structures, generative aesthetics, and large collections of image data that were formerly hard to manipulate manually. Consequently, AI has become not only a technological instrument but also a powerful element of modern digital art, shaping how visual works are conceptualized, created, and experienced.

1.2. Emergence of Generative Adversarial Networks (GANs) in Creative Fields

Generative Adversarial Networks (GANs) are among the most powerful AI-based aids to image generation. First introduced in 2014, GANs comprise two neural networks, the generator and the discriminator, which are connected by a competitive learning process. The generator produces synthetic images, and the discriminator evaluates their authenticity by comparing them with real data. This adversarial process is repeated throughout training, so that the generator learns to produce output that is increasingly realistic and visually appealing. In recent years, GANs have been used in creative industries such as digital art, illustration, animation, and design. Artists and researchers have applied GAN-based models to style transfer, image synthesis, visual concept exploration, and automatic artwork generation. These capabilities enable the production of new visual compositions that can stimulate artistic exploration and extend traditional modes of creativity Heidrich and Schreiber (2023).

1.3. Role of AI as a Collaborative Tool in Artistic Workflows

Rather than substituting for human creativity, AI technologies are increasingly regarded as collaborators in artistic processes. In digital art, illustration, and painting, AI-driven systems can help the artist by creating initial sketches, proposing colour palettes, producing stylistic variants, or improving visual detail. This interactive process lets artists pay greater attention to the conceptual and expressive elements of their work while using computational tools to broaden ideation and experimentation. GAN-based systems in particular make rapid visual prototyping possible, producing image variations that depend on the training data and user input. The synergy between human creativity and machine intelligence results in a hybrid creative process in which the artist determines the direction of the work while AI provides computational exploration and generative power. This collaboration has contributed to novel co-creative models in which authorship is seen as the result of a reciprocal relationship between human intention and algorithmic generation.

1.4. Research Objectives and Scope of the Study

The main goal of this study is to examine Generative Adversarial Networks as creative collaborators in digital painting and illustration processes. The paper explores the role of GAN-based systems in various phases of the artistic process, such as idea generation, style creation, and visual enhancement. It also examines the technical and artistic implications of deploying GAN models in modern digital art. In addition, the study examines the advantages, drawbacks, and ethical issues surrounding AI-assisted creativity. By analyzing the interaction between artists and generative AI technology, this research aims to show how GAN-based tools can facilitate the artistic process without diminishing the importance of human creativity in digital art. The scope of the study covers an introduction to GAN architecture, the use of GANs in digital art generation, and the implications of AI-human collaboration across the creative industries as a whole Phillips et al. (2024).

2. Foundations of Generative Adversarial Networks

2.1. Concept and Architecture of GANs

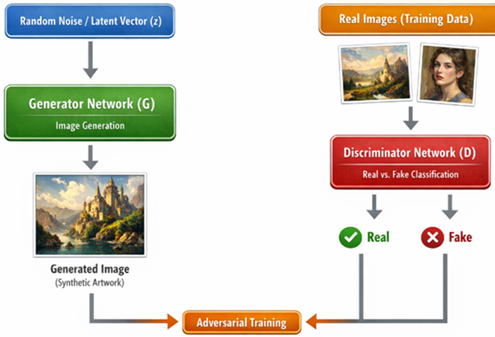

Generative Adversarial Networks (GANs) are a class of deep learning architectures that produce synthetic data resembling real-world data. The basic idea is an adversarial training procedure involving two neural networks: a generator and a discriminator. The generator creates artificial images from random noise or latent vectors, whereas the discriminator judges whether a given image is real or generated by comparing it with actual data samples. Both networks are trained simultaneously: the discriminator is trained to become more sensitive to the difference between real and generated images, and the generator is trained to produce more realistic ones. This interactive training allows GANs to produce highly realistic visual results, which makes them especially suitable for image generation and creative media.

2.2. Generator and Discriminator Interaction Mechanism

The central concept of GANs is the competition between the discriminator and the generator. During training, the generator tries to produce images that fool the discriminator, whereas the discriminator tries to classify images correctly as real or generated. This can be viewed as a minimax optimization problem, in which the generator minimizes the probability that the discriminator correctly identifies generated images and the discriminator maximizes its ability to distinguish real from synthetic images. Over repeated iterations of this adversarial training, the generator progressively approximates the actual distribution of the training data. As a result, it produces images that reproduce the visual properties of real-world data, such as textures, colors, and structural patterns. This learning mechanism makes possible the creation of the high-quality images used in digital art and illustration.
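The minimax problem described above is commonly written as the value function from the original 2014 GAN formulation. The notation here is an assumption added for illustration, since this section does not define symbols: G is the generator, D the discriminator, z a latent vector drawn from a noise distribution p_z, and p_data the distribution of real training images.

```latex
\min_{G} \max_{D} V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_{z}(z)}\left[\log\left(1 - D(G(z))\right)\right]
```

The first term rewards the discriminator for assigning high probability to real images, and the second rewards it for assigning low probability to generated ones; the generator can only influence the second term, which it tries to make small.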

2.3. Evolution of GAN Models in Image Generation

Since their introduction, GAN architectures have been developed to improve training stability, image quality, and controllability. Early GAN models commonly suffered from training instability, mode collapse, and an inability to synthesize high-resolution images. A number of improved architectures were designed to solve these problems. For example, Deep Convolutional GANs (DCGANs) introduced convolutional layers to improve the quality of image generation. Conditional GANs (cGANs) allowed the generated output to be controlled with label information, which lets artists and designers direct image creation according to specific attributes. Later models such as StyleGAN and StyleGAN2 achieved greater visual realism and allowed fine-grained manipulation of image style, texture, and structure. This development has greatly widened the scope of GANs for creative use, especially in digital art and illustration Chen et al. (2021).
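As a rough illustration of how label information can steer a conditional GAN, the sketch below builds a generator input by concatenating a latent noise vector with a one-hot label, which is one common way of conditioning. The style names, the vector size, and the function names are hypothetical and are not taken from the paper; no generator network is included.

```python
import random


def one_hot(index, num_classes):
    """Return a one-hot list with 1.0 at position `index`."""
    if not 0 <= index < num_classes:
        raise ValueError("label index out of range")
    return [1.0 if i == index else 0.0 for i in range(num_classes)]


def conditioned_latent(z, label_index, num_classes):
    """Concatenate a latent vector with a one-hot label (cGAN-style input)."""
    return list(z) + one_hot(label_index, num_classes)


# Hypothetical style labels, used only for illustration.
STYLES = ["watercolor", "ink", "oil"]

rng = random.Random(0)
z = [rng.gauss(0.0, 1.0) for _ in range(8)]  # 8-dimensional latent noise
g_input = conditioned_latent(z, STYLES.index("ink"), len(STYLES))
print(len(g_input), g_input[-3:])
```

Changing only the label while keeping the same noise vector is what would let an artist request the same underlying composition in a different style, assuming the generator was trained with such labels.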

2.4. Applications of GANs in Visual Creativity

GANs have found extensive application in numerous creative fields because they can produce diverse and visually pleasing images. In digital painting and illustration workflows, GANs have aided a number of creative processes, including aesthetic texture creation, realistic background synthesis, sketch-to-detailed-image conversion, and style transformation. They can also be employed in concept art, where artists rapidly explore visual variations before making final design decisions. In addition, GANs are increasingly incorporated into creative software, helping artists create unique visual elements and explore new aesthetics. By enabling quick visual exploration and automated image synthesis, GAN-based systems support interactive collaboration between machine intelligence and human creativity and thereby make artistic processes more productive and creative.

3. Digital Painting and Illustration Workflows

Digital painting and illustration now constitute a key practice in contemporary visual arts, design, animation, and media production. Digital technologies allow artists to use specialized software and hardware to develop sophisticated visual compositions that were formerly produced with physical materials such as canvas, paints, and brushes. Current digital painting setups let an artist recreate traditional artistic methods while offering greater flexibility, accuracy, and room for experimentation. Digital painting and illustration programs, whether desktop software or web applications, let artists work with multiple layers, brush settings, paintable textures, and color editing options. These features enable artists to build images step by step, refine visual elements, and experiment with various styles without irreversibly modifying the original composition. Consequently, digital painting combines artistic craftsmanship with computing power to support iterative creativity and visual experimentation.

The basic workflow of digital painting and illustration starts with conceptual development and ideation, when artists define the visual subject, the narrative elements, and the stylistic direction of a project. At this point artists may create conceptual sketches or thumbnails to experiment with composition, characterization, or the arrangement of the setting. These sketches form the basis of the complete work and let artists experiment with perspective, spacing, and visual narration. After the ideation phase, artists move to the sketch refinement phase, in which rough sketches are developed into more organized drawings. Digital tools enable rapid correction, resizing, and adjustment of proportion or composition, so artists can test their idea before committing to detailed rendering Qiu and Cao (2019).

Color blocking and base composition form the next step of the process, in which artists define the main palette and the lighting scheme of the painting. This stage is critical in determining the mood, atmosphere, and visual hierarchy of the composition. Digital painting software lets artists try a variety of color schemes through layers and blending modes, which can significantly affect the emotional tone of the painting. After setting the base colors, the artist moves to the rendering and detailing phase, where texture, shading, highlights, and small details are added. This phase demands careful use of digital brushes, pressure sensitivity, and blending techniques to give the image depth and realism. Despite the strengths of digital tools, artists often face a number of challenges in digital painting and illustration. One major issue is the time-consuming nature of detailed rendering, especially for high-resolution images or complicated scenes, where artists must put considerable effort into perfecting textures, lighting effects, and fine details. Another is creative block, in which artists find it difficult to develop new visual ideas or experiment with new styles. In addition, maintaining stylistic consistency across large bodies of work, whether illustrated books, animation assets, or collections of concept art, can be taxing and requires close attention to design details and overall visual coherence.

Over the past few years, artificial intelligence technologies, specifically generative models, have started to address some of these issues and to assist various steps of the digital art workflow. AI-based systems can help artists create visual references, test color palettes, and generate early versions of images that suggest new artistic directions. These tools can greatly increase the pace of ideation by providing numerous design options in a short time. In concept art, for example, AI-generated images can serve as a visual aid, allowing artists to explore several stylistic interpretations of a concept before choosing a specific direction to pursue. Moreover, AI-based tools can create textures, transfer styles, and process images automatically, reducing the amount of manual work spent on repetitive tasks. By incorporating generative technologies into digital art systems, artists can combine computational aid with their own creative decisions to create more polished and aesthetically rich work. Such integration supports a hybrid workflow in which human creativity remains the driving force while machine intelligence adds computational experimentation and efficiency. On the whole, digital painting and illustration are shifting from an entirely manual process to a human-machine creative system. Generative models such as GANs are changing the way artists conceptualize, create, and refine visual art. Instead of replacing artistic creativity, these technologies add to the creative potential of artists, enabling faster production and experimentation with visual forms that were previously difficult to realize with conventional digital tools.

4. GAN-Based Creative Assistance in Digital Art

Generative Adversarial Networks (GANs) have emerged as strong aids to creative work in digital painting and illustration. Because they can produce high-quality visual content, artists can use them to explore stylistic variations, try new ideas, and speed up different steps of the artistic process. By learning patterns from massive image datasets, GAN models can create visually coherent images that resemble particular artistic styles, textures, and compositions. Consequently, GAN systems serve as creative aids that complement human artistic capabilities rather than taking them over. These systems are increasingly built into design platforms within digital art environments to support ideation, experimentation, and visual refinement. AI-assisted concept generation is one of the most influential uses of GANs in creative processes. At the initial stage of artistic work, an artist may need to experiment with a number of visual concepts before settling on a design path. GAN-based systems can create different visual ideas based on training data or on human input in the form of drawings, words, or reference images. These outputs act as a visual stimulus, helping artists overcome creative blocks and quickly try out various design options.

Another useful application of GANs is style transfer and image enhancement. GAN models can learn the stylistic features of existing art and apply them to a new image, turning simple drawings or photos into paintings in a particular artistic style. This lets artists experiment with a range of aesthetics, including impressionist textures, comic-style shading, or painterly brush effects. GAN-based enhancement can also improve image quality by increasing resolution, sharpening details, and refining lighting. GANs also support image-to-image translation, which is especially useful for artistic iteration and experimentation. Here a visual input, such as a line drawing or a coarse sketch, is transformed into a more detailed or stylized image. This method helps an artist produce numerous versions of a design in a short time and compare alternative interpretations before making a final decision. Moreover, GAN models may be used for automatic colorization and texture generation, which substantially reduces the work involved in rendering. By analyzing existing artworks, GANs can recommend suitable color schemes, shading effects, and surface textures that make a piece look more realistic and add artistic depth. Through these capabilities, GAN-based tools can complement digital art workflows, letting artists keep control of creative decisions while using computational help for repetitive or exploratory tasks Hanafy (2023).

5. Human–AI Collaboration in Creative Practice

The introduction of artificial intelligence into the creative space has led to new ways for artists and computational systems to interact. Rather than acting as independent artists, AI tools, and Generative Adversarial Networks (GANs) in particular, act as complementary tools that help artists explore and be productive. In digital painting and illustration workflows, artists interact with AI-driven systems by supplying a sketch, reference image, or textual prompt. These inputs steer the generative process, allowing AI tools to generate visual suggestions that artists can refine, alter, or reinterpret. This exchange creates a collaboration in which human intention guides the creative process and AI contributes computational experimentation and visual variation. Human-AI collaboration tends to lead to co-creation and enhanced creativity, where creative results arise from the joint efforts of an artist and an AI system. Rather than eliminating human creativity, GAN-based models widen the range of options available to artists by producing alternative compositions, textures, and stylistic variations. Artists can analyze such outputs, choose the elements that fit their vision, and use them in their work. This iterative process invites experimentation and lets artists pursue aesthetic directions that might not have been explored by traditional means alone. In this respect, AI serves as a creative catalyst that provokes new concepts while preserving the role of the artist as the ultimate decision-maker.

GAN models are being actively introduced into workflows in professional digital studios. Artists can use GAN-based tools during initial concept development, visual prototyping, or detail refinement. Such tools can quickly create numerous design alternatives, allowing creative teams to review and develop ideas efficiently. Because repetitive and mundane activities such as creating textures, recommending colors, or producing other visual elements are automated, GAN-assisted tools let artists concentrate on conceptual and narrative development. A wide variety of experimental and professional projects demonstrate the practical use of GAN-assisted art generation. GAN models have been used to create abstract paintings, stylized portraits, and hybrid works that combine machine-generated imagery with manual digital painting methods. Such projects underscore the changing status of AI as a co-creator in artistic practice and demonstrate how computational creativity can augment human creativity in present-day digital art production Zhang et al. (2023).

6. Technical Framework for GAN-Driven Illustration Systems

Introducing Generative Adversarial Networks (GANs) into the digital illustration process requires a formal technical framework that supports image generation, interaction with the artist, and efficient system implementation. Such a framework usually combines machine learning infrastructure with user-friendly design principles, so that AI-produced results are valuable and manageable for artists. It consists of several important elements: dataset preparation, GAN architecture selection, interface development, and an operational pipeline that links the generative model to artistic procedures. Together, these elements allow GAN-powered systems to serve as efficient creative facilitators in the workspaces of digital painters and illustrators.

6.1. Dataset Preparation and Training Strategies

An important step in creating GAN-based illustration systems is data preparation, since the quality and variety of the training data largely determine the visual output of the model. Image datasets for artistic generation systems are typically curated sets of artworks, illustrations, sketches, or photos that exemplify the visual style the system is meant to learn. To provide uniform training conditions, these images have to be preprocessed through steps that include resizing, normalization, noise reduction, and data augmentation. Data augmentation techniques (rotation, scaling, color changes, etc.) are frequently applied to expand the range of images in the dataset and improve the model's capacity to produce diverse images. Another training strategy is to use style-specific datasets, which lead the GAN to acquire specific artistic properties such as brush strokes, color scheme, and composition.
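Two of the preprocessing steps named above, normalization and augmentation, can be sketched in a few lines. This is a minimal illustration under stated assumptions: images are small grayscale grids held as nested lists of 8-bit values, pixel values are scaled to [-1, 1] (a range often paired with a tanh generator output, though the paper does not specify one), and only a flip and a 90-degree rotation are shown. Resizing and noise reduction are omitted, and all function names are hypothetical.

```python
def normalize(image):
    """Map 8-bit pixel values in [0, 255] to floats in [-1.0, 1.0]."""
    return [[p / 127.5 - 1.0 for p in row] for row in image]


def horizontal_flip(image):
    """Mirror the image left to right."""
    return [list(reversed(row)) for row in image]


def rotate_90(image):
    """Rotate the image clockwise by 90 degrees."""
    return [list(row) for row in zip(*image[::-1])]


def augment(image):
    """Return the original image plus a flipped and a rotated variant."""
    return [image, horizontal_flip(image), rotate_90(image)]


# A tiny 2x2 "image" stands in for a real artwork scan.
raw = [[0, 255], [255, 0]]
training_samples = [normalize(variant) for variant in augment(raw)]
print(len(training_samples))
```

In practice an image library would handle these operations on full-color images; the point here is only that each source artwork yields several training samples with consistent value ranges.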

6.2. GAN Architectures for Artistic Image Generation

Different GAN architectures can be used to generate artistic images, depending on the required output quality and degree of control. Convolutional GANs are commonly used for textured visual generation and stylized pictures, since convolutional layers are effective at capturing spatial features in images. Conditional GANs let artists control image generation with labels, sketches, or reference inputs, giving them more creative control over the output. More sophisticated architectures support high-resolution image synthesis and style manipulation, which enables detailed digital drawings and complicated visual structures. The choice of a suitable architecture depends on the size of the dataset, the available computational resources, and the level of detail required in the artistic output.

6.3. Interface Design for Artist–AI Interaction

To succeed in artistic workflows, GAN systems need intuitive user interfaces through which artists can interact with the AI models. A good interface lets artists upload drawings, tweak settings, choose stylistic examples, and see the generated results in real time. Visual control elements such as sliders, style selectors, and brush-based input tools help artists control the generative process without requiring technical expertise in machine learning. Such interfaces aim to make AI systems accessible and responsive, so that an artist can work freely and retain creative control over the final piece of art Joshi et al. (2025).
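The paper does not say how a slider would be wired to the model. One plausible mapping, shown here purely as an assumption, is to treat the slider value as an interpolation weight between two latent vectors, so that moving the slider blends between two generated looks. The function name and the example vectors are hypothetical.

```python
def lerp_latent(z_a, z_b, t):
    """Linearly interpolate between two latent vectors.

    `t` is the slider position: 0.0 returns z_a, 1.0 returns z_b.
    Values outside [0, 1] are clamped, as a UI slider would be.
    """
    if len(z_a) != len(z_b):
        raise ValueError("latent vectors must have the same length")
    t = min(1.0, max(0.0, t))
    return [(1.0 - t) * a + t * b for a, b in zip(z_a, z_b)]


# Two hypothetical latent codes, e.g. saved from earlier generations.
z_style_a = [0.0, 1.0, -1.0]
z_style_b = [1.0, 3.0, 1.0]

midpoint = lerp_latent(z_style_a, z_style_b, 0.5)
print(midpoint)
```

Each interpolated vector would then be passed to the generator to render a preview, which keeps the control purely visual for the artist.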

6.4. Implementation Pipeline for GAN-Based Art Generation

The implementation pipeline of a GAN-based illustration system normally has multiple steps. First, the GAN is trained on specified image datasets and learns their visual patterns. After training, the model is deployed in an application environment with a graphical interface through which artists can interact with it. When the artist provides an input, such as a drawing or reference picture, the system processes it and produces visual outputs according to the learned model. These outputs can be refined further over additional iterations, in which artists adjust parameters or blend generated work with hand-drawn digital techniques.
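The inference side of this pipeline (input, generation, blending with hand-drawn work) can be sketched as follows. This is an illustrative skeleton only: `placeholder_generator` is a stand-in that simply inverts pixel values and is not a GAN, and the function names and the alpha-blending choice are assumptions rather than the paper's implementation.

```python
def placeholder_generator(sketch):
    """Stand-in for a trained GAN generator (here it only inverts pixels)."""
    return [[1.0 - p for p in row] for row in sketch]


def blend_layers(hand_drawn, generated, alpha):
    """Alpha-blend two equally sized grayscale layers.

    alpha = 0.0 keeps only the hand-drawn layer, 1.0 only the generated one.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    return [
        [(1.0 - alpha) * h + alpha * g for h, g in zip(h_row, g_row)]
        for h_row, g_row in zip(hand_drawn, generated)
    ]


def run_pipeline(sketch, generator, alpha):
    """Artist input -> model output -> blend with the original drawing."""
    generated = generator(sketch)
    return blend_layers(sketch, generated, alpha)


sketch = [[0.0, 1.0], [0.25, 0.75]]  # pixel values in [0, 1]
result = run_pipeline(sketch, placeholder_generator, alpha=0.5)
print(result)
```

Exposing `alpha` as an adjustable parameter is one simple way to let the artist decide how much of the generated layer survives into the final piece.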

Figure 1 GAN Architectures for Artistic Image Generation

Figure 1 shows the architecture and operation of a Generative Adversarial Network (GAN) used to create artistic images as part of digital painting and illustration processes. A GAN involves two major neural networks, the Generator (G) and the Discriminator (D), which are trained concurrently through an adversarial learning procedure. With this architecture, the system can produce realistic synthetic images similar to the works in the training dataset. The interaction of these two networks forms the basis of GAN-based creative systems in digital art applications. The first component is the latent input layer, denoted in the diagram by Random Noise or Latent Vector (z). This is a randomly sampled vector of numbers that serves as the starting seed of image generation. The generator network decodes the latent vector into visual content. Even though the vector is not an image, it provides the mathematical starting point from which artistic patterns, shapes, colors, and textures are modeled. The Generator Network (G) is in charge of converting the latent vector into a synthetic image. It gradually transforms the random input through deep neural network layers (typically convolutional layers in contemporary architectures) into a structured image that resembles the works of art in the training set. In the process, the network learns complex visual attributes such as composition, shading, brush strokes, and aesthetics. The output of the generator is called a generated image or synthetic work of art, which is intended to resemble real artistic images.

Meanwhile, the Discriminator Network (D) is a classifier that judges whether an image is authentic or fake. The discriminator accepts two kinds of input: real images from the training set and synthetic images created by the generator. Its task is to process visual patterns and identify whether a picture is an original image or a product of the model. The discriminator outputs a probability score indicating whether the image is real or fake. The generator and the discriminator go through a continuous adversarial training process Ramesh (2021). The generator tries to create images that can deceive the discriminator, whereas the discriminator tries to become better at detecting generated images. Through repeated rounds of this competitive learning process, the generator learns the underlying visual distribution of the data and generates increasingly realistic images. This adversarial process allows GANs to produce high-quality artistic visual content that can support digital painting, illustration, concept art generation, and other forms of creativity. This architecture allows GAN systems to act as creative assistants in digital art workflows. With such systems, artists can create concept art, test style variations, and create visual elements that serve as inspiration or as a starting point for further artistic development. The collaboration between human creativity and machine-generated imagery enriches the possibilities of digital art production and promotes new ways of visual storytelling and illustration.
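To make the alternating updates concrete, the sketch below performs one discriminator step followed by one generator step on a deliberately tiny one-dimensional model. Everything about the model is an assumption for illustration: the generator is the linear map G(z) = a·z + b, the discriminator is D(x) = sigmoid(w·x + c), gradients are written by hand, and the generator uses the widely used non-saturating loss (minimizing -log D(G(z))) rather than the literal minimax term. Real systems use deep networks and an automatic differentiation library.

```python
import math


def sigmoid(s):
    return 1.0 / (1.0 + math.exp(-s))


def adversarial_step(params, x_real, z, lr=0.1):
    """One discriminator update followed by one generator update.

    Toy 1-D model (assumed for illustration only):
      generator      G(z) = a * z + b
      discriminator  D(x) = sigmoid(w * x + c)
    """
    a, b, w, c = params["a"], params["b"], params["w"], params["c"]

    # Discriminator step: minimize -[log D(x_real) + log(1 - D(G(z)))].
    x_fake = a * z + b
    d_real = sigmoid(w * x_real + c)
    d_fake = sigmoid(w * x_fake + c)
    grad_w = -(1.0 - d_real) * x_real + d_fake * x_fake
    grad_c = -(1.0 - d_real) + d_fake
    w -= lr * grad_w
    c -= lr * grad_c

    # Generator step: minimize -log D(G(z)) against the updated discriminator.
    d_fake = sigmoid(w * x_fake + c)
    grad_x = -(1.0 - d_fake) * w  # derivative of the loss w.r.t. x_fake
    a -= lr * grad_x * z
    b -= lr * grad_x

    return {"a": a, "b": b, "w": w, "c": c}


start = {"a": 1.0, "b": 0.0, "w": 0.0, "c": 0.0}
after = adversarial_step(start, x_real=4.0, z=0.0)
print(after)
```

In this single step the discriminator weight `w` moves so that larger values look more "real", and the generator offset `b` then shifts slightly toward the real sample. Repeating such steps over many samples is the competitive loop the text describes; convergence of this toy model is not guaranteed and is not claimed here.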

7. Comparative Analysis

Table 1 Comparative Analysis Table

| GAN Model | Architecture Characteristics | Image Quality (FID ↓) | Structural Similarity (SSIM ↑) | Artist Preference (%) | Limitations |
|---|---|---|---|---|---|
| DCGAN | Convolutional GAN using deep convolutional layers for generator and discriminator | 65 | 0.62 | 52 | Lower image realism, limited control over style and composition |
| CycleGAN | Image-to-image translation architecture enabling transformation between domains | 48 | 0.71 | 64 | Requires paired domain structures, may produce artifacts in complex scenes |
| StyleGAN | Style-based generator architecture with progressive growing and style control layers | 22 | 0.84 | 81 | Higher computational requirements and longer training time |
| StyleGAN2 | Improved StyleGAN architecture with refined normalization and artifact reduction | 15 | 0.89 | 88 | Requires large datasets and high-performance GPU resources |

Table 1 presents a comparative analysis that shows a clear performance improvement across successive GAN architectures. Earlier architectures such as DCGAN provide a general-purpose approach to image generation but produce less realistic artistic output. CycleGAN is more flexible because it translates images between domains, which makes it suitable for artistic style-translation tasks. More recent designs, such as StyleGAN and StyleGAN2, score considerably higher on visual quality, structural consistency, and artist preference. StyleGAN2 has the lowest FID score (15) and the highest SSIM value (0.89), which indicates better image quality and structural fidelity. Its higher artist preference score (88%) also indicates that its outputs are more useful for creative exploration in digital painting and illustration workflows. Overall, the analysis suggests that newer GAN architectures, especially StyleGAN-based models, are better suited to AI-assisted digital art because they produce more realistic and more detailed images and better support creative processes O’Meara and Murphy (2023).
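The trend in Table 1 can be checked with a few lines of arithmetic. The sketch below only re-enters the reported values and computes the relative change from DCGAN to StyleGAN2; the dictionary layout and the helper name `relative_change` are illustrative assumptions and not part of the study.

```python
# Values as reported in Table 1: (FID, SSIM, artist preference in %).
TABLE_1 = {
    "DCGAN": (65, 0.62, 52),
    "CycleGAN": (48, 0.71, 64),
    "StyleGAN": (22, 0.84, 81),
    "StyleGAN2": (15, 0.89, 88),
}


def relative_change(old, new):
    """Percentage change from old to new."""
    return (new - old) / old * 100.0


# Rank the models on each metric (lower FID is better; higher SSIM and
# preference are better).
by_fid = sorted(TABLE_1, key=lambda m: TABLE_1[m][0])
by_ssim = sorted(TABLE_1, key=lambda m: TABLE_1[m][1], reverse=True)
by_pref = sorted(TABLE_1, key=lambda m: TABLE_1[m][2], reverse=True)

fid_change = relative_change(TABLE_1["DCGAN"][0], TABLE_1["StyleGAN2"][0])
ssim_change = relative_change(TABLE_1["DCGAN"][1], TABLE_1["StyleGAN2"][1])

print("Ranking by FID:", by_fid)
print("Rankings agree across metrics:", by_fid == by_ssim == by_pref)
print(f"FID change DCGAN -> StyleGAN2: {fid_change:.1f}%")
print(f"SSIM change DCGAN -> StyleGAN2: {ssim_change:.1f}%")
```

On the reported figures, all three metrics rank the models in the same order, FID falls by roughly 77% from DCGAN to StyleGAN2, and SSIM rises by roughly 44%.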

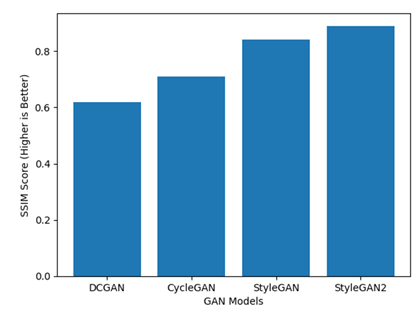

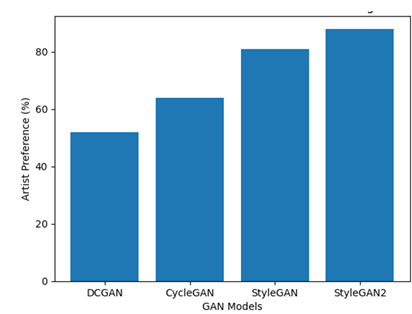

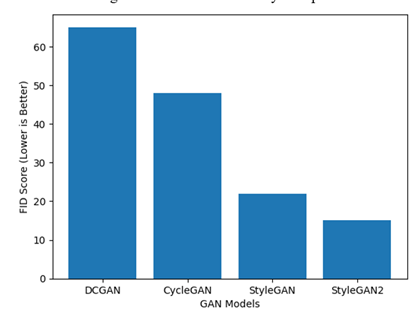

A comparative analysis of several popular GAN systems, namely DCGAN, CycleGAN, StyleGAN, and StyleGAN2, is presented in Figure 2, Figure 3, and Figure 4 to assess the performance of Generative Adversarial Networks in digital painting and illustration workflows. The analysis is based on three performance metrics commonly used in generative image research: Fréchet Inception Distance (FID), Structural Similarity Index Measure (SSIM), and an artist preference score obtained from a qualitative user study. Together, these measures quantify the realism, structural quality, and artistic acceptability of the generated images. Fréchet Inception Distance (FID) measures how closely the distribution of generated images matches that of the real images in the dataset. A lower FID value indicates greater visual realism and closer agreement with the training distribution. The results show that StyleGAN2 has the lowest FID score, which indicates the strongest ability to produce high-quality artistic images. Earlier architectures such as DCGAN have a much higher FID score, indicating lower image realism and a larger deviation from the real image distribution.
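For reference, FID is conventionally defined as the Fréchet distance between two Gaussian distributions fitted to Inception-network features of the real and the generated images. The notation below is introduced here for illustration and does not appear in the study: mu_r and Sigma_r denote the feature mean and covariance of the real images, and mu_g and Sigma_g those of the generated images.

```latex
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2
  + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g
  - 2 \left( \Sigma_r \Sigma_g \right)^{1/2} \right)
```

The distance is zero when the two feature distributions have identical means and covariances and grows as they diverge, which is consistent with the downward arrow on the FID column in Table 1.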

Figure 2 Structural Similarity Comparison

Figure 3 Comparative FID Score of GAN Models

Figure 4 Artist Preference for GAN Models

The Structural Similarity Index Measure (SSIM) assesses the structural fidelity of generated images relative to reference images. Higher SSIM values indicate that structural patterns (shapes, edges, and textures) are better preserved. StyleGAN2 leads the comparison, with StyleGAN close behind. These architectures are better at preserving the structure of generated artwork, which matters greatly in digital illustration tasks, especially those involving complex visual compositions. In addition to the quantitative assessment, a qualitative study of artist preferences was conducted to determine how artists view GAN-generated images in their creative work. Participants were shown images produced by the different GAN models and asked to rate them for aesthetic value, novelty, and usefulness for real concept development. StyleGAN2 received the highest preference rating, which implies that artists find its results more aesthetically pleasing and more useful for creative exploration. Overall, the comparative analysis shows a clear progression in GAN technology. Although earlier models such as DCGAN and CycleGAN offer useful generative functionality, more sophisticated models such as StyleGAN and StyleGAN2 deliver much better visual realism, structural quality, and artistic applicability. Recent GAN designs are therefore better suited to digital painting and illustration workflows in which high-quality visual results are needed.
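To make the SSIM metric concrete, the following minimal sketch computes a single-window (global) SSIM between two grayscale images given as flat lists of pixel values in the range 0 to 255. It is an illustrative simplification: practical implementations average SSIM over local sliding windows, and the function name `global_ssim` and the toy pixel data are assumptions made here, not material from the study. The stabilizing constants use the conventional choices K1 = 0.01 and K2 = 0.03.

```python
def global_ssim(x, y, dynamic_range=255.0, k1=0.01, k2=0.03):
    """Single-window SSIM between two equal-length lists of pixel values."""
    if len(x) != len(y) or not x:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(x)
    mu_x = sum(x) / n
    mu_y = sum(y) / n
    var_x = sum((a - mu_x) ** 2 for a in x) / n
    var_y = sum((b - mu_y) ** 2 for b in y) / n
    cov_xy = sum((a - mu_x) * (b - mu_y) for a, b in zip(x, y)) / n
    # Constants that stabilize the ratio when means or variances are near zero.
    c1 = (k1 * dynamic_range) ** 2
    c2 = (k2 * dynamic_range) ** 2
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


# Toy data (hypothetical): an 8-pixel "image" and two variants of it.
reference = [10, 50, 90, 130, 170, 210, 250, 30]
close_copy = [p + 2 for p in reference]   # slight brightness shift
scrambled = list(reversed(reference))     # same pixels, structure destroyed

print("identical:", global_ssim(reference, reference))
print("close copy:", global_ssim(reference, close_copy))
print("scrambled:", global_ssim(reference, scrambled))
```

An image compared with itself scores 1, a slightly brightened copy scores just below 1, and a rearranged copy with the same pixel values scores far lower, which illustrates why SSIM is read as a measure of preserved structure rather than of matching pixel statistics.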

8. Future Directions in AI-Assisted Digital Art

The rapid development of artificial intelligence technologies continues to reshape digital art and illustration. Future AI-assisted creative systems are expected to make generative tools higher in quality, more interactive, and more customizable for artists. One important direction is the combination of GAN models with diffusion-based generative systems in hybrid designs. Diffusion models have recently shown exceptional results in creating highly detailed and coherent images. Integrating GAN designs with diffusion-based systems may yield hybrid generative systems that are faster, more controllable, and more visually realistic. Such systems could help artists create sophisticated compositions while retaining fine control over stylistic characteristics, textures, and visual structures. Another potential trend is the introduction of real-time collaborative AI tools for artists. Existing AI-assisted art systems usually rely on offline processing or computationally demanding training. Next-generation systems will probably focus on interactive tools that let artists work with AI in real time during the creative process. These tools could let artists draw, paint, or edit images while the AI system offers recommendations, style options, or aesthetic improvements. Real-time collaboration would greatly speed up artistic workflows and encourage experimentation, because artists could explore multiple visual possibilities as they work Shanmugasundaram and S (2025).

AI technologies are also expected to support personalized art generation, in which generative models are tailored to the personal style and preferences of individual artists. By learning from an artist's past work, AI systems could build personalized models that capture that artist's characteristic visual features, color schemes, and compositions. This degree of personalization would let AI act as a highly customized creative assistant, able to create art that matches the artist's aesthetic vision while also offering new creative options. In addition, computational creativity offers many new research opportunities. Researchers and technologists are starting to consider ways to involve AI systems in creative workflows beyond straightforward image generation. Future studies could examine how AI can support storytelling, conceptual design, interactive media art, and collaborative artistic work across disciplines. Ethical and authorship concerns, as well as intellectual property frameworks, will also become more prominent research topics as AI-generated art becomes more common. Overall, these future directions indicate that AI-assisted systems can become more advanced creative collaborators that promote artistic innovation while maintaining the central place of human creativity in digital art Liu and Chilton (2022).

9. Conclusion

This paper examined how Generative Adversarial Networks (GANs) can serve as creative collaborators in digital painting and illustration. The study considered the contribution of GAN-based systems to several stages of the creative process, including idea generation, style transformation, image refinement, and visual exploration. By examining the technical underpinnings of GAN systems and their use in creative contexts, the paper has highlighted the growing importance of artificial intelligence as an enabling technology in modern digital art practice. The results suggest that GAN models can improve artistic workflows by producing high-quality images, supporting the exploration of ideas, and speeding up creative experimentation. Advanced architectures such as StyleGAN and StyleGAN2 are suitable for concept art, illustration development, and digital design, among other applications, because they perform particularly well at producing realistic and visually detailed images. These systems let artists try many visual variations in a short time and thereby extend the creative possibilities available throughout the design process. Rather than replacing human creativity, GAN technologies are collaborative tools that complement artistic decision-making and encourage creative visual experimentation. The paper also underlines the importance of combining accessible interfaces with well-organized technical systems so that interaction between artists and AI-based systems is effective. Human-AI partnership is key to preserving artistic authenticity while using computational power for efficiency and experimentation.
Nevertheless, challenges such as dataset bias, training complexity, and the ethical implications of authorship and originality still need to be addressed as AI-generated art becomes more popular. In summary, GAN-driven systems can be viewed as a transformative development in digital art production that widens the scope for collaboration between artists and intelligent machines. As generative AI technologies develop further, they will probably become even more significant in creative practice, providing powerful means of increasing innovation in artistic creation while retaining the primary role of human creativity.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Chen, F., Zhu, F., Wu, Q., Zheng, J., and Zhang, X. (2021). A Review of Generative Adversarial Networks and their Applications in Image Generation. Journal of Computer Science, 44, 347–369.

Hanafy, N. O. (2023). Artificial Intelligence's Effects on Design Process Creativity: A Study on the Use of AI Text-To-Image in Architecture. Journal of Building Engineering, 80, 107999. https://doi.org/10.1016/j.jobe.2023.107999

Heidrich, D., and Schreiber, A. (2023). Visualizing Source Code As Comics Using Generative AI. In Proceedings of the IEEE Working Conference on Software Visualization (VISSOFT) (1–10). https://doi.org/10.1109/VISSOFT60811.2023.00014

Joshi, M., Tiwari, A., Dhabliya, D., Lavate, S. H., Ajani, S. N., and Gandhi, Y. (2025). Building AI-Driven Frameworks for Real-Time Threat Detection and Mitigation in IoT Networks. In Proceedings of the International Conference on Emerging Smart Computing and Informatics (ESCI 2025). https://doi.org/10.1109/ESCI63694.2025.10988310

Liu, V., and Chilton, L. B. (2022). Design Guidelines for Prompt Engineering Text-To-Image Generative Models. In Proceedings of the CHI Conference on Human Factors in Computing Systems. https://doi.org/10.1145/3491102.3501825

O’Meara, J., and Murphy, C. (2023). Aberrant AI Creations: Co-Creating Surrealist Body Horror Using the DALL-E Mini Text-To-Image Generator. Convergence, 29, 1070–1096. https://doi.org/10.1177/13548565231185865

Phillips, C., Jiao, J., and Clubb, E. (2024). Testing the Capability of AI Art Tools for Urban Design. In Proceedings of the IEEE Computer Graphics and Applications Conference.

Qiu, R., and Cao, Y. (2019). On Copyright Protection of AI Creation. Journal of Nanchang University (Humanities and Social Sciences), 2, 35–43.

Ramesh, A., et al. (2021). Zero-Shot Text-To-Image Generation. In Proceedings of the International Conference on Machine Learning (ICML).

Shanmugasundaram, D. S., and S., S. (2025). The Role of Artificial Intelligence in Industry 4.0: Overview, Challenges and Outlook. International Journal of Research and Development in Management Review, 14(2), 46–50. https://doi.org/10.65521/ijrdmr.v14i2.1273

Yin, H., Zhang, Z., and Liu, Y. (2023). The Exploration of Integrating the Midjourney Artificial Intelligence Generated Content Tool into Design Systems to Direct Designers Towards Future-Oriented Innovation. Systems, 11, 566. https://doi.org/10.3390/systems11120566

Zhang, B., Zhou, Y., Zhang, M., Chen, H., and Li, J. (2023). Review of Research on Improvement and Application of Generative Adversarial Networks. Application Research of Computers, 40, 649–658.


This work is licensed under a: Creative Commons Attribution 4.0 International License


© ShodhKosh 2026. All Rights Reserved.