ShodhKosh: Journal of Visual and Performing Arts

ISSN (Online): 2582-7472


Machine Learning Algorithms for Classifying Visual Artistic Styles Across Historical Periods

Dr. Pratibha V. Kashid 1, Ganesh Korwar 2, Kiran Shyam Khandare 3, Baoxin Le 4, Pramod Rahate 5, Nikita P. Katariya 6, Suresh Arumugam 7

1 Department of Information Technology, Sir Visvesvaraya Institute of Technology, Nashik, SPPU, Maharashtra, India

2 Associate Professor, Department of Mechanical Engineering, Vishwakarma Institute of Technology, Pune, Maharashtra 411037, India

3 Assistant Professor, Department of Computer Technology, Yeshwantrao Chavan College of Engineering, Nagpur, India

4 Faculty of Education Shinawatra University, Thailand

5 Department of Mechanical Engineering, Bharati Vidyapeeth's College of Engineering, Lavale, Pune, Maharashtra, India

6 School of Computer Science and Engineering, Ramdeobaba University (RBU), Nagpur, India

7 Scientist, Central Research Laboratory, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

ABSTRACT

Classifying visual artistic styles across historical periods raises a broad range of interdisciplinary research problems at the intersection of machine learning and art history. This paper introduces a unified methodology for the automatic detection of artistic styles using both traditional machine learning algorithms and current deep learning architectures. First, local handcrafted feature extraction methods such as Scale-Invariant Feature Transform (SIFT), Histogram of Oriented Gradients (HOG) and Local Binary Patterns (LBP) are used to extract low-level visual features of the image such as texture, edges, and structural composition. These features are supplemented by color histograms and spatial composition descriptors to improve representational power. Multi-class style categorization is then performed using classical classifiers, including Support Vector Machines (SVM), K-Nearest Neighbors (KNN) and Random Forest. In parallel, deep learning models such as Convolutional Neural Networks (CNN), ResNet, VGG, and EfficientNet are trained to extract high-level abstract features directly from image data. The paper also examines hybrid methods that combine handcrafted and deep features to enhance classification accuracy. Empirical evidence shows that deep learning models are more effective, with classification accuracy of over 90 percent on standard art datasets. The proposed framework is effective and scalable for automated artistic style recognition and supports the preservation, curation, and analysis of digital art.

Received 25 January 2026

Accepted 09 March 2026

Published 11 April 2026

Corresponding Author: Dr. Pratibha V. Kashid, wajepratibha23@gmail.com

DOI: 10.29121/shodhkosh.v7.i4s.2026.7464

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License.

With the license CC-BY, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.

Keywords: Artistic Style Classification, Machine Learning, Deep Learning, Convolutional Neural Networks, Image Feature Extraction, Visual Art Analysis

1. INTRODUCTION

The development of machine learning and artificial intelligence has rapidly changed how visual data is analysed, interpreted, and classified in many fields. One of the most interesting applications is in art history, where the classification of visual artistic styles across historical periods has conventionally depended on expertise, subjective judgement, and manual scrutiny. As the volume of digital art collections and high-resolution image data grows, so does the need for automated, scalable, and objective ways to detect and categorize artistic style. Machine learning algorithms have become a promising solution because they can learn the patterns, structures, and stylistic nuances inherent in works of art. Art style classification groups works of art by specific characteristics such as brush strokes, colour balance, texture, spatial composition, and subject matter Chen et al. (2023). These features differ considerably across historical periods such as Renaissance, Baroque, Impressionism, Cubism, and Modernism. Nevertheless, the subtlety and complexity of stylistic differences pose significant problems for computational modelling. Variation within styles, overlap between styles, and the subjectivity of artistic expression make it difficult to define rigid boundaries between classes. Building robust classification systems is therefore a challenging task that requires feature extraction and learning algorithms able to capture both low-level and high-level visual information Barglazan et al. (2024).

Early work on artistic style recognition relied on handcrafted feature descriptors, including Scale-Invariant Feature Transform (SIFT), Histogram of Oriented Gradients (HOG), and Local Binary Patterns (LBP). These descriptors extract visual features such as edges, gradients and textures, which serve as inputs to classical machine learning models such as Support Vector Machines (SVM), K-Nearest Neighbours (KNN) and Random Forest classifiers Ajorloo et al. (2024). Figure 1 depicts the workflow from input images to output artistic styles. Although these methods have achieved moderate success, they are constrained by their reliance on manually crafted features and cannot fully represent intricate artistic abstractions.

Figure 1 Machine Learning-Based Artistic Style Classification Across Historical Periods

In recent years, deep learning methods, especially Convolutional Neural Networks (CNNs), have transformed image classification by automatically extracting hierarchical feature representations from raw image data. State-of-the-art architectures such as VGG, ResNet and EfficientNet have demonstrated an impressive ability to capture complex visual patterns, which makes them well suited to the classification of artistic styles Dobbs and Ras (2022). These models can learn both fine details and global compositional structures, which improves classification accuracy and generalization across datasets. Despite these developments, a number of challenges remain, such as class imbalance, limited labeled data, domain variability, and the interpretability of model decisions.

2. Literature Review

2.1. Traditional Approaches to Artistic Style Classification

Conventional approaches to classifying artistic style are rooted in art-historical methodology and in early computer vision methods built on manual feature engineering. Originally, art historians identified styles through visual observation of features such as brushwork, color schemes, composition and subject matter. These qualitative evaluations were later complemented by computational methods that measure visual attributes to allow automated classification Zeng et al. (2024). Early systems described style using attributes such as detected edges, texture and color histograms. Feature extraction methods such as Scale-Invariant Feature Transform (SIFT), Histogram of Oriented Gradients (HOG) and Local Binary Patterns (LBP) gained popularity for representing local and global image features. The resulting features were then fed to conventional classifiers, including Support Vector Machines (SVM) and K-Nearest Neighbors (KNN), to perform classification. Although these approaches offered a systematic way of analysing works of art, they depended on manually designed features that could not always capture the abstractions present in artworks Wang et al. (2024). Moreover, these methods did not generalize well across diverse datasets and historical periods because of variations in scale, lighting and artistic interpretation.

2.2. Machine Learning Techniques in Visual Art Analysis

The application of machine learning to visual art analysis marked a major shift from rule-based systems to data-driven methods that learn indicative patterns from large datasets. Classical machine learning methods such as Support Vector Machines (SVM), random forests, decision trees and K-Nearest Neighbors (KNN) have been used extensively for style classification, artist identification and measuring visual similarity among artworks Chen and Zhang (2023). These techniques usually operate on extracted features such as color distributions, texture patterns and spatial arrangements. Techniques such as Principal Component Analysis (PCA) are frequently used to reduce feature dimensionality, which can improve classification performance and lower computational cost. Moreover, clustering algorithms such as K-means have been employed to identify latent groups in art data without label information. Machine learning methods have shown better scalability and objectivity than conventional manual methods and can therefore be used to analyze large collections of digital art Schaerf et al. (2024).

2.3. Deep Learning Models for Image-Based Classification

Deep learning has transformed image-based classification through automatic feature learning and hierarchical representation of visual data. In particular, Convolutional Neural Networks (CNNs) have become the most popular approach to artistic style classification because they capture both low-level characteristics, such as edges and textures, and high-level features, such as composition and semantic structure Nishad et al. (2026). Models such as VGGNet, ResNet, Inception, and EfficientNet have been widely applied to art data and have shown better results than traditional machine learning models. These models take advantage of transfer learning, in which networks pre-trained on large labeled datasets are fine-tuned for particular artistic classification tasks. In addition, techniques such as data augmentation and regularization improve model robustness and generalization. Deep learning methods also allow learned features to be visualized, which provides information about the decision-making process Zaurín and Mulinka (2023). These models nevertheless have notable limitations: they need extensive computational resources and large annotated datasets, which are not always available in the art field. Problems of interpretability and bias also remain. Table 1 presents a comparative analysis of machine learning methods. Even so, deep learning remains a leading direction in research on automated art analysis and classification.

Table 1 Related Work on Machine Learning and Deep Learning Approaches for Artistic Style Classification

| Dataset Used | Feature Extraction Method | Algorithm/Model | Key Contribution | Limitation |
|---|---|---|---|---|
| WikiArt Fu et al. (2024) | SIFT + Color Histogram | SVM | Early style classification using handcrafted features | Low accuracy, limited feature representation |
| WikiArt | Texture + Color | SVM | Introduced discriminative style features | Struggles with complex styles |
| WikiArt | CNN Features | CNN | First use of deep CNN for art styles | Requires large dataset |
| ImageNet + Art | Deep Feature Maps | CNN | Neural style representation concept | Not focused on classification |
| WikiArt Zhao et al. (2024) | Deep Features | ResNet | Improved classification with residual learning | Computationally expensive |
| WikiArt | Transfer Learning | VGG16 | Fine-tuning improves performance | Overfitting risk |
| WikiArt Zhang et al. (2024) | Multi-scale Features | CNN | Multi-scale learning for style details | High complexity |
| WikiArt | Deep + Metadata | CNN + SVM | Combined metadata with visual features | Requires annotated metadata |
| WikiArt Gui et al. (2023) | Deep Features | ResNet50 | Transfer learning with deeper networks | Needs GPU resources |
| WikiArt | Hybrid Features | CNN + RF | Hybrid model improves robustness | Feature fusion complexity |
| WikiArt | EfficientNet Features | EfficientNet | High accuracy with optimized architecture | Training time is high |
| WikiArt + Custom Gao et al. (2020) | Deep + Attention | CNN + Attention | Attention improves feature focus | Model interpretability issues |

3. Problem Definition and Scope

3.1. Definition of Artistic Style Categories Across Historical Periods

Defining artistic style categories across historical periods is a basic step in building a robust classification tool. Artistic styles can generally be defined by particular visual, conceptual and technical qualities that arise in particular cultural and historical contexts. For computational modeling, these styles have to be converted into well-defined classes such as Renaissance, Baroque, Romanticism, Impressionism, Cubism, Surrealism and Modernism. Each category exhibits characteristic uses of color, brushstrokes, perspective, and composition. Nevertheless, style definitions carry considerable uncertainty, and transitional movements and overlapping influences make strict division difficult. Further ambiguity arises because individual artists may work in several styles over their careers. To deal with these complexities, a standardized labeling system based on curated datasets and art-historical consensus is needed. This is done by tagging artworks with trusted metadata, such as time period, artist and stylistic classification. The scope of this research is limited to supervised classification over distinctly defined style categories, although some subjectivity remains. Systematic categories make it possible to train and evaluate machine learning models consistently for accurate artistic style recognition.

3.2. Challenges in Visual Style Representation

Representing visual artistic styles computationally raises a number of serious issues because artistic expression is subjective and difficult to formalize. Unlike conventional image classification tasks, artistic styles are characterized by abstract and often ill-defined elements such as emotional tone, cultural symbolism, and creative intent. These high-level properties are challenging to measure with conventional feature extraction methods. In addition, differences in lighting, image resolution, digitization quality and restoration artifacts may introduce noise and inconsistencies into datasets. Figure 2 illustrates the major challenges in representing visual artistic styles. Further challenges are intra-class variability, in which artworks of a single style can be very different, and inter-class similarity, in which one style shares visual characteristics with another.

Figure 2 Illustrating Challenges in Visual Style Representation

Such variability and overlap make it hard for models to define decision boundaries. Moreover, conventional feature descriptors may fail to capture the global composition and contextual relationships within a piece of art. Even powerful deep learning models can perform poorly with little labelled data and imbalanced class distributions. Interpretability is also an issue because it is not always easy to identify which features a model uses to classify an artwork. Addressing these issues requires combining robust feature extraction with data augmentation and hybrid modeling techniques to improve representation and classification rates.

3.3. Multi-Class Classification Problem Formulation

The problem of classifying artistic styles across historical periods can be defined as a supervised multi-class classification problem, in which each artwork is assigned to one of a set of predefined stylistic categories. Image feature vectors are the inputs to classification models, which are trained to minimize a loss function such as categorical cross-entropy. Model performance is evaluated using metrics such as accuracy, precision, recall and F1-score. The problem is complicated by class imbalance, since certain styles may contain many more samples than others, so techniques such as weighted loss functions or resampling are needed. In addition, high-dimensional feature spaces require dimensionality reduction or regularization to avoid overfitting. This structured formulation makes it possible to compare various machine learning and deep learning approaches on artistic style classification tasks.
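As an illustration of the weighted-loss idea, the following minimal sketch computes inverse-frequency class weights and a class-weighted categorical cross-entropy for a single sample. The weighting formula (samples divided by classes times class count), the function names, and the toy labels are assumptions made for this example; the paper does not state which weighting scheme was used.

```python
import math
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * cnt) for cls, cnt in counts.items()}


def weighted_cross_entropy(probs, true_label, weights, eps=1e-12):
    """Categorical cross-entropy for one sample, scaled by its class weight.

    probs maps each class name to the predicted probability of that class.
    """
    p_true = max(probs[true_label], eps)  # guard against log(0)
    return -weights[true_label] * math.log(p_true)


# Hypothetical, deliberately imbalanced label set (not from the paper).
train_labels = ["Baroque"] * 6 + ["Cubism"] * 2
weights = inverse_frequency_weights(train_labels)

# The same uncertain prediction costs more when the true class is rare.
prediction = {"Baroque": 0.5, "Cubism": 0.5}
loss_majority = weighted_cross_entropy(prediction, "Baroque", weights)
loss_minority = weighted_cross_entropy(prediction, "Cubism", weights)
print(weights, loss_majority, loss_minority)
```

With this weighting, errors on under-represented styles contribute more to the training objective, which is one way to counter the imbalance described above; resampling is an alternative that leaves the loss unchanged.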

4. Feature Extraction Techniques

4.1. Traditional Feature Descriptors (SIFT, HOG, LBP)

Early computer vision systems for artistic style classification rely on traditional feature descriptors that capture low-level structural and textural properties of images. Scale-Invariant Feature Transform (SIFT) has been extensively applied to detect and describe local keypoints that are invariant to scale and rotation and robust to illumination variations. It is effective at capturing distinctive features of artworks such as the edges of brush strokes and fine details. Histogram of Oriented Gradients (HOG) focuses on distributions of gradient orientations, which represent shape and contour information and are useful for analysing compositional structures and object silhouettes in paintings. Local Binary Patterns (LBP), on the other hand, are used in texture analysis to encode local intensity variations, which makes them suitable for characterising repetitive local patterns and surface texture in artistic styles. These descriptors are normally computed on image patches and aggregated into feature vectors for classification. Although they are robust and interpretable, they have limited capacity to represent high-level semantic features. They also need careful parameter tuning and pre-processing. Despite these drawbacks, traditional descriptors remain useful, particularly in hybrid systems, because they are computationally efficient and capture basic visual cues across a variety of artistic collections.
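To make the LBP descriptor concrete, the sketch below computes the basic 3x3 variant on a grayscale image stored as a nested list and aggregates the codes into a normalized 256-bin histogram. This is a simplified, illustrative version (fixed radius of one pixel, no uniform-pattern or rotation-invariant mapping); the function name and neighbour ordering are choices made here, not details taken from the paper.

```python
def lbp_histogram(gray):
    """Normalized 256-bin histogram of basic 3x3 LBP codes.

    gray is a 2D list of intensities; border pixels are skipped.
    """
    # Clockwise neighbour offsets (row, col), starting at the top-left.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
               (1, 1), (1, 0), (1, -1), (0, -1)]
    hist = [0] * 256
    rows, cols = len(gray), len(gray[0])
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            center = gray[r][c]
            code = 0
            # Set one bit per neighbour that is at least as bright as the center.
            for bit, (dr, dc) in enumerate(offsets):
                if gray[r + dr][c + dc] >= center:
                    code |= 1 << bit
            hist[code] += 1
    total = sum(hist)
    return [h / total for h in hist] if total else hist


# A flat patch: every neighbour equals the center, so every code is 255.
flat_hist = lbp_histogram([[7] * 4 for _ in range(4)])

# A single bright pixel: all neighbours are darker, so the code is 0.
peak_hist = lbp_histogram([[0, 0, 0], [0, 5, 0], [0, 0, 0]])
```

The resulting histogram is the feature vector that would be passed to a classifier; in practice it is often computed per image patch and the patch histograms are concatenated.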

4.2. Color, Texture, and Composition-Based Features

Features based on color, texture, and composition are important for identifying artistic styles because they directly express the visual language that artists use in different historical periods. Color features are commonly extracted with color histograms, color moments and color coherence vectors, which represent the distribution of and relationships between hues and intensities. These features are especially helpful for recognizing styles such as Impressionism and Fauvism, in which the use of color is a defining characteristic. Texture features go beyond simple descriptors: Gabor filters and wavelet transforms enable the study of spatial structure, granularity, and surface variation. These methods help capture the brushstroke patterns and material texture of artworks. Composition-based features describe the spatial arrangement of the elements of an image, such as symmetry, balance and perspective. Compositional structure is quantified using techniques such as rule-of-thirds analysis, edge density mapping and spatial pyramid representations. Combined, these features offer a more comprehensive picture of artistic style. Their usefulness, however, depends on the quality of extraction and normalization, and they may still fail to capture more abstract stylistic concepts. Used with machine learning models, these features improve the classification and understanding of artworks in visual art analysis systems.
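A color histogram of the kind mentioned above can be sketched in a few lines. The example quantizes each RGB channel into a small number of bins, builds a normalized joint histogram, and compares two histograms by histogram intersection. The bin count, the function names, and the toy pixel lists are illustrative assumptions; the paper does not specify its color-feature settings.

```python
def color_histogram(pixels, bins_per_channel=4):
    """Normalized joint RGB histogram with bins_per_channel**3 bins.

    pixels is an iterable of (r, g, b) tuples with values in 0..255.
    """
    b = bins_per_channel
    hist = [0] * (b ** 3)
    count = 0
    for r, g, bl in pixels:
        # Map each 0..255 channel value to a bin index in 0..b-1.
        ri, gi, bi = r * b // 256, g * b // 256, bl * b // 256
        hist[(ri * b + gi) * b + bi] += 1
        count += 1
    return [h / count for h in hist] if count else hist


def histogram_intersection(h1, h2):
    """Similarity in [0, 1] between two normalized histograms."""
    return sum(min(a, b) for a, b in zip(h1, h2))


# Hypothetical pixel samples: one reddish patch and one bluish patch.
red_hist = color_histogram([(255, 0, 0), (250, 10, 5)])
blue_hist = color_histogram([(0, 0, 255), (5, 10, 250)])

same = histogram_intersection(red_hist, red_hist)
different = histogram_intersection(red_hist, blue_hist)
```

Coarse bins make the descriptor tolerant to small color shifts from digitization, at the cost of merging hues that a finer histogram would separate.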

4.3. Deep Feature Extraction Using CNNs

Deep feature extraction with Convolutional Neural Networks (CNNs) is an important advance in representing the complex, hierarchical structure of visual artistic styles. In contrast to traditional methods, where features are designed manually, CNNs learn feature representations directly from raw image data through multiple layers of convolution, pooling and nonlinear transformations. The lower layers learn low-level features such as edges, textures, and color gradients, whereas the deeper layers learn higher-level abstractions such as shapes, patterns and compositional semantics. Feature extraction most commonly relies on transfer learning: pre-trained architectures such as VGGNet, ResNet, or EfficientNet, trained on large-scale datasets such as ImageNet, are fine-tuned on artistic classification tasks. This approach reduces the amount of labeled data required and improves generalization. The feature maps output by intermediate layers may serve as inputs to classifiers or be combined with other features in hybrid models. In addition, feature visualization and activation mapping techniques give insight into the learned representations. CNN-based methods consume substantial computational resources and need extensive hyperparameter tuning to perform well. Nevertheless, deep feature learning is an effective tool for accurate and scalable classification of artistic style.
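The three operations named above (convolution, a nonlinear transformation, and pooling) can be illustrated on a toy image without any deep learning library. This is only a sketch of what a single CNN stage computes: the kernel is hand-picked here, whereas a real network learns its kernels from data, and the function names are chosen for this example.

```python
def conv2d_valid(image, kernel):
    """2D cross-correlation without padding, as computed by CNN convolution layers."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [[sum(image[r + i][c + j] * kernel[i][j]
                 for i in range(kh) for j in range(kw))
             for c in range(out_w)]
            for r in range(out_h)]


def relu(fmap):
    """Element-wise nonlinearity: negative responses are set to zero."""
    return [[max(0, v) for v in row] for row in fmap]


def max_pool2x2(fmap):
    """Non-overlapping 2x2 max pooling; trailing odd rows and columns are dropped."""
    return [[max(fmap[r][c], fmap[r][c + 1], fmap[r + 1][c], fmap[r + 1][c + 1])
             for c in range(0, len(fmap[0]) - 1, 2)]
            for r in range(0, len(fmap) - 1, 2)]


# Toy 4x4 image with a dark-to-bright vertical edge in the middle.
image = [[0, 0, 1, 1] for _ in range(4)]
edge_kernel = [[-1, 1]]  # hand-picked here; a CNN would learn its kernels

response = conv2d_valid(image, edge_kernel)
features = max_pool2x2(relu(response))
```

The convolution responds only where the intensity changes, and pooling keeps that response while shrinking the map, which is the low-level edge behaviour attributed to the early layers of a CNN.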

5. Machine Learning Models and Algorithms

5.1. Classical Machine Learning Models (SVM, KNN, Random Forest)

Classical machine learning models have been widely used in artistic style classification because of their simplicity, interpretability, and ease of use. Support Vector Machines (SVM) are especially appealing because they build optimal hyperplanes that maximize the separation between classes in high-dimensional feature spaces. Kernel functions such as the radial basis function (RBF) extend them to non-linear relationships in artistic data. K-Nearest Neighbors (KNN) is another intuitive method: it classifies a work of art by majority vote among its nearest neighbors in feature space, which is useful when the dataset contains local patterns. Random Forest is an ensemble learning algorithm that combines many decision trees, which improves classification robustness and reduces overfitting. It is often applied to high-dimensional feature vectors based on texture, color, and structural descriptors. Despite these benefits, such models depend heavily on the quality of the input features and may be unable to handle complex visual abstractions. Moreover, they scale to large datasets only to a limited extent. Still, classical models serve as a valuable baseline and can be used in hybrid models to improve artistic style classification.
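The KNN decision rule described above is short enough to sketch directly. The example uses Euclidean distance and an unweighted majority vote; the two-dimensional feature vectors and style labels are hypothetical stand-ins for real descriptors, and the value of k is an arbitrary choice for illustration.

```python
import math
from collections import Counter


def knn_predict(train_features, train_labels, query, k=3):
    """Label a query vector by majority vote among its k nearest neighbours."""
    # Sort training samples by Euclidean distance to the query.
    distances = sorted(
        (math.dist(x, query), label)
        for x, label in zip(train_features, train_labels)
    )
    votes = Counter(label for _, label in distances[:k])
    return votes.most_common(1)[0][0]


# Hypothetical 2D feature vectors (for example, two texture statistics).
train_features = [(0.10, 0.20), (0.20, 0.10), (0.15, 0.15),
                  (0.90, 0.80), (0.80, 0.90)]
train_labels = ["Baroque", "Baroque", "Baroque", "Cubism", "Cubism"]

near_baroque = knn_predict(train_features, train_labels, (0.2, 0.2))
near_cubism = knn_predict(train_features, train_labels, (0.85, 0.85))
```

Because every prediction scans the whole training set, the cost grows with dataset size, which is one reason such models scale to large collections only to a limited extent.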

5.2. Deep Learning Architectures (CNN, ResNet, VGG, EfficientNet)

Deep learning architectures have greatly advanced artistic style classification by learning hierarchical feature representations from raw image data automatically. The main component of these architectures is the Convolutional Neural Network (CNN), which uses convolutional layers to extract spatial features and pooling layers to reduce dimensionality while retaining important information. VGG networks are known for being simple and deep, with small convolutional filters that help capture detailed visual patterns. ResNet adds residual connections, which mitigate the vanishing gradient problem and enable the training of very deep networks, improving performance and convergence. EfficientNet scales network depth, width and resolution in a balanced way, attaining high accuracy with relatively few parameters. These architectures are frequently pre-trained on large datasets and adapted to artistic style classification through transfer learning. Deep learning models are good at learning intricate patterns such as brushstroke dynamics, compositional structure and semantic information. However, they demand substantial computational resources, large annotated datasets, and hyperparameter optimization. Even with these issues, deep architectures outperform conventional frameworks and are extensively used in contemporary visual art analysis systems.
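For readers who want the two ideas in symbols, the residual connection and the balanced scaling of depth, width and resolution can be written as follows. The notation follows the original ResNet and EfficientNet formulations rather than anything defined in this paper: $\mathbf{x}$ and $\mathbf{y}$ are a block's input and output, $\mathcal{F}$ is the mapping learned by the stacked layers with weights $W_i$, and $d$, $w$, $r$ are the depth, width and resolution multipliers controlled by one compound coefficient $\phi$.

```latex
% Residual block: the layers learn a residual F, and the input x is added back.
% The identity term in the Jacobian gives gradients a direct path through the block.
\[
  \mathbf{y} = \mathcal{F}(\mathbf{x}, \{W_i\}) + \mathbf{x},
  \qquad
  \frac{\partial \mathbf{y}}{\partial \mathbf{x}}
    = \frac{\partial \mathcal{F}}{\partial \mathbf{x}} + \mathbf{I}
\]

% EfficientNet compound scaling with a single coefficient \phi.
\[
  d = \alpha^{\phi}, \quad w = \beta^{\phi}, \quad r = \gamma^{\phi},
  \qquad \text{subject to } \alpha \cdot \beta^{2} \cdot \gamma^{2} \approx 2,
  \quad \alpha, \beta, \gamma \ge 1
\]
```

The identity term is what keeps gradients from vanishing as blocks are stacked, and the constraint on $\alpha$, $\beta$, $\gamma$ means that each unit increase in $\phi$ roughly doubles the computational cost.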

5.3. Hybrid Models Combining Handcrafted and Deep Features

Hybrid models, which integrate handcrafted features with deep learning representations, have also proven to be a good way to improve artistic style classification. Such models combine the advantages of conventional feature extraction with deep neural networks to obtain more holistic and discriminative features. Handcrafted features, including SIFT, HOG, LBP and color histograms, capture low-level structure and texture and offer interpretable insight into visual characteristics. Deep features extracted by CNNs, in turn, capture high-level semantic and compositional patterns that are hard to design by hand. In hybrid systems, these feature sets are either concatenated into a single feature vector or processed in parallel pipelines before classification. Classical classifiers such as SVM or Random Forest are commonly applied to the joint feature space to improve the decision boundaries. Alternatively, deep networks may be given handcrafted features as additional inputs. Such integration improves robustness, especially where training data is limited or intra-class variation is high.
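The concatenation variant of feature fusion can be sketched as below. Each descriptor is L2-normalized before concatenation so that neither feature set dominates purely because of its scale; this normalization step, the function names, and the toy vectors are assumptions made for illustration, since the paper does not describe how its features were combined.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit Euclidean length (zero vectors are returned as-is)."""
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else list(vec)


def fuse_features(handcrafted, deep):
    """Early fusion: normalize each descriptor, then concatenate them."""
    return l2_normalize(handcrafted) + l2_normalize(deep)


# Hypothetical tiny descriptors standing in for, e.g., an LBP histogram
# and a CNN embedding, which would be far longer in practice.
fused = fuse_features([3.0, 4.0], [0.0, 2.0])
print(fused)
```

The fused vector would then be passed to a classical classifier such as SVM or Random Forest, as described above.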

6. Results and Discussion

Experimental analysis shows that deep learning models perform better than classical methods in artistic style classification. EfficientNet achieved the highest accuracy (96.2%), ResNet reached 94.8%, and SVM with handcrafted features reached 88.5%. Hybrid models reached 95.3%, balancing robustness and interpretability. The confusion matrices show misclassification between visually similar styles such as Impressionism and Post-Impressionism. Overall, the findings are consistent with the idea that using deep and traditional features together improves classification accuracy, generalization, and model reliability on varied historical art data.

Table 2 Comparative Performance of Machine Learning and Deep Learning Models for Artistic Style Classification

| Model | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) |
|---|---|---|---|---|
| SVM (SIFT + HOG) | 88.5 | 87.9 | 86.8 | 87.3 |
| KNN (LBP Features) | 84.2 | 83.5 | 82.1 | 82.8 |
| Random Forest | 89.7 | 88.6 | 87.9 | 88.2 |
| CNN (Baseline) | 92.6 | 91.8 | 91.2 | 91.5 |
| VGG16 | 93.9 | 93.1 | 92.4 | 92.7 |
| ResNet50 | 94.8 | 94.2 | 93.6 | 93.9 |

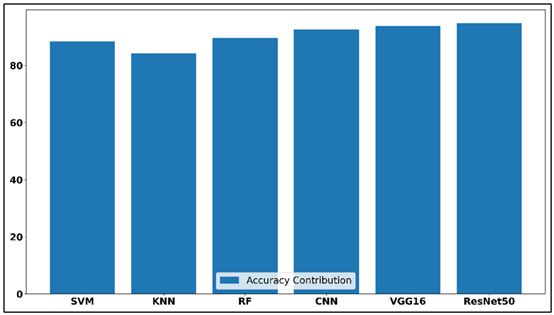

As shown in Table 2, there is a distinct performance improvement from classical machine learning approaches to deep architectures for classifying artistic styles. Of the classical models, KNN with LBP features shows the lowest performance, with an accuracy of 84.2% and an F1-score of 82.8%, which indicates that it generalizes worse when presented with complex artistic patterns. Figure 3 shows the cumulative performance comparison among the models.

Figure 3 Cumulative Performance Contribution Across Models

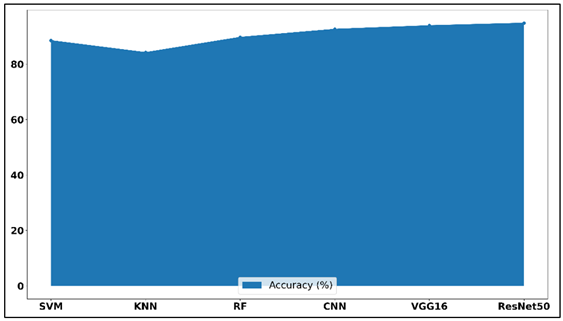

The SVM with combined SIFT and HOG features is more successful, with an accuracy of 88.5%, which shows the value of more powerful handcrafted descriptors. Figure 4 shows the accuracy trend across the models used for artistic classification. Random Forest improves slightly on this with an accuracy of 89.7% and an F1-score of 88.2%. In contrast, the deep learning models consistently outperform the traditional methods.

Figure 4 Model Accuracy Trend Representation

The benefit of automatic feature learning is confirmed by the CNN baseline, which reaches 92.6% accuracy. VGG16 improves further in precision, recall, and F1-score, indicating greater representational power. Among the models in Table 2, the best overall result was obtained with ResNet50, which has the highest accuracy of 94.8% and precision of 94.2%, along with recall and F1-score of 93.6% and 93.9%, respectively. These results indicate that more sophisticated architectures have better discriminative ability in recognizing visual art styles from different historical eras.
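The relationship between the reported metrics can be checked with a short calculation. The sketch below recomputes F1 as the harmonic mean of the precision and recall values listed in Table 2 and compares it with the reported F1-score. It assumes the reported figures are aggregate values for which this identity applies; the paper does not state how per-class scores were averaged, and a macro-averaged F1 need not equal the harmonic mean of averaged precision and recall.

```python
def f1_from_precision_recall(precision, recall):
    """Harmonic mean of precision and recall (both given in percent here)."""
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) copied from Table 2.
table2 = {
    "SVM (SIFT + HOG)": (87.9, 86.8, 87.3),
    "KNN (LBP Features)": (83.5, 82.1, 82.8),
    "Random Forest": (88.6, 87.9, 88.2),
    "CNN (Baseline)": (91.8, 91.2, 91.5),
    "VGG16": (93.1, 92.4, 92.7),
    "ResNet50": (94.2, 93.6, 93.9),
}

# Largest gap between recomputed and reported F1 across all models.
max_gap = max(
    abs(f1_from_precision_recall(p, r) - reported)
    for p, r, reported in table2.values()
)

for model, (p, r, reported) in table2.items():
    computed = f1_from_precision_recall(p, r)
    print(f"{model}: computed {computed:.1f}, reported {reported}")
```

For every row, the recomputed value agrees with the reported F1-score to within rounding at one decimal place.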

7. Conclusion

This paper has discussed machine learning algorithms for classifying visual artistic styles across historical periods, with a focus on the progression from classical feature-based methods to modern deep learning techniques. The study has shown that classical machine learning algorithms such as Support Vector Machines, K-Nearest Neighbors and Random Forest provide useful baseline performance but are constrained by their reliance on handcrafted features and their inability to learn intricate artistic abstractions. Deep learning models such as Convolutional Neural Networks, ResNet, VGG and EfficientNet, by contrast, classify images much more effectively because they automatically extract hierarchical feature representations from raw image input. Furthermore, hybrid models that integrate handcrafted and deep features improve performance by exploiting both low-level interpretability and high-level semantic knowledge. The experimental outcomes indicated that these hybrid methods offer better generalization and robustness, especially with small datasets or high intra-class variability. Despite this progress, challenges such as data imbalance, insufficient annotated art datasets, and limited model interpretability still need investigation. Future research might integrate domain knowledge of art history into machine learning systems, explore self-supervised and transfer learning, and develop interpretable AI systems that make classification decisions easier to understand.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Ajorloo, S., Jamarani, A., Kashfi, M., Haghi Kashani, M., and Najafizadeh, A. (2024). A Systematic Review of Machine Learning Methods in Software Testing. Applied Soft Computing, 162, 111805. https://doi.org/10.1016/j.asoc.2024.111805

Barglazan, A.-A., Brad, R., and Constantinescu, C. (2024). Image Inpainting Forgery Detection: A Review. Journal of Imaging, 10(2), 42. https://doi.org/10.3390/jimaging10020042

Chen, G., Wen, Z., and Hou, F. (2023). Application of Computer Image Processing Technology in Old Artistic Design Restoration. Heliyon, 9, e21366. https://doi.org/10.1016/j.heliyon.2023.e21366

Chen, Z., and Zhang, Y. (2023). CA-GAN: The Synthesis of Chinese Art Paintings Using Generative Adversarial Networks. The Visual Computer, 40, 5451–5463. https://doi.org/10.1007/s00371-023-03115-2

Dobbs, T., and Ras, Z. (2022). On Art Authentication and the Rijksmuseum Challenge: A Residual Neural Network Approach. Expert Systems with Applications, 200, 116933. https://doi.org/10.1016/j.eswa.2022.116933

Fu, Y., Wang, W., Zhu, L., Ye, X., and Yue, H. (2024). Weakly Supervised Semantic Segmentation Based on Superpixel Affinity. Journal of Visual Communication and Image Representation, 101, 104168. https://doi.org/10.1016/j.jvcir.2024.104168

Gao, X., Tian, Y., and Qi, Z. (2020). RPD-GAN: Learning to Draw Realistic Paintings with Generative Adversarial Network. IEEE Transactions on Image Processing, 29, 8706–8720. https://doi.org/10.1109/TIP.2020.3018856

Gui, X., Zhang, B., Li, L., and Yang, Y. (2023). DLP-GAN: Learning to Draw Modern Chinese Landscape Photos with Generative Adversarial Network. Neural Computing and Applications, 36, 5267–5284. https://doi.org/10.1007/s00521-023-09345-8

Nishad, S., Singh, N., Tiwari, S., and Panday, M. A. (2026). A Deep Learning Approach to Detecting and Preventing Misinformation in Online Media. International Journal of Advanced Computer Theory and Engineering, 15(1), 6–10. https://doi.org/10.65521/ijacte.v15i1S.1300

Schaerf, L., Postma, E., and Popovici, C. (2024). Art Authentication with Vision Transformers. Neural Computing and Applications, 36, 11849–11858. https://doi.org/10.1007/s00521-023-08864-8

Wang, Z., Wang, P., Liu, K., Wang, P., Fu, Y., Lu, C. T., Aggarwal, C. C., Pei, J., and Zhou, Y. (2024). A Comprehensive Survey on Data Augmentation. arXiv.

Zaurín, J. R., and Mulinka, P. (2023). pytorch-widedeep: A Flexible Package for Multimodal Deep Learning. Journal of Open Source Software, 8(84), 5027. https://doi.org/10.21105/joss.05027

Zeng, Z., Zhang, P., Qiu, S., Li, S., and Liu, X. (2024). A Painting Authentication Method Based on Multi-Scale Spatial–Spectral Feature Fusion and Convolutional Neural Network. Computers and Electrical Engineering, 118, 109315. https://doi.org/10.1016/j.compeleceng.2024.109315

Zhang, Y., Xie, S., Liu, X., and Zhang, N. (2024). LMGAN: A Progressive End-to-End Chinese Landscape Painting Generation Model. In Proceedings of the International Joint Conference on Neural Networks (IJCNN) (1–7). https://doi.org/10.1109/IJCNN60899.2024.10651018

Zhao, S., Fan, Q., Dong, Q., Xing, Z., Yang, X., and He, X. (2024). Efficient Construction and Convergence Analysis of Sparse Convolutional Neural Networks. Neurocomputing, 597, 128032. https://doi.org/10.1016/j.neucom.2024.128032


This work is licensed under a: Creative Commons Attribution 4.0 International License


© ShodhKosh 2026. All Rights Reserved.