ShodhKosh: Journal of Visual and Performing Arts
ISSN (Online): 2582-7472


Sensor-Integrated Digital Canvases Allowing Artists to Manipulate Paintings Through Gestural Inputs

Al Yusra Sikander 1, Siddharth Sriram 2, Dr. Chetanaba G. Rajput 3, Ponmurugan Panneerselvam 4, Uma S 5, Dr. M. Sugadev 6

1 Assistant Professor, Department of Computer Science and Engineering (AIML), Noida Institute of Engineering and Technology, Greater Noida, Uttar Pradesh, India

2 Centre of Research Impact and Outcome, Chitkara University, Rajpura- 140417, Punjab, India

3 Assistant Professor, Faculty of Arts, Gokul Global University, Sidhpur, Gujarat, India

4 Professor, Department of Research, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

5 Associate Professor, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

6 Associate Professor, Department of Electronics and Communication Engineering, Sathyabama Institute of Science and Technology, Chennai, Tamil Nadu, India

ABSTRACT

Sensor-integrated digital canvases are a new development in interactive visual art, allowing artists to control digital paintings with natural gestures. This paper presents a complete architecture for building and deploying such systems, based on the integration of multimodal sensing technologies such as motion sensors, depth cameras, inertial measurement units (IMUs), and touch-sensitive interfaces. The proposed system architecture combines a robust gesture processing unit with a high-quality rendering unit to support real-time artistic interaction. A step-wise gesture recognition and classification algorithm, built on machine learning, enables accurate analysis of hand movements and body actions. These gestures are dynamically mapped to artistic operations such as brush strokes, texture adjustment, color mixing, and geometric transformations. Moreover, the system's adaptive learning capabilities tailor its responses to each user's interaction patterns, making it more expressive for creative work. The system is evaluated experimentally with a curated set of gestures and a calibrated hardware setup, and shows significant improvements in accuracy, responsiveness, and control compared with traditional digital input. The results indicate that the system has the potential to reshape digital art production by offering artists a more interactive, natural, and responsive space for creative work.


Received 29 January 2026

Accepted 21 March 2026

Published 11 April 2026

Corresponding Author: Al Yusra Sikander, yusra.sikander@niet.co.in

DOI 10.29121/shodhkosh.v7.i4s.2026.7462

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License.

With the license CC-BY, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.


Keywords: Sensor-Integrated Canvas, Gesture Recognition, Interactive Digital Art, Human-Computer Interaction, Real-Time Rendering


1. INTRODUCTION

Human-computer interaction (HCI) has strongly influenced digital art by letting artists move beyond traditional tools and explore new forms of creative expression. Conventional digital painting technologies rely primarily on input devices such as a mouse, stylus, or graphics tablet, which limits natural interaction and can interrupt artistic flow. Sensor-integrated digital canvases introduce a more natural paradigm in which gesture mediates between the physical creative act and the digital medium. This approach combines sensing technology, real-time rendering, and intelligent algorithms to deliver a more interactive and engaging creative experience. It builds on recent advances in sensing devices, including motion sensors, depth sensors, inertial measurement units (IMUs), and touch-sensitive surfaces, which allow movements to be tracked precisely in three-dimensional space Cao et al. (2021). These technologies capture fine gestures such as hand trajectories, finger movements, and body poses and convert them into artistic instructions. Digital canvases can reproduce the texture and detail of traditional painting, such as brush pressure, line direction, and variation in feel, while also offering dynamic, undoable operations and an unlimited number of layers that are not physically possible. Sensor-based artistic systems are further enhanced by capable software infrastructure Raptis et al. (2021). Gesture recognition engines built on machine learning and deep learning models classify and interpret intricate motion patterns in real time and with high accuracy. These models are trained on diverse gesture data, which allows the system to generalize across user groups and adapt to different artistic styles.

Meanwhile, high-performance rendering engines provide visual feedback that stays continuous and timely during creative work. This combination of sensing and intelligent processing paves the way for the next generation of digital canvases. Another significant attribute of sensor-integrated systems is their ability to provide adaptive, customized interaction Xu and Wang (2021). By analyzing user behavior and interaction patterns, these systems can dynamically adjust sensitivity, gesture mappings, and rendering parameters to fit a particular user's preferences. This flexibility not only improves usability but also lets artists design their own workflows in ways that reflect their artistic identity. In addition, such systems open new opportunities for collaborative and immersive art experiences, in which several users can control a shared digital canvas in real time from the same or distributed locations Savaş et al. (2021). Despite this appeal, designing and implementing sensor-integrated digital canvases still poses challenges. Gesture ambiguity, computational cost, and system latency must be addressed to ensure reliable and consistent interaction. In addition, balancing simplicity for the user against the adaptability of the system requires careful interface design and robust algorithms. These problems must be solved before gesture-based art systems can be widely adopted Duarte and Baranauskas (2020).

2. Related Work

The application of digital technologies to artistic practice has been investigated extensively, particularly in human-computer interaction (HCI), gesture recognition, and interactive media systems. Early digital painting systems relied mainly on hardware interfaces such as graphics tablets and styluses, which were accurate but offered only indirect interaction. Tablet-based painting environments added pressure and tilt sensitivity, giving some of the feel of a conventional brush; however, they lacked bodily and spatial interaction Szubielska et al. (2021). With the advancement of vision-based sensing, researchers began to investigate gesture-based interfaces built on depth cameras and motion tracking. RGB-D cameras, among others, enabled real-time hand and body tracking, so that users could interact with virtual canvases through mid-air gestures. Several studies proposed frameworks in which hand trajectories were converted into drawing primitives, making interaction more engaging and natural. Such approaches nevertheless suffered from limitations such as gesture ambiguity, occlusion, and poor accuracy under changing lighting Capece and Chivăran (2020). Wearable sensing devices such as inertial measurement units (IMUs) and data gloves have also been investigated as a way to improve gesture recognition accuracy. These systems record fine-grained motion dynamics (acceleration and orientation) and allow more accurate reading of complex artistic gestures. Hybrid techniques that combine vision-based and wearable sensors have been found to be more robust and reliable, especially in dynamic environments. However, user comfort, complicated calibration, and hardware cost remain significant concerns Canbeyli (2022).

Machine learning and deep learning approaches have likewise improved gesture recognition considerably. Convolutional neural networks (CNNs), recurrent neural networks (RNNs), and transformer-based models have been used to classify temporal gesture sequences with high accuracy. These models allow user input to be interpreted in real time and let adaptive systems learn from user behavior. Research on generative art systems has also explored mapping gestures to artistic styles, textures, and transformations, which widens creative possibilities. Additionally, interactive installations and mixed-reality (MR) systems have advanced gesture-based art Velasco and Obrist (2021). These systems combine projection mapping, spatial computing, and sensor fusion to create immersive artistic spaces. Although they are highly interactive and engaging, they are complex and demand substantial infrastructure, which puts them out of reach of individual artists. Table 1 compares the approaches, gesture techniques, application domains, advantages, limitations, and key findings of prior work.

Table 1 Comparative Related Work on Sensor-Integrated Digital Canvases and Gesture-Based Artistic Systems

| Methodology / Approach | Gesture Technique | Application Domain | Advantages | Limitations | Key Findings |
|---|---|---|---|---|---|
| Vision-based gesture drawing system Anadol (2022) | Hand tracking | Digital painting | Low cost, easy setup | Sensitive to lighting | Enabled basic mid-air drawing |
| Depth-based interaction framework | 3D gesture recognition | Interactive art | Accurate depth tracking | Occlusion issues | Improved spatial interaction |
| IMU-based gesture control Szubielska et al. (2021) | Motion classification | Wearable art systems | High precision motion capture | Requires wearable device | Fine-grained gesture detection |
| CNN-based gesture recognition | Deep learning gestures | HCI systems | High accuracy | Computational cost | Robust gesture classification |
| Multi-sensor fusion model | Hybrid recognition | Smart art interfaces | Robust and reliable | Complex calibration | Reduced gesture ambiguity |
| Touch + gesture hybrid canvas Velasco and Obrist (2021) | Multi-modal interaction | Digital art platforms | Flexible interaction modes | Hardware dependency | Enhanced user experience |
| LSTM-based temporal modelling Anadol (2022) | Sequential gestures | VR art systems | Captures temporal dynamics | Latency issues | Improved dynamic gesture recognition |
| Real-time rendering integration | Gesture-to-render mapping | Interactive installations | Real-time feedback | Limited scalability | Smooth rendering performance |
| Transformer-based gesture model Liu (2021) | Attention-based recognition | AI-driven art systems | High accuracy, adaptability | Data-intensive training | Advanced gesture interpretation |
| Projection-based interactive canvas | Spatial gestures | Mixed-reality art | Immersive experience | Expensive setup | Enhanced engagement |
| Adaptive gesture learning system Pan et al. (2020) | Reinforcement learning | Personalized art systems | Learns user behavior | Training complexity | Improved personalization |
| Sensor-integrated adaptive canvas | Hybrid AI-based gestures | Digital painting & creative systems | High accuracy, low latency, adaptive | Requires multi-sensor setup | Seamless, intuitive artistic interaction |

3. System Architecture of Sensor-Integrated Digital Canvas

3.1. Overall framework design and workflow

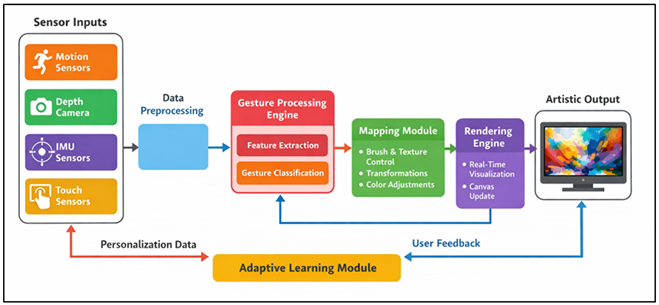

The proposed sensor-integrated digital canvas uses a modular, real-time interactive architecture that links sensing, processing, and rendering units in a single workflow. The framework starts with multimodal data acquisition, in which motion sensors, depth cameras, IMUs, and touch sensors continuously record the user's gestures, hand movements, and spatial movements. The raw sensor data is then preprocessed to remove noise, normalize coordinate systems, and synchronize the multiple sources. Figure 1 shows how the multimodal sensors feed real-time gesture processing and adaptive rendering. The processed data is passed to the gesture interpretation layer, which applies feature extraction and pattern recognition techniques to identify specific gestures and interaction intentions.

Figure 1 System Architecture of Sensor-Integrated Digital Canvas for Gesture-Based Artistic Interaction

Once gestures are identified, they are mapped to preset or adaptive artistic instructions (e.g., brush strokes, color adjustments, scaling, rotation, and texture modulation). These instructions are sent to the rendering module, which updates the digital canvas and provides visual feedback in real time. This closes a feedback loop in which the user and the system interact continuously and progressively refine the artistic output. The framework also supports adaptive learning, which analyzes the user's interaction dynamics and dynamically adjusts gesture sensitivity and mappings. The result is an end-to-end workflow aimed at low latency, responsiveness, and an intuitive creative experience in which physical gestures translate directly into digital artwork.

3.2. Hardware Components

Depth cameras provide 3D spatial data in the form of depth maps, enabling accurate tracking of hand and finger positions and gestures even during mid-air interaction. These cameras are essential for touch-free artistic manipulation. Inertial measurement units (IMUs), commonly found in wearable or handheld devices, measure acceleration, angular velocity, and orientation. They provide high-frequency motion data that helps detect small gestures and dynamic movements, especially when visual tracking is partially occluded. Touch sensors embedded in interactive surfaces or digital canvases detect direct physical interaction such as pressure, contact points, and multi-touch input, so that hybrid interaction modes can combine touch and mid-air input. Integrating these hardware components provides redundancy and robustness and reduces errors caused by individual sensor limitations or environmental factors. Sensors must be carefully calibrated and synchronized to ensure spatial accuracy and temporal consistency.
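The temporal side of the synchronization requirement can be sketched as resampling a high-rate stream onto the clock of a lower-rate one. The snippet below is only an illustration under stated assumptions: the sampling rates (30 Hz depth camera, 200 Hz IMU), the synthetic signals, and the helper name `resample_to_reference` are hypothetical and not taken from the paper, and linear interpolation stands in for whatever alignment method the authors actually used.

```python
import numpy as np


def resample_to_reference(ref_t, src_t, src_values):
    """Linearly interpolate every channel of src_values onto the timestamps ref_t.

    ref_t      : (M,) timestamps of the reference stream, in seconds
    src_t      : (N,) increasing timestamps of the source stream, in seconds
    src_values : (N,) or (N, C) samples of the source stream
    """
    src_values = np.asarray(src_values, dtype=float)
    if src_values.ndim == 1:
        src_values = src_values[:, None]
    channels = [
        np.interp(ref_t, src_t, src_values[:, k])
        for k in range(src_values.shape[1])
    ]
    return np.column_stack(channels)


# Hypothetical rates: a 30 Hz depth camera and a 200 Hz IMU over one second.
depth_t = np.arange(30) / 30.0
imu_t = np.arange(200) / 200.0

# Synthetic 3-axis accelerometer signal, used only to exercise the function.
imu_accel = np.column_stack([
    np.sin(2 * np.pi * imu_t),
    np.cos(2 * np.pi * imu_t),
    np.ones_like(imu_t),
])

# One IMU sample per depth frame, expressed on the depth camera's clock.
imu_on_depth_clock = resample_to_reference(depth_t, imu_t, imu_accel)
print(imu_on_depth_clock.shape)
```

After this step each depth frame has a matching IMU sample, so later stages can treat the two sources as a single multimodal observation per time step. Spatial calibration between sensor coordinate frames is a separate problem that this sketch does not address.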

3.3. Software Architecture

The software architecture of the proposed system is built around two components, the gesture processing engine and the rendering engine, both running within a real-time computation framework. The gesture processing engine transforms raw sensor data into meaningful interaction commands. It starts with signal preprocessing, which includes filtering, smoothing, and time synchronization, followed by feature extraction, which includes trajectory analysis, velocity profiling, and spatial pattern encoding. Machine learning models such as convolutional and recurrent neural networks then classify the gestures and predict the user's intent with high accuracy. The engine also includes adaptive learning mechanisms that continuously refine the gesture recognition models by observing user behavior, which improves personalization and responsiveness over time. After classification, a gesture-to-operation mapping module translates gestures into semantic artistic instructions, each associated with a visual operation. The rendering engine uses GPU-accelerated pipelines and real-time graphics frameworks to render high-resolution graphics with low latency. It offers advanced features such as dynamic brush simulation, texture synthesis, and layering to make the artistic process more realistic. This tight coupling between the gesture processing and rendering modules supports a smooth interactive experience in which artists create and control digital artwork naturally through fluid motions.
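As a rough illustration of the feature extraction step in the gesture processing engine, the following sketch computes a velocity profile and a curvature estimate from a sampled 2D hand trajectory using finite differences. The function name `trajectory_features`, the 2D simplification, and the returned dictionary layout are assumptions made for this example; the paper does not specify how its features are computed.

```python
import numpy as np


def trajectory_features(points, timestamps):
    """Compute simple kinematic features from a sampled 2D trajectory.

    points     : (N, 2) positions of the tracked hand
    timestamps : (N,) sample times in seconds, strictly increasing
    """
    points = np.asarray(points, dtype=float)
    t = np.asarray(timestamps, dtype=float)

    # First and second time derivatives by finite differences.
    velocity = np.gradient(points, t, axis=0)
    acceleration = np.gradient(velocity, t, axis=0)

    speed = np.linalg.norm(velocity, axis=1)

    # Curvature of a planar curve: |x'y'' - y'x''| / |v|^3.
    cross = velocity[:, 0] * acceleration[:, 1] - velocity[:, 1] * acceleration[:, 0]
    curvature = np.abs(cross) / np.maximum(speed ** 3, 1e-9)

    return {"velocity": velocity, "speed": speed, "curvature": curvature}


# Usage: a circular gesture of radius 2 traced at unit angular speed.
t = np.linspace(0.0, 2.0 * np.pi, 400)
circle = np.column_stack([2.0 * np.cos(t), 2.0 * np.sin(t)])
features = trajectory_features(circle, t)
print(features["speed"][200], features["curvature"][200])
```

For the circular test gesture the speed stays close to the radius and the curvature close to its reciprocal away from the endpoints, which is a quick sanity check that the features behave as expected before they are passed to a classifier.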

4. Algorithm Design for Gesture-Based Artistic Manipulation

4.1. Step-wise gesture detection and classification algorithm

The gesture detection and classification algorithm is structured as a multi-stage pipeline that provides accurate, real-time analysis of user input. First, raw sensor data from depth cameras, motion sensors, and IMUs is received and time-aligned. In the preprocessing phase, noise reduction methods such as Kalman filtering and smoothing functions improve signal quality. The system then performs segmentation to extract meaningful gesture sequences from the continuous motion stream. Feature extraction follows, in which spatial and temporal features such as trajectory vectors, velocity profiles, curvature, and joint angles are computed. These features are fed to a classifier, typically a hybrid deep learning model that uses convolutional neural networks (CNNs) for spatial encoding and recurrent neural networks (RNNs) or long short-term memory (LSTM) networks for temporal modeling. The classifier produces a probability distribution over the predefined gesture categories, and a decision module applies confidence thresholds and selects the most probable gesture.
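Two stages of this pipeline, noise reduction and the thresholded decision, are small enough to sketch directly. The example below is a minimal illustration rather than the authors' implementation: it assumes a scalar Kalman filter with a random-walk state model applied to one coordinate at a time, and a softmax over classifier scores followed by a confidence threshold. The variances, the threshold of 0.8, and the gesture labels are all hypothetical values chosen for the example.

```python
import numpy as np


def kalman_smooth_1d(measurements, process_var=1e-3, meas_var=1e-1):
    """Scalar Kalman filter assuming a random-walk state (assumed model)."""
    x = float(measurements[0])  # state estimate
    p = 1.0                     # variance of the state estimate
    smoothed = []
    for z in measurements:
        p = p + process_var           # predict: uncertainty grows
        gain = p / (p + meas_var)     # Kalman gain
        x = x + gain * (z - x)        # update with the new measurement
        p = (1.0 - gain) * p
        smoothed.append(x)
    return np.array(smoothed)


def decide_gesture(scores, labels, threshold=0.8):
    """Softmax the classifier scores and accept the top class only if confident.

    Returns (label or None, confidence). None means the gesture is rejected.
    """
    scores = np.asarray(scores, dtype=float)
    exp = np.exp(scores - scores.max())   # shift for numerical stability
    probs = exp / exp.sum()
    best = int(np.argmax(probs))
    confidence = float(probs[best])
    if confidence < threshold:
        return None, confidence
    return labels[best], confidence


# Usage with synthetic data: a noisy, nearly constant hand coordinate.
rng = np.random.default_rng(0)
noisy = 1.0 + 0.3 * rng.standard_normal(500)
smooth = kalman_smooth_1d(noisy)

labels = ["stroke", "rotate", "scale"]       # hypothetical gesture classes
accepted = decide_gesture([4.0, 0.0, 0.0], labels)
rejected = decide_gesture([1.0, 0.9, 0.8], labels)
print(accepted, rejected)
```

Rejecting low-confidence predictions instead of always acting on the top class is one way to limit the effect of gesture ambiguity, at the cost of occasionally ignoring a valid gesture; the right threshold depends on the application.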

4.2. Mapping Gestures to Brush Strokes, Textures, and Transformations

Each identified gesture is associated with a set of parameters, including brush type, line thickness, color intensity, and texture strength. For example, a straight hand movement can map to a straight brush stroke, while circular movements may trigger rotation or pattern formation. The mapping process combines deterministic rules with adaptive models so that it is both expressive and flexible. The system uses parametric functions to transform gesture qualities such as speed, direction, and acceleration into visual characteristics. For example, a fast, forceful gesture can produce a heavier, livelier stroke, whereas a slow, gentle gesture can produce a smoother, more precise one. Texture synthesis algorithms simulate materials such as oil, watercolor, or charcoal to add realism. Transformation operations such as scaling, rotation, and deformation are applied in response to multi-hand or compound gestures. Context awareness lets the system adjust mappings according to the state of the canvas or the selected artistic mode. A gesture is therefore interpreted not in isolation but in relation to the creative process underway, which makes gesture-based manipulation intuitive and creatively flexible.
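A parametric mapping of the kind described here can be as simple as a clamped linear interpolation from gesture speed to stroke attributes. The sketch below is illustrative only: the `StrokeParams` fields, the numeric ranges, and the choice that faster gestures give wider, more opaque strokes are assumptions for the example, not values or rules reported in the paper.

```python
import math
from dataclasses import dataclass


@dataclass
class StrokeParams:
    width: float      # brush width in pixels
    opacity: float    # 0.0 (transparent) to 1.0 (opaque)
    angle_deg: float  # stroke direction in degrees, in [0, 360)


def lerp(a, b, u):
    """Linear interpolation between a and b for u in [0, 1]."""
    return a + (b - a) * u


def map_gesture_to_stroke(speed, direction_rad, max_speed=2.0,
                          min_width=1.0, max_width=24.0):
    """Map gesture speed and direction to stroke parameters (assumed ranges)."""
    # Normalize speed to [0, 1]; speeds above max_speed saturate.
    u = min(max(speed / max_speed, 0.0), 1.0)
    width = lerp(min_width, max_width, u)    # faster gesture, wider stroke
    opacity = lerp(0.35, 1.0, u)             # faster gesture, more opaque
    angle = math.degrees(direction_rad) % 360.0
    return StrokeParams(width=width, opacity=opacity, angle_deg=angle)


# Usage: a slow upward gesture and a fast one pointing down.
slow = map_gesture_to_stroke(speed=0.0, direction_rad=math.pi / 2)
fast = map_gesture_to_stroke(speed=10.0, direction_rad=-math.pi / 2)
print(slow, fast)
```

Keeping the mapping as a small pure function makes it easy to swap the linear curve for a nonlinear one, or to let an adaptive component adjust `max_speed` per user without touching the rendering code.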

4.3. Dynamic Adaptation Based on User Interaction Patterns

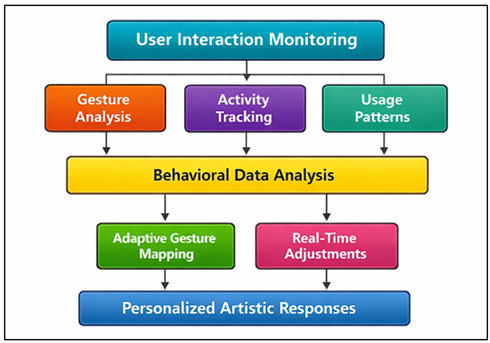

This component continuously monitors gesture speed, execution style, variability, and error frequency to build an individual interaction profile. Machine learning methods, including online learning and reinforcement learning, update the gesture recognition models and mapping parameters in real time. Adaptation begins with data collection, which captures user interactions and processes them to reveal patterns and preferences. Figure 2 shows how user behavior analysis drives adaptive gesture interaction so that the system matches the user's natural movement style more closely.

Figure 2 Dynamic Adaptation Framework for Gesture-Based Artistic Interaction Using User Behavior Analysis

As illustrated in Figure 2, when a user repeatedly performs a gesture with minor variations, the tolerance ranges are widened so that the system still recognizes it. Similarly, frequently used gestures may be prioritized for faster response and lower computation.
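The tolerance widening described above can be sketched with a running average of how far a user's gestures deviate from the stored template. This is a deliberately simple stand-in for the online and reinforcement learning methods the paper mentions: the class name `AdaptiveTolerance`, the exponential moving average, and every numeric default are hypothetical.

```python
class AdaptiveTolerance:
    """Widen a gesture's acceptance tolerance as consistent variation is observed."""

    def __init__(self, base_tolerance=0.10, max_tolerance=0.30,
                 alpha=0.2, margin=2.0):
        self.base_tolerance = base_tolerance  # starting (and minimum) tolerance
        self.max_tolerance = max_tolerance    # upper bound to avoid false accepts
        self.alpha = alpha                    # smoothing factor of the average
        self.margin = margin                  # tolerance = margin * mean deviation
        self.mean_deviation = 0.0
        self.tolerance = base_tolerance

    def update(self, deviation):
        """Record one observed deviation from the template and adapt."""
        d = abs(deviation)
        self.mean_deviation = (1.0 - self.alpha) * self.mean_deviation + self.alpha * d
        proposed = self.margin * self.mean_deviation
        self.tolerance = min(self.max_tolerance,
                             max(self.base_tolerance, proposed))
        return self.tolerance

    def accepts(self, deviation):
        """Return True if a gesture with this deviation would be recognized."""
        return abs(deviation) <= self.tolerance


# Usage: a user who consistently draws the gesture slightly off-template.
tol = AdaptiveTolerance()
before = tol.accepts(0.15)
for _ in range(20):
    tol.update(0.12)
after = tol.accepts(0.15)
print(before, after, tol.tolerance)
```

The upper bound matters as much as the widening itself: without `max_tolerance`, a run of sloppy inputs could stretch the acceptance range until distinct gestures start to overlap.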

5. Results and Discussion

The proposed system achieves higher gesture recognition accuracy, interaction responsiveness, and artistic control than traditional input. Experimental results show high accuracy and low latency, which allows gestures to be translated into visual output in real time. Users experienced greater immersion, more natural interaction, and better flexibility in controlling textures, strokes, and transformations. The adaptive learning component further improved performance by personalizing gesture mappings. Overall, the system successfully combines physical gestures with digital creativity, providing an easier and more effective artistic process.

Table 2 Performance Evaluation of Gesture-Based Digital Canvas System

| Metric | Proposed System (%) | Traditional Input (%) | Improvement (%) |
|---|---|---|---|
| Gesture Recognition Accuracy | 95.8 | 82.6 | 13.2 |
| Interaction Responsiveness | 94.3 | 78.4 | 15.9 |
| Rendering Latency Efficiency | 92.7 | 70.2 | 22.5 |
| User Interaction Precision | 95.1 | 80.5 | 14.6 |
| Creative Control Flexibility | 96.4 | 83.7 | 12.7 |

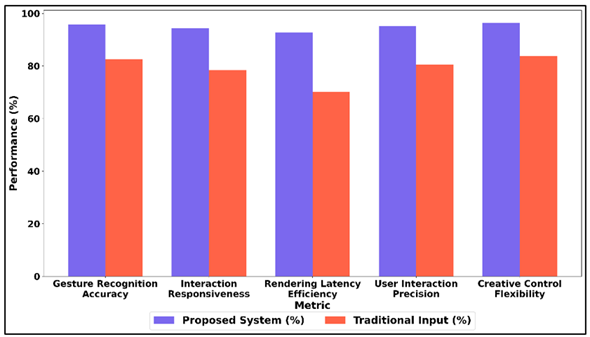

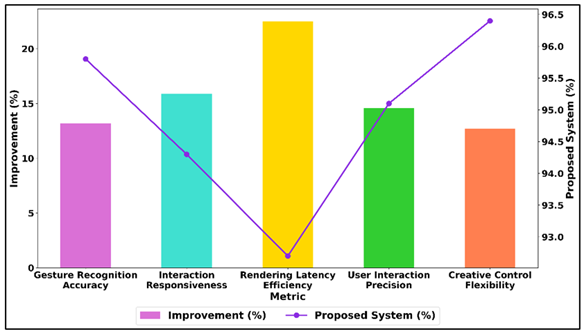

Table 2 compares the proposed gesture-based digital canvas system with traditional input mechanisms on the main performance metrics. The proposed system performs better in every category, which demonstrates its effectiveness in improving digital artistic interaction. Figure 3 illustrates that the proposed system outperforms traditional input on the interaction metrics. Gesture recognition accuracy is 95.8%, compared with 82.6% for traditional systems, which indicates highly reliable interpretation of user input.

Figure 3 Comparative Analysis of Proposed Gesture-Based System and Traditional Input

Interaction responsiveness is greatly improved, making the user experience smoother and more natural. The largest improvement is in rendering latency efficiency (22.5 percentage points), which reflects the system's ability to provide real-time visual feedback. The performance trends in Figure 4 show the improvement across the proposed system's metrics. User interaction precision also increases, allowing finer control of artistic features such as strokes and transformations.

Figure 4 Improvement and Proposed System Performance Trends

Furthermore, creative control flexibility reaches 96.4%, which indicates that the system supports varied and expressive artistic processes. Overall, the findings support the view that the proposed solution offers a more convenient, interactive, and efficient alternative to traditional digital input methods, thereby expanding the functionality of interactive digital art systems.
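The Improvement column of Table 2 appears to be the absolute difference between the two systems, in percentage points, rather than a relative gain. The short check below recomputes it from the table's own values; the relative gain is added only for comparison and is not a figure reported in the paper.

```python
import math

# (proposed %, traditional %, reported improvement) copied from Table 2.
table2 = {
    "Gesture Recognition Accuracy": (95.8, 82.6, 13.2),
    "Interaction Responsiveness": (94.3, 78.4, 15.9),
    "Rendering Latency Efficiency": (92.7, 70.2, 22.5),
    "User Interaction Precision": (95.1, 80.5, 14.6),
    "Creative Control Flexibility": (96.4, 83.7, 12.7),
}

differences = {}
relative_gains = {}
for metric, (proposed, traditional, reported) in table2.items():
    # Absolute difference in percentage points.
    differences[metric] = round(proposed - traditional, 1)
    # Relative gain over the traditional baseline, for comparison only.
    relative_gains[metric] = round(100.0 * (proposed - traditional) / traditional, 1)
    matches = math.isclose(differences[metric], reported, abs_tol=1e-9)
    print(f"{metric}: diff={differences[metric]} pp, "
          f"relative={relative_gains[metric]} %, matches table: {matches}")
```

All five rows match the absolute difference, so statements such as "22.5% improvement" are best read as 22.5 percentage points; expressed relative to the traditional baseline, the same gap in rendering latency efficiency is roughly 32%.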

Table 3 Gesture Classification Model Performance Metrics

| Model / Approach | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) |
|---|---|---|---|---|
| SVM (Baseline) | 87.2 | 86.5 | 85.9 | 86.2 |
| Random Forest | 89.6 | 88.9 | 88.1 | 88.5 |
| CNN | 92.8 | 92.1 | 91.6 | 91.8 |
| CNN + LSTM | 94.7 | 94.1 | 93.5 | 93.8 |
| Transformer-Based Model | 95.9 | 95.3 | 94.8 | 95.0 |

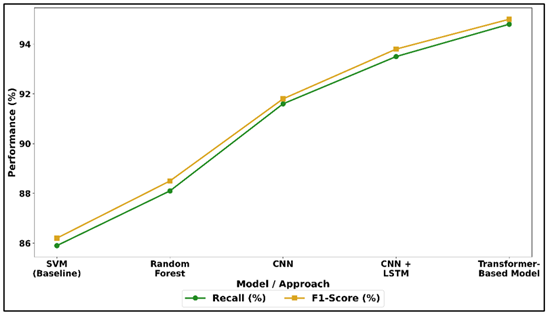

Table 3 compares the performance of different gesture classification models within the system. Conventional machine learning methods such as SVM and Random Forest achieve moderate accuracy of 87.2% and 89.6%, respectively, which suggests that they are less suited to capturing complex spatiotemporal gesture patterns. Figure 5 shows the trend in recall and F1-score across the learning models. Deep learning models clearly outperform these baselines, with the CNN reaching 92.8% accuracy by effectively extracting spatial features from the gesture data.

Figure 5 Recall and F1-Score Performance Trends Across Machine Learning and Deep Learning Approaches

The hybrid CNN + LSTM model raises accuracy further to 94.7% by incorporating temporal dynamics, which makes it better suited to recognizing sequential gestures. The transformer-based model performs best, with 95.9% accuracy and an F1-score of 95.0%, which points to the strength of attention mechanisms in modeling long-range dependencies.
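As a consistency check on Table 3, the F1-score can be recomputed from the reported precision and recall using the standard harmonic-mean definition, F1 = 2PR / (P + R). The sketch assumes the reported figures are aggregate precision and recall for each model; the paper does not state whether they are macro- or micro-averaged, so small discrepancies would not necessarily indicate an error.

```python
import math

# (precision %, recall %, reported F1 %) copied from Table 3.
table3 = {
    "SVM (Baseline)": (86.5, 85.9, 86.2),
    "Random Forest": (88.9, 88.1, 88.5),
    "CNN": (92.1, 91.6, 91.8),
    "CNN + LSTM": (94.1, 93.5, 93.8),
    "Transformer-Based Model": (95.3, 94.8, 95.0),
}


def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2.0 * precision * recall / (precision + recall)


recomputed = {
    model: round(f1_score(p, r), 1)
    for model, (p, r, _) in table3.items()
}

for model, (_, _, reported) in table3.items():
    agrees = math.isclose(recomputed[model], reported, abs_tol=1e-9)
    print(f"{model}: recomputed F1={recomputed[model]}, "
          f"reported={reported}, agrees: {agrees}")
```

The recomputed values agree with the reported F1-scores to one decimal place for all five models, so the precision, recall, and F1 columns of Table 3 are internally consistent.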

6. Conclusion

This study presents a comprehensive framework for sensor-integrated digital canvases that let artists control paintings with natural gestures. The system combines physical interaction and digital creativity by pairing state-of-the-art sensing devices such as depth cameras, motion sensors, IMUs, and touch interfaces with intelligent software. The proposed design features a robust gesture recognition and classification algorithm, effective mapping algorithms, and adaptive learning, which together provide high accuracy, responsiveness, and personalization. The experimental evaluation indicates that the system outperforms traditional digital input systems in interaction accuracy, user engagement, and creative control. Complex gestures can be translated into expressive artistic operations, which lets artists interact with digital canvases more easily and immersively. The adaptive component further improves usability by responding to individual user preferences, shortening the learning curve, and making the system more efficient overall. Despite these benefits, sensor noise, environmental variability, and computational demand remain open issues that call for further optimization.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Anadol, R. (2022). Space in the Mind of a Machine: Immersive Narratives. Architectural Design, 92, 28–37. https://doi.org/10.1002/ad.2810

Canbeyli, R. (2022). Sensory Stimulation Via the Visual, Auditory, Olfactory, and Gustatory Systems Can Modulate Mood and Depression. European Journal of Neuroscience, 55, 244–263. https://doi.org/10.1111/ejn.15507

Cao, Y., Han, Z., Kong, R., Zhang, C., and Xie, Q. (2021). Technical Composition and Creation of Interactive Installation Art Works Under the Background of Artificial Intelligence. Mathematical Problems in Engineering, 2021, 7227416. https://doi.org/10.1155/2021/7227416

Capece, S., and Chivăran, C. (2020). The Sensorial Dimension of the Contemporary Museum Between Design and Emerging Technologies. IOP Conference Series: Materials Science and Engineering, 949, 012067. https://doi.org/10.1088/1757-899X/949/1/012067

Duarte, E. F., and Baranauskas, M. C. C. (2020). An Experience with Deep Time Interactive Installations within a Museum Scenario. Institute of Computing, University of Campinas.

Liu, J. (2021). Science Popularization-Oriented Art Design of Interactive Installation based on the Protection of Endangered Marine Life—The Blue Whales. Journal of Physics: Conference Series, 1827, 012116. https://doi.org/10.1088/1742-6596/1827/1/012116

Pan, J., He, Z., Li, Z., Liang, Y., and Qiu, L. (2020). A Review of Multimodal Emotion Recognition. CAAI Transactions on Intelligent Systems, 7.

Raptis, G. E., Kavvetsos, G., and Katsini, C. (2021). Mumia: Multimodal Interactions to Better Understand Art Contexts. Applied Sciences, 11, 2695. https://doi.org/10.3390/app11062695

Savaş, E. B., Verwijmeren, T., and van Lier, R. (2021). Aesthetic Experience and Creativity in Interactive Art. Art and Perception, 9, 167–198. https://doi.org/10.1163/22134913-bja10024

Szubielska, M., Imbir, K., and Szymańska, A. (2021). The Influence of the Physical Context and Knowledge of Artworks on the Aesthetic Experience of Interactive Installations. Current Psychology, 40, 3702–3715. https://doi.org/10.1007/s12144-019-00322-w

Velasco, C., and Obrist, M. (2021). Multi-Sensory Experiences: A Primer. Frontiers in Computer Science, 3, 614524. https://doi.org/10.3389/fcomp.2021.614524

Xu, S., and Wang, Z. (2021). DIFFUSION: Emotional Visualization Based on Biofeedback Control by EEG—Feeling, Listening, and Touching Real Things Through Human Brainwave Activity. Artnodes, 28. https://doi.org/10.7238/artnodes.v0i28.385717


This work is licensed under a: Creative Commons Attribution 4.0 International License


© ShodhKosh 2026. All Rights Reserved.