ShodhKosh: Journal of Visual and Performing Arts
ISSN (Online): 2582-7472

Reinventing Typography Design through Generative AI

Anil Kumar 1, Prajapati Kalpana V. 2, Richa Srivastava 3, Dr. Aditi Lule 4, Gayathri B. 5, Namrata Somnath Bajare 6

1 Department of Computer Engineering, Poornima Institute of Engineering and Technology, Jaipur, Rajasthan, India

2 Assistant Professor, Computer Department, Parul University, Vadodara, Gujarat, India

3 Assistant Professor, School of Business Management, Noida International University, Greater Noida 203201, India

4 Symbiosis School of Planning, Architecture and Design, Nagpur Campus, Symbiosis International (Deemed University), Pune, India

5 Assistant Professor, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600097, India

6 Department of Computer Engineering, Vishwakarma Institute of Technology, Pune, Maharashtra, 411037, India

ABSTRACT

Typography is a foundational component of visual communication that shapes readability, aesthetic expression, and cultural articulation in both print and digital media. Recent developments in generative artificial intelligence have opened new possibilities for redefining typography design beyond traditional, static, manually crafted workflows. In this paper, we describe GenType-AI, a generative architecture that combines deep learning models with typographic design principles to enable automated, adaptive, and style-aware font creation. The paper begins by tracing the development of typography from traditional type foundries through rule-driven digital tools to data-driven creative systems. Although AI-assisted font generation has been studied before, most existing approaches remain confined to individual styles or restricted glyph sets, or do not allow semantic control of typographic features. To overcome these shortcomings, the proposed methodology builds a diverse dataset from structured glyph sets, stylistic font corpora, and handwriting samples in more than one script. GANs, VAEs, and diffusion models are used to learn both the overall structure of fonts and finer style variations. Cross-lingual representation learning and style conditioning allow the synthesis of characters that are consistent across writing systems and alphabets. A training and evaluation pipeline is constructed that combines quantitative indicators of structural similarity and stroke consistency with qualitative analysis by design experts. Experiments show that GenType-AI can generate visually coherent, stylistically diverse, and scalable typefaces suitable for real-world use.

Received 14 September 2025

Accepted 16 December 2025

Published 17 February 2026

Corresponding Author: Anil Kumar, anilkumar@poornima.org

DOI: 10.29121/shodhkosh.v7.i1s.2026.7115

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License.

With the license CC-BY, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.

Keywords: Generative AI, Typography Design, Font Generation, Diffusion Models, Creative AI, Multilingual Typography

1. Introduction

1.1. Background and Evolution of Typography Design

Typography has long been one of the key pillars of visual communication, shaping how information is perceived, interpreted, and recalled. Its origins can be traced to early writing systems and manuscripts, in which letterforms were drawn by hand and were closely tied to cultural and linguistic identity. The invention of movable type brought a major change by standardizing letterforms and enabling mass reproduction, at the cost of skilled hand craftsmanship. Typography then developed gradually alongside changes in printing methods, from metal type to phototypesetting and on to digital font design programs. The digital age introduced vector-based fonts, desktop publishing, and advanced typographic software, giving designers more control over form, spacing, and hierarchy than ever before Feng et al. (2024). Even so, typography design has remained a largely manual, skill-based profession governed by long-established principles such as proportion, balance, contrast, and readability. Design choices are normally based on human perception, aesthetic judgment, and typographic experience. Although digital tools have made the work more efficient, they have not transformed the creative paradigm: designers still make typefaces by refining glyphs and styles through trial and error. As visual communication spreads across screens, devices, and languages, conventional workflows struggle with scalability, personalization, and adaptability. This historical trajectory sets the stage for new computational approaches that can supplement human imagination and respond to the growing need for flexible, data-driven, and context-sensitive typographic systems in contemporary design practice Wang et al. (2023).

1.2. Rise of AI-Driven Creative Processes

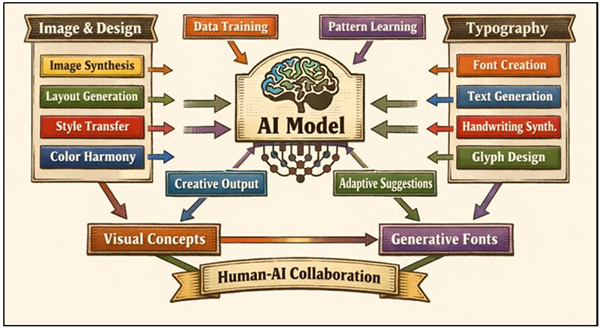

The advent of artificial intelligence has radically changed the creative industries, bringing in data-driven approaches that support, and in some cases transform, creative processes. AI systems are increasingly applied in visual design for image synthesis, layout generation, color harmonization, and style transfer, allowing designers to experiment quickly with creative options. Generative models are trained on large amounts of data and can produce new content that resembles human-made designs while still showing diversity and flexibility. This paradigm shift marks a move from deterministic, rule-based tools to probabilistic, learning-based creative systems Lo (2023). Figure 1 depicts an AI-based creative workflow that combines ideation, creation, evaluation, and refinement.

Figure 1 Conceptual Block Diagram of AI-Based Creative Workflows

Designers no longer use AI only as a software tool; they can also collaborate with it as an agent able to propose alternatives, adapt to constraints, and respond to situational inputs. Early uses of AI in typography focused on automating repetitive tasks such as font interpolation and character recognition. More recently, deep learning techniques have enabled end-to-end font creation, handwriting synthesis, and style-conditioned glyph generation Strobelt et al. (2023).

1.3. Research Gap in AI-Augmented Typography

Although interest in applying artificial intelligence to typography is growing, several research gaps remain. First, most research on AI-based typography addresses a narrow problem domain, such as producing a small repertoire of glyphs or emulating a single typeface. Most solutions do not fully model typographic principles, so their output may look realistic on screen yet lack consistency, readability, or stylistic coherence across large character sets. Second, the majority of systems are trained on monolingual data, which limits their use in multilingual and cross-cultural settings where typography has to adapt to different scripts and structural properties Short and Short (2023). Third, designer control is limited: existing models often behave as black boxes, with few visible means of controlling style, mood, or functional constraints. Fourth, evaluation practices are underdeveloped, and expert-based qualitative assessment is rarely combined with quantitative measurement. Finally, there is little research on practical deployment issues, including scalability, personalization, and dynamic adaptation for branding and digital platforms. As a result, AI-generated typography remains marginal in professional design practice Baidoo-Anu and Owusu Ansah (2023). Closing these gaps calls for frameworks that integrate generative modelling with explicit style conditioning, cross-lingual representation learning, and human-centred evaluation.

2. Literature Review

2.1. Traditional Typographic Principles and Workflows

Traditional typography rests on a dense system of principles that govern the design and structure of letterforms to ensure clarity, aesthetic quality, and effective communication. Proportion, stroke contrast, balance, alignment, kerning, leading, and hierarchy are core principles developed over centuries of typographic practice. Designers usually start from a conceptual intent, that is, the intended mood, purpose, and audience of a typeface, and then draw key glyphs manually to establish the visual identity Banh and Strobel (2023). Structural rules are established using foundational characters, typically H, O, and n, and are then propagated to the entire glyph set. The workflow depends on iterative refinement, visual inspection, and expert judgment to keep weight, curvature, and rhythm consistent. Digital typography tools have partly automated these processes through vector manipulation, parametric controls, and automated hinting, but the underlying procedures are still mostly manual. Design decisions draw on typographic conventions, historical references, and tacit knowledge learned through practice rather than on formalized algorithms. Although these workflows produce high-quality results, they are time-consuming and do not scale easily, especially when many styles, weights, or scripts are required Khorasani et al. (2022).

2.2. Generative Models in Visual Design (GANs, VAEs, Diffusion)

Generative models have become central to current innovation in visual design, providing data-driven ways to create images, patterns, and styles. Generative Adversarial Networks (GANs) introduced a competitive learning paradigm in which a generator and a discriminator are trained together under an adversarial regime to produce visually realistic output. GANs reproduce sharp details well, which has made them popular for image translation, style transfer, and texture synthesis. Diffusion models are a more recent class of generative method, trained to denoise data step by step through a stochastic process Badini et al. (2023). These models have been shown to train stably, achieve high fidelity, and offer fine-grained control over their outputs. In visual design applications, generative models can be used to explore design spaces quickly, with the system suggesting alternatives conditioned on a style, content, or semantic prompt. Because they are probabilistic, they allow variation and inventiveness not found in rule-based systems. Nevertheless, applying these models in design-sensitive areas requires more than adaptability: structure, constraints, and readability must also be taken into account Sikri et al. (2024). In typography and graphic design, generative models have to be visually realistic while remaining strictly guided by geometric and functional requirements Jasche et al. (2023). The growing literature on generative visual models offers a methodological basis for AI-driven typography while also exposing open issues of control, consistency, and domain adaptation.

2.3. Prior AI-Based Font Generation Studies

Early studies of AI-generated fonts were mostly concerned with automation and pattern generation, and frequently used statistical or rule-based techniques to interpolate between existing typefaces. With the emergence of deep learning, researchers began to use neural networks to produce glyphs directly from data, usually treating characters as images. Convolutional models have been trained to synthesize glyphs missing from a font family by learning structural correspondences between characters Jaruga-Rozdolska (2022). GAN-based methods further improved visual realism and were able to produce stylistically consistent glyphs from few examples. Other studies used style transfer methods to project the style of handwriting or other art forms onto fonts. Despite these improvements, most earlier work was carried out under limited conditions, such as single-script alphabets or fixed resolutions. Cross-lingual and multi-script font generation is a relatively recent area of research, mainly because the variety of writing systems and the lack of aligned datasets make it challenging Saka et al. (2023). Table 1 summarizes related work on AI-based generative typography design. Furthermore, much of this research focuses on visual similarity measures and neglects typographic principles such as spatial harmony and readability. Designer interaction is often minimal, which restricts practical use in professional workflows.

Table 1 Summary of Related Work on AI and Generative Typography Design

| AI Technique Used | Typography Focus | Dataset Type | Key Contributions | Limitations |
|---|---|---|---|---|
| CNN + Autoencoder | Font completion | Digital glyph images | Missing glyph synthesis from few samples | Limited stylistic diversity |
| GAN | Artistic font generation | Image-based fonts | High visual realism in glyphs | Poor structural consistency |
| Conditional GAN Rudolph et al. (2023) | Style transfer in fonts | Font image pairs | Style-conditioned glyph generation | Requires paired datasets |
| VAE | Font interpolation | Vector glyph data | Smooth latent-space interpolation | Blurred fine details |
| Neural deformation | Font morphing | Parametric fonts | Interactive font manipulation | Not end-to-end generative |
| GAN | Few-shot font generation | Sparse glyph samples | Reduced data requirement | Generalization issues |
| Style-based GAN Brisco et al. (2023) | Handwriting-to-font | Handwriting samples | Personalized font synthesis | Limited readability evaluation |
| Transformer + CNN | Glyph structure learning | Raster glyphs | Improved stroke modeling | High computational cost |
| Diffusion Model | High-fidelity font synthesis | Large font corpora | Superior visual quality | Slow generation speed |
| Multitask GAN Oppenlaender (2022) | Font family generation | Multi-style fonts | Consistent multi-weight fonts | Script-specific only |
| VAE + GAN | Multilingual font learning | Cross-script glyphs | Early cross-lingual modeling | Weak style alignment |
| Diffusion + CLIP Li et al. (2023) | Text-guided typography | Prompt-based fonts | Semantic control via prompts | Limited typographic rules |
| GAN + VAE + Diffusion | End-to-end typography design | Glyphs + styles + handwriting | Style-conditioned, cross-lingual, scalable fonts | Requires expert-in-the-loop validation |

3. Methodology

3.1. Dataset Creation: Glyph Sets, Style Corpora, Handwriting Samples

The success of AI-based typography generation depends largely on the quality and variety of the training data. In this study, a composite dataset is built from three complementary sources: structured glyph sets, curated style corpora, and handwriting samples. Glyph sets are gathered from existing licensed and open digital typefaces and cover full character sets, including uppercase and lowercase letters, numerals, punctuation marks, and special characters. These glyphs are standardized into uniform vector or raster formats to ensure compatibility across fonts while preserving stroke geometry. Style corpora consist of typefaces classified by a set of attributes, including serif, sans-serif, script, decorative, weight, contrast, and historical influence. This hierarchical labeling supports supervised learning of style variations and conditional generation. Handwriting samples are added to introduce the organic variation and expressiveness that digitally constructed fonts usually lack. These samples are preprocessed to remove noise, standardize scale, and segment the writing into individual characters without disturbing natural stroke dynamics. For the multilingual experiments, more than two scripts are included, and the datasets are carefully annotated to preserve the correspondence between character structures.
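
The scale standardization step described above can be illustrated with a minimal sketch. It assumes glyphs are available as small binary bitmaps (lists of 0/1 rows); the function names, the 8 x 8 canvas, and the nearest-neighbour resampling are illustrative choices, not details reported in the paper.

```python
from typing import List

Bitmap = List[List[int]]  # rows of 0/1 pixels; 1 marks ink (assumed encoding)


def crop_to_ink(bitmap: Bitmap) -> Bitmap:
    """Trim empty rows and columns around the inked region."""
    rows = [r for r, row in enumerate(bitmap) if any(row)]
    cols = [c for c in range(len(bitmap[0])) if any(row[c] for row in bitmap)]
    if not rows:
        return [[0]]  # blank glyph, e.g. a space
    return [row[cols[0]:cols[-1] + 1] for row in bitmap[rows[0]:rows[-1] + 1]]


def normalize_glyph(bitmap: Bitmap, size: int = 8) -> Bitmap:
    """Fit the inked region into a size x size canvas, keeping aspect ratio.

    Uses nearest-neighbour sampling and centres the result on the canvas.
    """
    ink = crop_to_ink(bitmap)
    h, w = len(ink), len(ink[0])
    scale = size / max(h, w)
    new_h = max(1, round(h * scale))
    new_w = max(1, round(w * scale))
    top = (size - new_h) // 2
    left = (size - new_w) // 2
    canvas = [[0] * size for _ in range(size)]
    for y in range(new_h):
        for x in range(new_w):
            src_y = min(h - 1, int(y / scale))
            src_x = min(w - 1, int(x / scale))
            canvas[top + y][left + x] = ink[src_y][src_x]
    return canvas
```

A production pipeline would more likely operate on vector outlines or anti-aliased rasters; the sketch only shows the idea of bringing every glyph to a common scale and position before training.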

3.2. Model Architecture: GAN/VAE/Diffusion-Based Typography Generator

The proposed typography generator uses a hybrid model that combines Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), and diffusion-based models to balance realism, control, and diversity. The GAN components use adversarial learning to sharpen the generated glyphs and enforce stylistic consistency. The generator learns to synthesize characters that are consistent with real-world fonts, while the discriminator assesses structural and stylistic consistency. The VAEs learn a structured latent space that represents high-level typographic features such as weight, curvature, and contrast. This latent representation allows smooth interpolation between styles and controlled manipulation of design parameters. Diffusion models are applied for their stability and their ability to produce fine detail, especially in complicated or decorative glyphs. Their iterative refinement from noise to a structured character gives fine control over stroke refinement. Style conditioning modules take in embeddings of font type, reference glyphs, or text instructions, which steer generation toward the preferred aesthetics. For multilingual synthesis, cross-script encoders are trained to learn representations shared across scripts, while script-specific decoders preserve the structural conventions of each script. This modular design supports scalability and flexibility, so that a single system can produce full font families with a shared identity. By combining the complementary capabilities of GANs, VAEs, and diffusion models, the architecture addresses the major challenges of typography generation: visual fidelity, stylistic coherence, and controllability.
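
To make the idea of latent-space interpolation concrete, the following sketch blends two style vectors linearly. It assumes, purely for illustration, that latent dimensions carry readable names such as weight, curvature, and contrast; in a trained VAE the dimensions are learned and generally not labelled, and the paper does not specify the interpolation scheme.

```python
from typing import Dict, List

StyleVector = Dict[str, float]  # hypothetical named latent dimensions


def interpolate_styles(a: StyleVector, b: StyleVector, t: float) -> StyleVector:
    """Linearly blend two style vectors; t=0 returns a, t=1 returns b."""
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must lie in [0, 1]")
    if a.keys() != b.keys():
        raise ValueError("style vectors must share the same attributes")
    return {k: (1.0 - t) * a[k] + t * b[k] for k in a}


def style_family(a: StyleVector, b: StyleVector, steps: int) -> List[StyleVector]:
    """Return `steps` evenly spaced styles from a to b (steps >= 2)."""
    return [interpolate_styles(a, b, i / (steps - 1)) for i in range(steps)]


# Illustrative values only; they are not taken from the paper.
light = {"weight": 0.2, "curvature": 0.8, "contrast": 0.4}
bold = {"weight": 0.9, "curvature": 0.6, "contrast": 0.7}
family = style_family(light, bold, steps=5)
```

Each intermediate vector would be passed to the decoder to render one member of a font family, which is how a single latent space can yield several weights with a shared identity.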

3.3. Training Protocol, Loss Functions, and Evaluation Pipeline

The training regime is designed to provide stability, convergence, and meaningful stylistic learning across a wide range of typographic data. Models are trained in phases: they are first pretrained on large glyph collections to acquire basic structural features and then fine-tuned on style-specific corpora and handwriting samples. Mini-batch training uses balanced sampling, in which font categories are balanced within each batch, to prevent any one style from dominating. Several loss functions are used to ensure both visual quality and typographic structure. The adversarial loss promotes realism and consistency in the GANs, and the reconstruction loss of the VAEs promotes faithful encoding and decoding of glyph features. A perceptual loss, computed with pretrained vision networks, measures high-level visual similarity rather than pixel-wise similarity. Additional regularization terms encourage stroke continuity, symmetry, and consistent spacing. In the diffusion models, a denoising loss drives a sequence of refinement steps that turn noisy images into glyphs. Evaluation follows a multi-level pipeline that integrates quantitative and qualitative analysis. Quantitative measures are structural similarity, stroke overlap consistency, and diversity scores across generated styles. Qualitative analysis is carried out by design experts.
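
The balanced sampling step can be sketched as follows. This is one plausible reading of category-balanced mini-batches, assuming each sample is a `(glyph_id, font_category)` pair; the names and the round-robin scheme are illustrative assumptions rather than the authors' implementation.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Sample = Tuple[str, str]  # (glyph_id, font_category); hypothetical labels


def balanced_batch(samples: Sequence[Sample], batch_size: int,
                   rng: random.Random) -> List[Sample]:
    """Draw a mini-batch with near-equal counts per font category."""
    by_category: Dict[str, List[Sample]] = defaultdict(list)
    for sample in samples:
        by_category[sample[1]].append(sample)
    categories = sorted(by_category)
    batch: List[Sample] = []
    for i in range(batch_size):
        # Cycle through categories, then pick uniformly within each one.
        # Sampling is with replacement, so rare categories are oversampled.
        category = categories[i % len(categories)]
        batch.append(rng.choice(by_category[category]))
    return batch
```

With 90 sans-serif and 10 script samples, a batch of 8 drawn this way contains 4 of each category, whereas uniform sampling would be dominated by sans-serif glyphs.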

4. Proposed Framework: GenType-AI

4.1. System Architecture and Data Representation

The proposed GenType-AI framework is structured as a modular, end-to-end system that incorporates data preprocessing, generative modeling, and designer interaction in a single architecture. The system consists of three layers: data representation, generative intelligence, and application output. In the data representation layer, typographic input is encoded in both raster and vector formats to preserve visual accuracy and structure. Glyphs are standardized by scale, baseline, and stroke thickness, which keeps them consistent across fonts and scripts. Metadata such as font class, font weight, contrast, script type, and stylistic features accompany the visual representations and allow structured learning. The generative intelligence layer combines the GAN, VAE, and diffusion modules, which are aligned in a shared latent space that encodes high-level typographic features. Attention mechanisms are used to model spatial dependencies within glyphs, such as stroke intersections and curvature continuity. The application output layer converts generated glyphs into deployable font formats, scaled font families, and design previews. This structure is flexible and allows GenType-AI to be adapted to varied design needs while maintaining typographic integrity and computational efficiency.
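
One way to picture the data representation layer is as a record that pairs each glyph's raster and vector forms with its metadata. The sketch below is a hypothetical schema: the field names, the weight scale, and the `ink_ratio` helper are assumptions introduced for illustration and are not described in the paper.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class GlyphRecord:
    """One training example pairing a glyph's geometry with its metadata."""
    character: str
    script: str                   # e.g. "Latin"
    font_class: str               # e.g. "serif", "sans-serif"
    weight: int                   # e.g. 400 for regular (assumed scale)
    contrast: float               # thick-to-thin stroke ratio (assumed definition)
    raster: List[List[int]]       # binary bitmap, rows of 0/1
    outline: Optional[List[Tuple[float, float]]] = None  # vector contour points
    style_tags: List[str] = field(default_factory=list)

    def ink_ratio(self) -> float:
        """Fraction of raster pixels that are inked; a crude proxy for weight."""
        total = sum(len(row) for row in self.raster)
        return sum(map(sum, self.raster)) / total if total else 0.0


record = GlyphRecord("I", "Latin", "sans-serif", 400, 1.0,
                     raster=[[0, 1, 0], [0, 1, 0]])
```

Keeping raster, outline, and metadata in one record makes it straightforward to feed the same example to image-based and vector-based model components.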

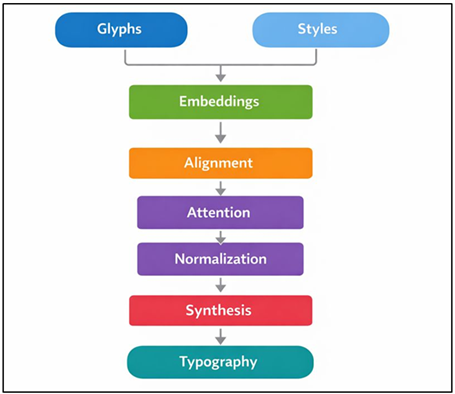

4.2. Style-Conditioned Character Generation

One of the key capabilities of the GenType-AI model is style-conditioned character generation, which allows precise control over the aesthetic features of the generated typography. Styles are conditioned through explicit embeddings that encode typographic features such as the presence of serifs, stroke contrast, curve smoothness, weight, and historical influences. During generation, designers may supply style vectors derived from reference fonts, handwriting samples, or semantic prompts, which direct the synthesis of new characters. Figure 2 illustrates the style-conditioned pipeline, which produces typography with controlled aesthetic and structural consistency. The model ensures that style information is carried consistently across all glyphs, maintaining coherence within a font family.

Figure 2 Style-Conditioned Typography Generation Pipeline

Conditional normalization and attention modulation mechanisms dynamically regulate stroke formation and spacing according to the chosen style. This strategy supports interpolation between styles and allows the development of hybrid typefaces that incorporate several aesthetic influences. Notably, style conditioning is independent of character identity, so that one stylistic intent can be applied to a variety of alphabets or symbol sets.
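
The paper does not give the exact form of its conditional normalization. A common formulation, shown here as an assumption, normalizes a feature vector to zero mean and unit variance and then applies a scale and shift derived from the style embedding. The linear mapping in `affine_from_style` and its weights are hypothetical stand-ins for learned parameters.

```python
import math
from typing import List, Tuple


def affine_from_style(style: List[float], w_gamma: List[float],
                      w_beta: List[float]) -> Tuple[float, float]:
    """Map a style embedding to a (scale, shift) pair with a linear layer.

    The weights stand in for learned parameters and are assumptions.
    """
    gamma = 1.0 + sum(s * w for s, w in zip(style, w_gamma))
    beta = sum(s * w for s, w in zip(style, w_beta))
    return gamma, beta


def conditional_normalize(features: List[float], gamma: float, beta: float,
                          eps: float = 1e-5) -> List[float]:
    """Normalize features, then apply the style-dependent scale and shift."""
    n = len(features)
    mean = sum(features) / n
    var = sum((f - mean) ** 2 for f in features) / n
    std = math.sqrt(var + eps)
    return [gamma * (f - mean) / std + beta for f in features]
```

Because the character's content features are normalized before the style is applied, the same `(gamma, beta)` pair can restyle features from any character, which matches the stated independence of style conditioning from character identity.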

4.3. Cross-Lingual Typography Synthesis

Cross-lingual typography synthesis responds to the growing demand for a consistent visual identity across different writing systems in international communication. A shared encoder maps glyphs from multiple scripts into a common embedding space, so that stylistic characteristics can be transferred across languages. Script-specific decoders reconstruct glyphs according to the orthographic and geometric requirements of each writing system. This design allows one style concept to be expressed in Latin, Indic, or other scripts without redesigning it for each. Alignment strategies associate matching characters or structural elements across scripts and help maintain consistency of weight, contrast, and proportion. The framework also accounts for cultural and functional differences by including script-aware constraints that prevent stylistic distortion. Cross-lingual synthesis supports both the creation of complete fonts and the extension of existing fonts to new scripts. This substantially reduces the time and expertise needed to create multilingual font families. By allowing type designers to produce stylistically matched but structurally accurate typefaces across languages, GenType-AI can support global branding, digital communication, and education, and can help make inclusive design practice more widespread.
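
The shared-encoder and script-specific-decoder arrangement can be sketched structurally as below. Everything here is a toy stand-in: the encoder simply averages per-glyph attributes, each decoder only clamps the shared style to a per-script range, and the numeric limits are placeholders with no empirical basis.

```python
from typing import Dict, List, Tuple

Style = Dict[str, float]


def encode_style(glyph_features: List[Style]) -> Style:
    """Shared encoder stand-in: average per-glyph attributes into one style."""
    n = len(glyph_features)
    return {k: sum(g[k] for g in glyph_features) / n for k in glyph_features[0]}


# Script-aware constraints as allowed ranges per attribute.
# The numbers are illustrative placeholders, not measured values.
SCRIPT_LIMITS: Dict[str, Dict[str, Tuple[float, float]]] = {
    "Latin": {"weight": (0.1, 1.0), "contrast": (0.0, 1.0)},
    "Devanagari": {"weight": (0.2, 0.8), "contrast": (0.0, 0.6)},
}


def decode_for_script(style: Style, script: str) -> Style:
    """Script-specific decoder stand-in: clamp the style to the script's limits."""
    limits = SCRIPT_LIMITS[script]
    return {k: min(max(v, limits[k][0]), limits[k][1]) for k, v in style.items()}


shared = encode_style([{"weight": 0.9, "contrast": 0.7},
                       {"weight": 0.9, "contrast": 0.7}])
latin_style = decode_for_script(shared, "Latin")
devanagari_style = decode_for_script(shared, "Devanagari")
```

The point of the structure is that style is computed once in a script-independent space, while each decoder is free to adjust it so that the target script's structural requirements are not violated.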

5. Applications and Use-Cases

5.1. Personalized Font Creation

One of the most significant uses of generative AI-based typography is font personalization. Traditional custom font development is time-consuming, expensive, and dependent on specialist skills, which puts it out of reach of individual users, teachers, and small organisations. GenType-AI allows users to create personalized typefaces from minimal inputs such as handwriting samples, desired visual styles, or reference fonts. Because the system encodes individual stroke characteristics, spacing preferences, and stylistic tendencies, it generates fonts that carry a personal identity while remaining typographically consistent and legible. This is especially useful in digital communication, where personalization increases emotional appeal and user engagement. In educational contexts, fonts may be adapted to the needs of learners with dyslexia or motor impairments to support their reading. In creative fields, artists and designers can quickly test distinctive typographic designs without redrawing whole glyph sets by hand. The system also supports iterative refinement, in which users interactively adjust parameters such as weight, contrast, or curvature.

5.2. Dynamic Typography for Branding and Advertising

Typography in branding and advertising is increasingly expected to be adaptive, expressive, and responsive to context. Static typefaces struggle to convey changing brand identities across digital platforms, campaigns, and audiences. GenType-AI enables dynamic typography generation, in which stylistic features are adjusted according to branding rules, content tone, or platform constraints. By conditioning typography on semantic inputs such as brand personality or campaign mood, the system can create letterforms that complement the emotional message while remaining visually consistent. For example, a single brand name can be rendered with minor typographic differences across social media, video, and print without losing familiarity. In advertising, dynamic typography makes it possible to try many visual narratives quickly and supports A/B testing and data-driven engagement optimization. Motion and interactive media also benefit from adaptive typography that reacts to user interaction or to the surrounding environment. GenType-AI further saves production time by automatically generating the font weights, styles, and layouts that fit a brand's visual language. This lets marketers and designers work on strategy and narrative instead of creating assets manually. As branding becomes more experiential and personal, AI-enabled dynamic typography offers a scalable way to maintain coherence while allowing creative variability across communication channels.

6. Results and Discussion

The experimental analysis of GenType-AI indicates that generative AI can be used effectively for high-quality typeface design. The generated fonts are typographically consistent across glyph sets while remaining stylistically varied through the conditioning mechanisms. Quantitative results show high structural similarity and coherent strokes, and expert reviewers report better aesthetic balance and readability than for baseline AI font models. The framework is especially effective in style transfer and multilingual synthesis, generating typefaces that are visually consistent across scripts. GenType-AI also shows practical value through reduced design time and improved scalability. Overall, the results suggest that combining generative models with typographic knowledge can make typography generation reliable, creative, and controllable.

Table 2 Performance Comparison of Generative Models for Typography Design

| Model Type | Structural Similarity Index (SSIM) | Stroke Consistency Score (%) | Style Fidelity Score (%) | Glyph Completion Accuracy (%) |
|---|---|---|---|---|
| GAN-based Model | 0.86 | 82.4 | 80.1 | 88.6 |
| VAE-based Model | 0.83 | 79.6 | 77.8 | 86.2 |
| Diffusion Model | 0.91 | 88.9 | 86.7 | 92.8 |
| GenType-AI (Proposed) | 0.94 | 92.6 | 91.3 | 95.4 |

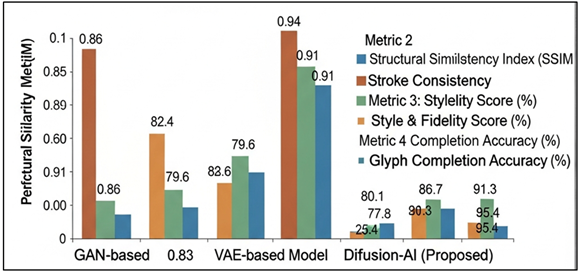

Table 2 presents a comparative analysis of the generative models used in typography design and shows the benefits of the GenType-AI framework. The GAN-based model attains a moderate SSIM of 0.86 with reasonable stroke consistency (82.4%) and style fidelity (80.1%), which means that the generated glyphs are visually plausible yet vary noticeably in structure. Figure 3 shows that the GAN-based model outperforms the VAE-based model on the typography generation metrics. The VAE-based model has the weakest performance, especially in style fidelity (77.8%), which is attributed to the tendency of VAEs to smooth out fine-grained typographic patterns.

Figure 3 Performance Comparison of GAN-Based and VAE-Based Generative Models

Diffusion models perform significantly better, with an SSIM of 0.91 and a glyph completion accuracy of 92.8%, which confirms their strength as reliable character generators. Figure 4 presents a multi-metric analysis of the generative models for stylized glyph reconstruction.

Figure 4 Multi-Metric Evaluation of Generative Models for Stylized Glyph Reconstruction

GenType-AI performs best on all metrics, with the highest SSIM (0.94), stroke consistency (92.6%), and style fidelity (91.3%). Its high glyph completion accuracy (95.4%) shows its ability to produce complete and coherent font sets. These findings support combining the GAN, VAE, and diffusion paradigms into an integrated, style-conditioned model for advanced typography creation.
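
For readers unfamiliar with the SSIM values reported in Table 2, the sketch below computes a simplified, single-window version of the structural similarity index between two flattened grayscale glyph images. Standard SSIM averages this quantity over local sliding windows; the global variant and the function name are simplifying assumptions, and the paper does not state which implementation was used.

```python
from typing import List


def global_ssim(x: List[float], y: List[float],
                dynamic_range: float = 1.0) -> float:
    """Single-window SSIM between two equally sized, flattened images."""
    if len(x) != len(y) or not x:
        raise ValueError("inputs must be non-empty and the same length")
    n = len(x)
    mu_x, mu_y = sum(x) / n, sum(y) / n
    var_x = sum((a - mu_x) ** 2 for a in x) / n
    var_y = sum((b - mu_y) ** 2 for b in y) / n
    cov = sum((a - mu_x) * (b - mu_y) for a, b in zip(x, y)) / n
    # Stabilizing constants with the conventional K1 = 0.01 and K2 = 0.03.
    c1 = (0.01 * dynamic_range) ** 2
    c2 = (0.03 * dynamic_range) ** 2
    return ((2 * mu_x * mu_y + c1) * (2 * cov + c2)) / (
        (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2))
```

Identical images score 1, and the score drops as luminance, contrast, or structure diverge, so a generated glyph is compared against its reference glyph pixel by pixel after both are normalized to the same size.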

Table 3 Impact of GenType-AI Across Typography Design Applications

| Application Scenario | Design Time Reduction (%) | Style Customization Flexibility (%) | Readability Score (%) | Cross-Script Consistency (%) |
|---|---|---|---|---|
| Personalized Fonts | 58.4 | 90.2 | 88.6 | 84.9 |
| Branding and Advertising Typography | 46.7 | 87.5 | 86.3 | 82.1 |
| Multilingual Type Systems | 52.9 | 85.8 | 87.4 | 91.6 |

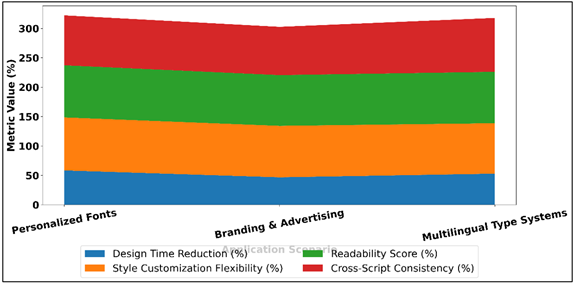

Table 3 shows the application-level effects of GenType-AI in three typography design scenarios. The framework achieves its highest design time reduction (58.4%) and style customization flexibility (90.2%) in personalized font creation, because it can generate personalized typefaces quickly without compromising aesthetic control. Figure 5 shows that the performance of generative typography varies across real-world application scenarios.

Figure 5 Performance Metrics Across Typography Application Scenarios

The 88.6% readability score for personalized fonts also indicates that personalization does not compromise functional clarity. In branding and advertising typography, GenType-AI reduces design time by 46.7% and offers stylistic flexibility of 87.5%, which supports responsive branding across campaigns. Although cross-script consistency is the lowest in this scenario (82.1%), it should be adequate where branding relies mainly on a single script.
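The comparisons drawn from Table 3 can be reproduced with a few lines of code. The sketch below transcribes the table values and reports, for each metric, the best-scoring scenario and the spread between the highest and lowest scores. The dictionary layout, the metric keys, and the helper names `best_scenario_per_metric` and `metric_spread` are hypothetical and chosen only for illustration; the spread is a derived quantity that the paper itself does not report.

```python
from typing import Dict

# Values transcribed from Table 3 (all in percent).
TABLE_3: Dict[str, Dict[str, float]] = {
    "Personalized Fonts": {
        "design_time_reduction": 58.4,
        "style_customization_flexibility": 90.2,
        "readability_score": 88.6,
        "cross_script_consistency": 84.9,
    },
    "Branding and Advertising Typography": {
        "design_time_reduction": 46.7,
        "style_customization_flexibility": 87.5,
        "readability_score": 86.3,
        "cross_script_consistency": 82.1,
    },
    "Multilingual Type Systems": {
        "design_time_reduction": 52.9,
        "style_customization_flexibility": 85.8,
        "readability_score": 87.4,
        "cross_script_consistency": 91.6,
    },
}


def best_scenario_per_metric(table: Dict[str, Dict[str, float]]) -> Dict[str, str]:
    """Return, for each metric, the scenario with the highest score."""
    metrics = next(iter(table.values())).keys()
    return {m: max(table, key=lambda s: table[s][m]) for m in metrics}


def metric_spread(table: Dict[str, Dict[str, float]]) -> Dict[str, float]:
    """Return, for each metric, the gap between the best and worst scenario."""
    metrics = next(iter(table.values())).keys()
    spread = {}
    for m in metrics:
        values = [scores[m] for scores in table.values()]
        spread[m] = max(values) - min(values)
    return spread


if __name__ == "__main__":
    best = best_scenario_per_metric(TABLE_3)
    spread = metric_spread(TABLE_3)
    for metric in best:
        print(f"{metric}: best = {best[metric]}, spread = {spread[metric]:.1f} points")
```

Read this way, personalized fonts lead on three of the four metrics, while multilingual type systems lead on cross-script consistency.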

7. Conclusion

This paper sets out to reinvent typography design using generative artificial intelligence. It presents GenType-AI, a framework that integrates established principles of typography with current deep learning algorithms. By integrating GANs, VAEs, and diffusion models, the framework addresses the main limitations of traditional and earlier AI-based typography workflows, such as scale, style, and multilingual versatility. The findings indicate that generative AI can go beyond experimental font synthesis and support practical, high-quality typographic design in real-world use. GenType-AI enables designers to create complete, stylistically consistent fonts from a small amount of input without compromising critical properties such as readability, balance, and visual rhythm. Style conditioning enables creative exploration and gives control over variation and hybrid typography, which are hard to achieve manually. Cross-lingual synthesis adds to the relevance of the framework and supports inclusive visual communication across writing systems worldwide. Notably, this study positions AI not as a substitute for typographic skill but as an intelligent creative partner. By automating repetitive, time-intensive design tasks, generative AI frees designers to concentrate on conceptual intent, narrative, and cultural expression. The framework also lowers barriers to entry and democratizes font production for individuals, educators, and small organizations. Despite these strengths, the study recognizes that human judgment remains necessary for subtle aesthetic evaluation and cultural sensitivity. Future work may extend GenType-AI with multimodal inputs, adaptive learning, and real-time deployment.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Badini, S., Regondi, S., Frontoni, E., and Pugliese, R. (2023). Assessing the Capabilities of ChatGPT to Improve Additive Manufacturing Troubleshooting. Advanced Industrial and Engineering Polymer Research, 6, 278–287. https://doi.org/10.1016/j.aiepr.2023.03.003

Baidoo-Anu, D., and Owusu Ansah, L. (2023). Education in the Era of Generative Artificial Intelligence (AI): Understanding the Potential Benefits of ChatGPT in Promoting Teaching and Learning. Journal of AI, 7, 52–62. https://doi.org/10.61969/jai.1337500

Banh, L., and Strobel, G. (2023). Generative Artificial Intelligence. Electronic Markets, 33, 63. https://doi.org/10.1007/s12525-023-00680-1

Brisco, R., Hay, L., and Dhami, S. (2023). Exploring the Role of Text-to-Image AI in Concept Generation. Proceedings of the Design Society, 3, 1835–1844. https://doi.org/10.1017/pds.2023.184

Feng, Y., Wang, X., Wong, K. K., Wang, S., Lu, Y., Zhu, M., Wang, B., and Chen, W. (2024). PromptMagician: Interactive Prompt Engineering for Text-to-Image Creation. IEEE Transactions on Visualization and Computer Graphics, 30(1), 295–305. https://doi.org/10.1109/TVCG.2023.3327168

Jaruga-Rozdolska, A. (2022). Artificial Intelligence as Part of Future Practices in the Architect’s Work: MidJourney Generative Tool as Part of a Process of Creating an Architectural Form. Architectus, 3, 95–104. https://doi.org/10.37190/arc220310

Jasche, F., Weber, P., Liu, S., and Ludwig, T. (2023). PrintAssist—A Conversational Human–Machine Interface for 3D Printers. i-com, 22, 3–17. https://doi.org/10.1515/icom-2022-0045

Khorasani, M., Ghasemi, A., Rolfe, B., and Gibson, I. (2022). Additive Manufacturing a Powerful Tool for the Aerospace Industry. Rapid Prototyping Journal, 28, 87–100. https://doi.org/10.1108/RPJ-01-2021-0009

Li, Y., Wu, H., Tamir, T. S., Shen, Z., Liu, S., Hu, B., and Xiong, G. (2023). An Efficient Product-Customization Framework Based on Multimodal Data Under the Social Manufacturing Paradigm. Machines, 11, 170. https://doi.org/10.3390/machines11020170

Lo, L. S. (2023). The CLEAR Path: A Framework for Enhancing Information Literacy Through Prompt Engineering. Journal of Academic Librarianship, 49, 102720. https://doi.org/10.1016/j.acalib.2023.102720

Oppenlaender, J. (2022). The Creativity of Text-to-Image Generation. In Proceedings of the 25th International Academic Mindtrek Conference (192–202). https://doi.org/10.1145/3569219.3569352

Rudolph, J., Tan, S., and Tan, S. (2023). War of the Chatbots: Bard, Bing Chat, ChatGPT, Ernie and Beyond. The New AI Gold Rush and Its Impact on Higher Education. Journal of Applied Learning and Teaching, 6, 364–389. https://doi.org/10.37074/jalt.2023.6.1.23

Saka, A. B., Oyedele, L. O., Akanbi, L. A., Ganiyu, S. A., Chan, D. W., and Bello, S. A. (2023). Conversational Artificial Intelligence in the AEC Industry: A Review of Present Status, Challenges and Opportunities. Advanced Engineering Informatics, 55, 101869. https://doi.org/10.1016/j.aei.2022.101869

Sikri, A., Sikri, J., and Gupta, R. (2024). AI-Powered Dentistry: Revolutionizing Oral Care. ShodhAI: Journal of Artificial Intelligence, 1(1), 1–8. https://doi.org/10.29121/shodhai.v1.i1.2024.2

Short, C. E., and Short, J. C. (2023). The Artificially Intelligent Entrepreneur: ChatGPT, Prompt Engineering, and Entrepreneurial Rhetoric Creation. Journal of Business Venturing Insights, 19, e00388. https://doi.org/10.1016/j.jbvi.2023.e00388

Strobelt, H., Webson, A., Sanh, V., Hoover, B., Beyer, J., Pfister, H., and Rush, A. M. (2023). Interactive and Visual Prompt Engineering for Ad-Hoc Task Adaptation With Large Language Models. IEEE Transactions on Visualization and Computer Graphics, 29, 1146–1156. https://doi.org/10.1109/TVCG.2022.3209479

Wang, J., Liu, Z., Zhao, L., Wu, Z., Ma, C., Yu, S., Dai, H., Yang, Q., Liu, Y., Zhang, S., et al. (2023). Review of Large Vision Models and Visual Prompt Engineering. Meta-Radiology, 1, 100047. https://doi.org/10.1016/j.metrad.2023.100047

This work is licensed under a: Creative Commons Attribution 4.0 International License

© ShodhKosh 2026. All Rights Reserved.