ShodhKosh: Journal of Visual and Performing Arts
ISSN (Online): 2582-7472

AI-Based Restoration of Digital Heritage Artworks

Dharmesh Dhabliya 1, Nidhi Tewatia 2, Manjeet Natuk Gajbhiye 3, Shanthi V 4, Vinit Khetani 5, Dr. Gajanan P. Arsalwad 6

1 Vishwakarma Institute of Technology, Pune, Maharashtra, India

2 Assistant Professor, School of Business Management, Noida International University, Greater Noida 203201, India

3 Department of Mechanical Engineering, Suryodaya College of Engineering and Technology, Nagpur, Maharashtra, India

4 Professor, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600078, India

5 Researcher Connect Innovation and Impact Pvt. Ltd, Nagpur, Maharashtra, India

6 Assistant Professor, Department of Computer Engineering, Trinity College of Engineering and Research, Pune, India

ABSTRACT

Digitization of heritage artworks is essential for preserving cultural memory and providing long-term access to historically important visual objects. However, digitized heritage materials are often affected by noise, fading, physical damage, and missing regions, caused both by the physical decay of the original materials and by shortcomings in the digitization processes of heritage institutions. This paper presents a culturally sensitive AI-based restoration framework that improves visual quality without compromising stylistic integrity or historical authenticity. The model combines convolutional neural networks for local feature extraction, generative models for reconstructing severely damaged or missing areas, and transformers for modeling global context to achieve compositional coherence. Cultural constraints and expert-in-the-loop validation are incorporated to address ethical and authenticity concerns and to minimize the risk of over-restoration and stylistic distortion. The proposed technique is evaluated on a variety of digital heritage collections, including paintings, manuscripts, murals, folk art, and archival photographs. Experimental results show that, compared with traditional and baseline AI restoration methods, the proposed framework achieves consistent improvements in PSNR, SSIM, and LPIPS. Case analyses and expert evaluation further indicate that the framework strikes an appropriate balance between visual enhancement and cultural conformity, making it suitable for scalable and responsible preservation of digital heritage.


Received 07 September 2025
Accepted 02 December 2025
Published 17 February 2026

Corresponding Author
Dharmesh Dhabliya, dharmesh.dhabliya@viit.ac.in

DOI 10.29121/shodhkosh.v7.i1s.2026.7073

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License.

With the license CC-BY, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.


Keywords: Digital Heritage Restoration, Cultural Heritage Preservation, AI-Based Restoration, Deep Learning, Generative Models, Image Inpainting, Cultural Authenticity, Expert-In-The-Loop

1. INTRODUCTION

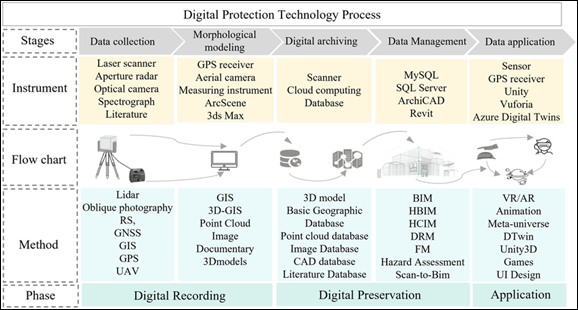

Digital heritage artworks are a priceless part of the world's cultural memory. They include digitized paintings, manuscripts, murals, folk art, photographs, and born-digital creative works that record the artistic, social, and historical narratives of civilizations. As museums, archives, libraries, and cultural organizations across the globe have rapidly digitized their holdings, digital surrogates of heritage artworks have become essential for preservation, education, research, and dissemination to the wider public. Nevertheless, these artworks are not immune to degradation even in electronic form. Low-resolution digitization, compression artifacts, color fading, noise, missing areas, scanning errors, and damage inherited from the physical originals are common problems that adversely affect the visual quality and readability of digital heritage items Bonazza and Sardella (2023). Repeated format transfers, storage limitations, and legacy digitization practices have further compounded these difficulties, endangering the durability and authenticity of cultural records. Traditional heritage art restoration, whether physical or digital, depends extensively on professional conservators and artists who use manual or semi-automated methods to repair damage and improve visual consistency. Although these methods are effective, they are inherently time-consuming, subjective, and hard to apply to extensive digital collections Casillo et al. (2022). 
Furthermore, conventional digital restoration methods frequently rely on hand-crafted rules or local image processing techniques that are difficult to generalize across a variety of artistic styles, materials, and degradation patterns, as shown in Figure 1. In the context of massive digitization projects and growing online heritage archives, these constraints demonstrate the need for more adaptive, efficient, and intelligent restoration methods. For digital heritage, AI-driven restoration systems can learn complex visual patterns directly from data, enabling automated restoration of damaged areas, refinement of fine artistic features, and recovery of chromatic balance with minimal human intervention De Lucia et al. (2024).

Figure 1

Figure 1 Conceptual Overview of AI-Based Restoration of Digital Heritage Artworks

However, applying AI to digital heritage restoration raises critical issues beyond technical performance. Cultural heritage artworks embody symbolic meanings, stylistic conventions, and historical contexts that must not be lost during restoration. The major risks are over-restoration, stylistic distortion, algorithmic bias, and loss of authenticity when AI models are trained or deployed without cultural awareness and professional supervision. Restoration systems therefore have to balance visual enhancement against ethical accountability, ensuring that restored outputs remain faithful to the original purpose of the artwork and its historical background.

2. Problem Definition and Research Framework

The goal of digital heritage art restoration is to recover cultural artifacts that are visually damaged or partially lost so that they can be perceived more clearly, without losing historical integrity or artistic intent Goswami et al. (2022). Defining this problem formally is necessary to distinguish heritage-oriented restoration from generic image enhancement and to design an adequate AI-based framework. Unlike natural photographs, digital heritage artworks have distinctive stylistic conventions, symbolic motifs, and material peculiarities that must be preserved during restoration Jiang et al. (2022). As a result, the restoration problem considered in this research is constrained by cultural, ethical, and contextual factors in addition to technical performance requirements.

2.1. Problem Definition

Let $I_d$ denote a damaged digital heritage artwork acquired through digitization or archival means, and let $I_r$ denote the desired restored artwork, which should represent the original artwork $I_o$; the original may be lost in whole or in part. The degradation process is modeled as:

$$I_d = D(I_o, \eta)$$

where $D(\cdot)$ is an unknown degradation operator that includes noise, blur, fading, compression artifacts, missing regions, and digitization errors, and $\eta$ denotes stochastic or systematic degradation variables. The restoration problem is to approximate a mapping function $R(\cdot)$ such that:

$$I_r = R(I_d)$$

Here, $I_r$ must remain faithful to the stylistic, semantic, and cultural features of $I_o$. This is a vital concern in a heritage setting, where visually plausible reconstructions that depart from historical or symbolic verisimilitude are unacceptable Kharroubi et al. (2022). Thus, the restoration problem addressed in this work is not simply the optimization of pixel similarity but a multi-objective problem that balances visual fidelity, perceptual coherence, and cultural authenticity.
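To make the degradation model concrete, the sketch below implements a toy operator in Python (the language used for the paper's implementation), assuming grayscale images stored as nested lists of floats in [0, 1]. The chosen components (linear fading toward mid-gray, additive Gaussian noise, and one rectangular missing region) and the function name `degrade` are illustrative assumptions, not the paper's degradation model; the stochastic variables η are represented here by a seeded random generator together with the explicit parameters.

```python
import random


def degrade(image, fade=0.3, noise_std=0.05, hole=None, seed=0):
    """Illustrative stand-in for D(I_o, eta) on a grayscale image.

    image: list of rows of floats in [0, 1] (assumed representation).
    fade: fraction by which each pixel is pulled toward mid-gray (0.5).
    noise_std: standard deviation of additive Gaussian noise.
    hole: optional (top, left, height, width) rectangle treated as missing.
    seed: fixes the stochastic part of eta so the result is reproducible.
    Returns the degraded image and a mask where 1 marks a missing pixel.
    """
    rng = random.Random(seed)
    degraded, mask = [], []
    for r, row in enumerate(image):
        out_row, mask_row = [], []
        for c, value in enumerate(row):
            value = (1 - fade) * value + fade * 0.5      # fading / contrast loss
            value += rng.gauss(0.0, noise_std)           # acquisition or grain noise
            value = min(1.0, max(0.0, value))            # keep within [0, 1]
            missing = hole is not None and (
                hole[0] <= r < hole[0] + hole[2] and hole[1] <= c < hole[1] + hole[3]
            )
            out_row.append(0.0 if missing else value)    # missing region set to 0
            mask_row.append(1 if missing else 0)
        degraded.append(out_row)
        mask.append(mask_row)
    return degraded, mask


clean = [[0.8] * 4 for _ in range(4)]
damaged, mask = degrade(clean, hole=(1, 1, 2, 2))
```

Because the clean input is known, each output forms an ($I_d$, $I_o$) pair of the kind used for supervised training, and the returned mask marks the region an inpainting module would need to fill.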

3. Background and Related Work

Conservation of heritage artworks has traditionally relied on manual methods underpinned by expertise in art history, material science, and aesthetic interpretation. As cultural collections are digitized at scale, restoration has shifted increasingly to the digital realm, giving rise to digital heritage restoration. Artworks preserved in digital form, such as digitized paintings, manuscripts, murals, photographs, and folk art, as well as born-digital creative works, are considered digital heritage artworks Kim and Lee (2022). Digitization improves accessibility and preservation, but it also introduces problems such as noise, color distortion, compression, scanning errors, and loss of visual detail Chindengwike (2024). Combined with legacy digitization technologies and repeated data restructuring, these problems call for efficient and scalable restoration procedures. Early digital restoration methods were mainly classical image processing techniques. They used deterministic algorithms such as filtering, histogram equalization, edge detection, interpolation, and patch-based inpainting [8]. Median or Gaussian filters were often used to reduce noise, while color degradation was corrected either with global adjustments or with manual enhancement rules. Table 1 compares traditional and AI-based digital restoration techniques, highlighting the fundamental differences in automation, scalability, flexibility, and reconstruction capability. Conventional systems are characterized by limited scalability, reliance on expert intervention, and minimal capability to handle complex degradations. In contrast, AI-based methods are highly scalable, offer better reconstruction fidelity, and can support diverse heritage datasets Li and Zhang (2025). 
Nevertheless, the table also shows that AI-based restoration introduces its own problems, such as higher computational demands and a risk of over-restoration when cultural constraints are not implemented explicitly.
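As an illustration of the classical, deterministic methods mentioned above, the following is a minimal sketch of a 3 × 3 median filter for grayscale images stored as nested lists. Border handling by edge replication is one common convention among several, and the function name is illustrative.

```python
from statistics import median


def median_filter3(image):
    """3x3 median filter for a grayscale image given as a list of rows.

    Border pixels use replicated edges, one of several common conventions.
    """
    height, width = len(image), len(image[0])

    def pixel(r, c):
        # Clamp coordinates so the 3x3 window never leaves the image.
        return image[min(max(r, 0), height - 1)][min(max(c, 0), width - 1)]

    return [
        [
            median(pixel(r + dr, c + dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1))
            for c in range(width)
        ]
        for r in range(height)
    ]


speckled = [[0.5] * 5 for _ in range(5)]
speckled[2][2] = 1.0              # a single impulse-noise pixel
cleaned = median_filter3(speckled)
```

The isolated impulse is removed because it is outvoted by its eight neighbors. A fixed rule of this kind cannot, however, reconstruct a large missing region, where the window contains no valid information, which is the limitation summarized in Table 1.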

Table 1

Table 1 Comparison of Traditional and AI-Based Digital Heritage Restoration Methods

| Aspect | Traditional Digital Restoration Methods | AI-Based Restoration Methods |
|---|---|---|
| Core Approach Liu et al. (2021) | Rule-based image processing and manual editing | Data-driven deep learning models |
| Level of Automation Moualla et al. (2017) | Low to moderate | High |
| Scalability | Limited for large archives | Highly scalable |
| Handling of Complex Degradation Noh et al. (2022) | Ineffective for severe damage | Effective reconstruction via learned patterns |
| Style Consistency | Difficult to maintain | Learned style representations |
| Adaptability to Art Forms Sun et al. (2025) | Manual reconfiguration required | Generalizes across styles |
| Missing Region Reconstruction | Patch-based interpolation | GAN and diffusion-based inpainting |
| Global Context Awareness | Local neighborhood processing | Long-range dependencies via transformers |
| Evaluation Strategy Zielke (2020) | Visual inspection and heuristics | Quantitative metrics + expert review |
| Cultural Sensitivity | Strong with expert guidance | Requires explicit constraints |
| Risk of Over-Restoration | Low | Moderate without safeguards |
| Reproducibility | Low | High |
| Suitability for Large-Scale Projects | Poor | Excellent |

Despite the clear benefits of AI-based restoration approaches, the current literature also reveals significant gaps. Most models are trained on generic photo collections that do not represent the stylistic diversity and symbolic richness of heritage art. The metrics commonly used in evaluation (e.g., PSNR or SSIM) are pixel- or structure-oriented and may not reflect perceptual quality or cultural authenticity. In addition, ethical concerns about authorship, interpretability, and historical accuracy remain under-researched. These limitations motivate restoration frameworks that combine state-of-the-art AI methods with cultural knowledge, professional validation, and ethically sound constraints.
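To make the pixel-oriented nature of PSNR concrete, the following sketch computes it for grayscale images with values in [0, 1], using the standard definition PSNR = 10·log10(MAX²/MSE). The two example outputs are hypothetical: one has a uniform color cast and the other a checkerboard artifact, yet they have identical MSE and therefore identical PSNR, which is why such a score cannot by itself establish perceptual or cultural faithfulness.

```python
import math


def psnr(reference, restored, max_value=1.0):
    """PSNR in dB between two equally sized grayscale images (lists of rows)."""
    ref = [v for row in reference for v in row]
    out = [v for row in restored for v in row]
    if len(ref) != len(out):
        raise ValueError("images must have the same number of pixels")
    mse = sum((a - b) ** 2 for a, b in zip(ref, out)) / len(ref)
    if mse == 0:
        return math.inf  # identical images
    return 10 * math.log10(max_value ** 2 / mse)


reference = [[0.5, 0.5], [0.5, 0.5]]
tinted = [[0.6, 0.6], [0.6, 0.6]]         # uniform color cast
checkered = [[0.4, 0.6], [0.6, 0.4]]      # high-frequency artifact
print(round(psnr(reference, tinted), 2), round(psnr(reference, checkered), 2))  # 20.0 20.0
```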

4. Research Framework Overview

The conceptual model of the proposed study frames AI-based digital heritage restoration as a structured pipeline consisting of data collection, degradation-aware preprocessing, deep learning-based restoration, cultural constraint control, and multi-tier assessment. The framework is modular and scalable so that it can accommodate different types of heritage artworks and institutional needs.

Figure 2

Figure 2 Conceptual Overview of AI-Based Digital Heritage Artwork Restoration

The framework takes as input digitized heritage artworks provided by museums, archives, or cultural repositories. These inputs can be highly heterogeneous in resolution, color depth, and degradation properties. Preprocessing normalizes the inputs, removes acquisition noise, and detects degradation patterns, as shown in Figure 2. Rather than applying only generic preprocessing, this stage uses degradation modeling to simulate historical wear, fading, or scanning artifacts, which strengthens subsequent learning. The core of the framework is a deep learning-based restoration unit that combines several architectural paradigms. Convolutional neural networks extract local features and represent texture and fine-grained details, e.g., brush strokes or manuscript fibers. To maintain global consistency and compositional balance, transformer-based components model long-range dependencies across the entire artwork. This hybrid structure enables the framework to handle localized damage while preserving global stylistic coherence.
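The modular design can be sketched as an ordered list of named, interchangeable stages. In this minimal sketch only min-max normalization is implemented; `identity` is a hypothetical placeholder for the CNN, generative, and transformer modules, which are not reproduced here, and all stage names are illustrative. Recording which stages ran gives reviewers a simple audit trail, in the spirit of the framework's expert-in-the-loop validation.

```python
from typing import Callable, List, Tuple

Image = List[List[float]]
Stage = Tuple[str, Callable[[Image], Image]]


def normalize(image: Image) -> Image:
    """Min-max normalize pixel values to [0, 1] (assumed preprocessing step)."""
    lo = min(min(row) for row in image)
    hi = max(max(row) for row in image)
    span = (hi - lo) or 1.0  # flat images: avoid division by zero
    return [[(v - lo) / span for v in row] for row in image]


def identity(image: Image) -> Image:
    """Hypothetical placeholder for a learned module; returns a copy unchanged."""
    return [row[:] for row in image]


def run_pipeline(image: Image, stages: List[Stage]) -> Tuple[Image, List[str]]:
    """Apply the stages in order and record their names for later expert review."""
    applied = []
    for name, stage in stages:
        image = stage(image)
        applied.append(name)
    return image, applied


STAGES: List[Stage] = [
    ("normalize", normalize),
    ("local_features_cnn", identity),          # stands in for the CNN module
    ("generative_reconstruction", identity),   # stands in for the GAN/diffusion module
    ("global_context_transformer", identity),  # stands in for the transformer module
]

restored, audit_trail = run_pipeline([[0.0, 128.0], [255.0, 64.0]], STAGES)
```

Because each stage shares the same image-to-image signature, a module can be swapped (for example, a diffusion model in place of a GAN) without changing the rest of the pipeline.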

5. Proposed AI-Based Restoration Methodology

Because AI-based restoration offers a viable solution to these problems, the proposed methodology is presented below.

Digital heritage artworks, such as digitized paintings, manuscripts, murals, folk art, photographs, and born-digital works preserved by museums and cultural institutions, are an important part of global cultural memory. Although digitization increases accessibility and supports long-term preservation, digital heritage resources remain susceptible to degradation from low-resolution digitization, compression artifacts, color fading, noise, scanning artifacts, and damage inherited from the physical original. Legacy digitization technologies and repeated data conversion further compound these problems, lowering visual quality and interpretability. Conventional restoration relies mainly on manual or semi-automatic methods supervised by professional restorers. Although powerful, these approaches are laborious and subjective, do not scale to large digital collections, and usually depend on hand-crafted rules that do not generalize across artistic styles and degradation patterns. Advances in artificial intelligence have opened new possibilities for digital heritage restoration. Deep learning methods such as CNNs, GANs, transformers, and diffusion models can automatically perform denoising, inpainting, super-resolution, and color restoration, which makes them suitable for large-scale heritage collections. The resulting hybrid, multi-stage restoration architecture is shown in Figure 3.

Figure 3

Figure 3 Restoration Architecture Containing a Hybrid, Multi-Stage Design

The second stage is a Generative Reconstruction Module that handles severe degradations such as cracks, missing parts, or pigment loss. Depending on the restoration task, this module may be implemented with either Generative Adversarial Networks (GANs) or diffusion-based generative models. GANs are effective for inpainting and color reconstruction because they learn realistic texture distributions, whereas diffusion models are more stable to train and offer finer control over reconstruction quality through iterative denoising. This generative stage enables realistic reconstruction without overly smooth or unnatural results.
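For the diffusion-based variant, iterative denoising is learned against a fixed forward noising process. The sketch below shows the standard DDPM-style forward process, q(x_t | x_0) = N(√ᾱ_t · x_0, (1 − ᾱ_t) I), with a linear β schedule. The schedule length and β range (1000 steps from 1e-4 to 0.02) are common defaults in the diffusion literature and are assumptions here, since the paper does not report its diffusion configuration; the learned reverse (denoising) network is not shown.

```python
import math
import random


def linear_betas(steps=1000, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced noise variances beta_1..beta_T (assumed schedule)."""
    if steps == 1:
        return [beta_start]
    return [beta_start + (beta_end - beta_start) * t / (steps - 1) for t in range(steps)]


def alpha_bars(betas):
    """Cumulative products alpha_bar_t = prod_{s<=t} (1 - beta_s)."""
    out, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        out.append(running)
    return out


def q_sample(x0, t, abar, rng):
    """Draw x_t ~ q(x_t | x_0) for a flat list of pixel values x0 (t is 0-indexed)."""
    signal = math.sqrt(abar[t])
    noise_scale = math.sqrt(1.0 - abar[t])
    return [signal * v + noise_scale * rng.gauss(0.0, 1.0) for v in x0]


betas = linear_betas()
abar = alpha_bars(betas)
x0 = [0.5] * 8
x_early = q_sample(x0, 0, abar, random.Random(0))    # nearly identical to x0
x_late = q_sample(x0, 999, abar, random.Random(0))   # nearly pure Gaussian noise
```

At large t the sample carries almost no trace of the artwork, and the reverse process removes noise step by step; in an inpainting setting, one common strategy is to re-impose the known (undamaged) pixels at each reverse step so that only the missing region is generated.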

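$$\mathcal{L}_{\text{total}} = \lambda_1 \mathcal{L}_{\text{pixel}} + \lambda_2 \mathcal{L}_{\text{perceptual}} + \lambda_3 \mathcal{L}_{\text{adv}} + \lambda_4 \mathcal{L}_{\text{style}} + \lambda_5 \mathcal{L}_{\text{cultural}}$$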
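The weighted objective can be sketched as a sum over named loss terms. In this minimal sketch only the pixel-level L1 term is actually computed; the perceptual, adversarial, style, and cultural terms would require trained networks or the paper's cultural-constraint formulation, which is not specified here, so they are passed in as precomputed numbers. The λ values are hypothetical, since Table 3 states only that the loss weights were tuned via validation.

```python
def l1_loss(output, target):
    """Mean absolute error over flat lists of pixel values (pixel-level term)."""
    return sum(abs(a - b) for a, b in zip(output, target)) / len(target)


def total_loss(terms, weights):
    """Weighted sum L_total = sum_k lambda_k * L_k over named loss terms."""
    missing = set(terms) - set(weights)
    if missing:
        raise KeyError(f"no weight given for: {sorted(missing)}")
    return sum(weights[name] * value for name, value in terms.items())


terms = {
    "pixel": l1_loss([0.2, 0.4], [0.0, 0.4]),
    "perceptual": 0.30,  # placeholder values for terms needing trained networks
    "adv": 0.70,
    "style": 0.05,
    "cultural": 0.10,
}
weights = {"pixel": 1.0, "perceptual": 0.1, "adv": 0.01, "style": 10.0, "cultural": 1.0}  # hypothetical
loss = total_loss(terms, weights)
```

Failing loudly on a term without a weight avoids silently dropping an objective, such as the cultural term, from the optimization.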

To achieve global coherence and compositional consistency, the third stage uses a Global Context Modeling Module based on the transformer architecture. Transformers use self-attention to capture long-range dependencies across the full artwork, which helps maintain spatial balance, symmetry, and semantic links between distant areas. This is particularly important for heritage artifacts, in which the overall composition is frequently symbolic or narrative. Restoration is optimized as a multi-objective problem, because no single loss function can represent both visual fidelity and cultural authenticity. The pixel-level reconstruction loss (e.g., L1 or L2 loss) enforces basic structural similarity between the restored output and the reference image, particularly in low-frequency components. Nevertheless, pixel loss alone tends to produce over-smoothed output and is therefore insufficient for heritage restoration.
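The self-attention operation used by the global context module can be illustrated with a single-head, scaled dot-product attention sketch over patch feature vectors. It omits positional encodings, multiple heads, and training; the projection matrices are supplied by the caller and are assumptions, not the paper's transformer configuration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)  # subtract the max before exponentiating
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens, wq, wk, wv):
    """Single-head scaled dot-product self-attention.

    tokens: list of n feature vectors (e.g. one per image patch).
    wq, wk, wv: d_in x d projection matrices given as lists of rows.
    Every output token is a weighted mix of all value vectors, which is how
    distant patches of an artwork can influence each other.
    """
    def project(x, w):
        return [sum(x[i] * w[i][j] for i in range(len(x))) for j in range(len(w[0]))]

    q = [project(x, wq) for x in tokens]
    k = [project(x, wk) for x in tokens]
    v = [project(x, wv) for x in tokens]
    scale = math.sqrt(len(k[0]))
    out = []
    for qi in q:
        weights = softmax([sum(a * b for a, b in zip(qi, kj)) / scale for kj in k])
        out.append([sum(w * vj[d] for w, vj in zip(weights, v)) for d in range(len(v[0]))])
    return out


eye = [[1.0, 0.0], [0.0, 1.0]]
mixed = self_attention([[1.0, 0.0], [0.0, 1.0]], eye, eye, eye)
```

Unlike a 3 × 3 convolution, the attention weights here span every token, so the cost grows quadratically with the number of patches; this is the usual trade-off that makes patch-level tokens preferable to pixel-level ones for high-resolution artworks.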

6. Experimental Setup and Implementation

This section describes the experimental design used to validate the proposed AI-based restoration framework for digital heritage artworks. The setup emphasizes reproducibility, fair benchmarking, and balanced assessment of both visual fidelity and cultural authenticity. Because heritage datasets are typically heterogeneous and few pristine reference images are available, the experimental protocol is tailored to real-world restoration conditions.

Step 1: Datasets and Data Preparation

The experimental assessment is based on curated digital heritage collections drawn from museum archives, digital libraries, and open cultural repositories. These datasets comprise digitized paintings, historical manuscripts, murals, folk and indigenous art, and archival photographs. They vary widely in artistic style, resolution, material properties, and degree of degradation, which enables a comprehensive evaluation of the restoration framework.

Table 2

Table 2 Summary of Datasets, Image Resolutions, and Degradation Types Used in Experiments

| Dataset Category | Artwork Type | Typical Resolution (pixels) | Primary Degradation Types | Source Type |
|---|---|---|---|---|
| Museum Painting Archives | Oil paintings, watercolor artworks | 1024×1024 – 4096×4096 | Color fading, blur, pigment loss, noise | Public museum repositories |
| Historical Manuscript Collections | Handwritten manuscripts, illuminated texts | 800×1200 – 3000×4000 | Ink bleed-through, stains, noise, missing text regions | Digital libraries |
| Mural and Fresco Datasets | Wall paintings, architectural art | 1024×2048 – 4096×8192 | Cracks, erosion, surface discoloration | Cultural heritage archives |
| Folk and Indigenous Art Repositories | Traditional paintings, textile patterns | 512×512 – 2048×2048 | Low resolution, compression artifacts, color distortion | Open cultural repositories |
| Archival Photograph Collections | Black-and-white and color photographs | 512×512 – 2048×2048 | Grain noise, blur, fading, scratches | Historical photo archives |
| Synthetic Degradation Set | Clean artwork images (all categories) | Matched to source images | Simulated noise, blur, fading, cracks, missing regions | Generated for training |

Because pristine ground-truth images are rarely available, a semi-supervised data preparation strategy is adopted. Images with minimal degradation are treated as pseudo ground truth, and artificial degradations are synthesized from them to create paired training samples. The degradation models simulate noise, blur, fading, compression artifacts, surface cracks, erosion, and missing regions typical of heritage artworks. The datasets, typical resolutions, and degradation types used in the experiments are summarized in Table 2. Images are downscaled to standardized sizes that fit within GPU memory limits, with aspect ratios preserved to prevent geometric distortion. Color normalization is applied conservatively to preserve the symbolic color relationships of heritage artworks. Datasets are split at the artwork level to avoid information leakage between the training, validation, and test sets.
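Two of the preparation steps above, aspect-preserving resizing and artwork-level splitting, can be sketched as follows. The maximum side length, the 80/10/10 split fractions, and the function names are hypothetical choices for illustration; the paper does not report its exact split ratios.

```python
import random


def fit_within(height, width, max_side):
    """Target size whose longer side is at most max_side, keeping the aspect ratio."""
    scale = min(1.0, max_side / max(height, width))  # never upscale
    return max(1, round(height * scale)), max(1, round(width * scale))


def split_by_artwork(samples, fractions=(0.8, 0.1, 0.1), seed=0):
    """Split (artwork_id, item) pairs so each artwork lands in exactly one subset.

    Degraded variants of the same artwork share an artwork_id, so keeping them
    together prevents leakage between training, validation and test sets.
    """
    ids = sorted({artwork_id for artwork_id, _ in samples})
    random.Random(seed).shuffle(ids)
    n_train = round(fractions[0] * len(ids))
    n_val = round(fractions[1] * len(ids))
    groups = {
        "train": set(ids[:n_train]),
        "val": set(ids[n_train:n_train + n_val]),
        "test": set(ids[n_train + n_val:]),
    }
    return {name: [s for s in samples if s[0] in members] for name, members in groups.items()}


samples = [(f"art{i}", f"variant{j}") for i in range(10) for j in range(3)]
parts = split_by_artwork(samples)
```

Splitting by image rather than by artwork would let synthetic variants of one painting appear in both training and test sets, inflating the reported scores.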

Step 2: Implementation Environment

The model is implemented in Python using the PyTorch deep learning library, with training and inference accelerated by CUDA. Experiments are run on GPU systems with sufficient memory for high-resolution artwork images. Checkpoints, model configurations, and training logs are systematically recorded to ensure reproducibility.
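A minimal sketch of the reproducibility bookkeeping described above: the run configuration is serialized canonically, fingerprinted, and saved, and the random generator is seeded from it. Only standard-library calls are used; in an actual PyTorch run one would additionally call `torch.manual_seed`. The configuration keys, seed, and run name are hypothetical, while the optimizer and initial learning rate follow Table 3.

```python
import hashlib
import json
import random


def seed_everything(seed):
    """Seed the Python RNG; a PyTorch run would also call torch.manual_seed(seed)."""
    random.seed(seed)


def make_run_record(config):
    """Bundle a config with a short fingerprint of its canonical JSON form."""
    canonical = json.dumps(config, sort_keys=True)
    fingerprint = hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]
    return {"config": config, "fingerprint": fingerprint}


def save_run_record(record, path):
    """Write the record alongside checkpoints and training logs."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(record, handle, indent=2, sort_keys=True)


config = {  # optimizer and learning rate from Table 3; other keys are hypothetical
    "run_name": "heritage-finetune-demo",
    "seed": 42,
    "optimizer": "Adam",
    "initial_lr": 1e-4,
    "lr_schedule": "step",
    "batch_size": 8,
}
seed_everything(config["seed"])
record = make_run_record(config)
```

Sorting keys before hashing makes the fingerprint independent of dictionary order, so the same settings always map to the same identifier in checkpoint names and logs.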

Step 3: Model Training

Model training follows a multi-stage strategy. In the first stage, the convolutional feature extraction and global context modeling modules are pre-trained on large-scale generic image restoration data to acquire general visual priors. The second stage fine-tunes the entire architecture on heritage-specific datasets containing synthetic defects modeled on real-world damage patterns. Training runs for multiple epochs, and early stopping based on validation performance is used to avoid overfitting. For the generative components, generator and discriminator updates are balanced to keep adversarial training stable. Table 3 lists the key hyperparameter values and training settings used in the implementation. These values were selected through validation-based tuning rather than ad hoc trial and error, which reduces their sensitivity to individual datasets.
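Validation-based early stopping can be sketched as a small helper that tracks the best validation score seen so far. The patience, the tolerance `min_delta`, and the choice of metric are assumptions, since the paper does not state which validation metric or patience it used; `mode="max"` suits PSNR or SSIM, and `mode="min"` suits losses or LPIPS.

```python
class EarlyStopping:
    """Signal a stop when the validation score has not improved for `patience` epochs."""

    def __init__(self, patience=10, min_delta=0.0, mode="max"):
        self.patience = patience
        self.min_delta = min_delta
        self.mode = mode  # "max" for PSNR/SSIM, "min" for losses or LPIPS
        self.best = None
        self.best_epoch = None
        self.bad_epochs = 0

    def _improved(self, score):
        if self.best is None:
            return True
        if self.mode == "max":
            return score > self.best + self.min_delta
        return score < self.best - self.min_delta

    def step(self, epoch, score):
        """Record one epoch's validation score; return True if training should stop."""
        if self._improved(score):
            self.best, self.best_epoch, self.bad_epochs = score, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


stopper = EarlyStopping(patience=2)
decisions = [stopper.step(epoch, psnr_db) for epoch, psnr_db in enumerate([28.0, 29.0, 28.9, 28.95])]
```

The epoch stored in `best_epoch` identifies which saved checkpoint to restore once training stops.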

Table 3

Table 3 Hyperparameter Settings and Training Configuration

| Parameter | Setting / Value |
|---|---|
| Input Image Size | 256×256 / 512×512 |
| Batch Size | 8 – 16 |
| Optimizer | Adam |
| Initial Learning Rate | 1 × 10⁻⁴ |
| Learning Rate Schedule | Step decay |
| Training Epochs | 100 – 150 |
| Loss Functions | L1, Perceptual, Adversarial, Style, Cultural |
| Loss Weights | Tuned via validation |
| Pretraining Data | Generic image restoration datasets |
| Fine-Tuning Data | Heritage-specific datasets |
| Data Augmentation | Rotation, scaling, mild color jitter |
| Framework | PyTorch |
| Hardware | GPU-enabled system (CUDA) |
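The step-decay schedule in Table 3 can be written as a closed-form function of the epoch. The initial rate of 1 × 10⁻⁴ comes from Table 3; the step size of 50 epochs and decay factor of 0.5 are hypothetical, because the paper does not report them. In PyTorch the same behavior is provided by `torch.optim.lr_scheduler.StepLR`, which takes `step_size` and `gamma` arguments.

```python
def step_decay_lr(epoch, initial_lr=1e-4, step_size=50, gamma=0.5):
    """Learning rate for a 0-indexed epoch: multiplied by gamma every step_size epochs."""
    if epoch < 0:
        raise ValueError("epoch must be non-negative")
    return initial_lr * gamma ** (epoch // step_size)


# Hypothetical 150-epoch run: inspect the rate at a few epochs.
for epoch in (0, 50, 100, 149):
    print(epoch, step_decay_lr(epoch))
```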

AI restoration also raises issues beyond technical performance. Heritage artworks express cultural symbolism and historical meaning that must be preserved. The risks of over-restoration, stylistic distortion, and loss of originality underline the need for culturally sensitive models and professional supervision to achieve ethically sound and historically accurate restoration results.

7. Case Studies on Digital Heritage Artworks

The following case studies illustrate the feasibility of the proposed AI-based restoration framework across different types of digital heritage artworks. Instead of visual illustrations, the analysis relies on quantitative outcomes for individual cases and on aggregated expert evaluations, and it is consistent with the dataset-wide performance trends of Table 5 and the qualitative assessment results of Table 6. Together, these case studies highlight the framework's ability to balance visual enhancement with cultural and stylistic fidelity.

7.1. Case Study I: Digitized Museum Paintings

Digitized museum paintings typically suffer from pigment fading, scanning noise, and loss of fine brushstroke detail. This case study examines an oil painting from a museum archive. The degraded input shows reduced color saturation and weak texture patterns, especially in dark areas. Table 4 summarizes restoration performance for this case. The proposed framework attains higher PSNR and SSIM values than the baseline methods, indicating better recovery of structural and chromatic detail, and its lower LPIPS score indicates higher perceptual similarity to the reference image.
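For reference, the sketch below computes SSIM from global image statistics using the standard luminance, contrast, and structure formula with constants C1 = (0.01·L)² and C2 = (0.03·L)², where L is the data range. This single-window version is a simplification: the usual metric averages the same expression over local windows (commonly an 11 × 11 Gaussian window), so its values will differ from library implementations. LPIPS, by contrast, is a learned distance computed from pretrained deep-network features (lower is better) and is not sketched here.

```python
def global_ssim(x, y, data_range=1.0):
    """Single-window SSIM computed from global statistics of two flat pixel lists.

    Standard SSIM averages this quantity over local windows; one global window
    is a simplification for illustration. Variances use the population form.
    """
    n = len(x)
    c1 = (0.01 * data_range) ** 2  # stabilizing constants from the SSIM definition
    c2 = (0.03 * data_range) ** 2
    mx, my = sum(x) / n, sum(y) / n
    vx = sum((a - mx) ** 2 for a in x) / n
    vy = sum((b - my) ** 2 for b in y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    return ((2 * mx * my + c1) * (2 * cov + c2)) / ((mx ** 2 + my ** 2 + c1) * (vx + vy + c2))


original = [0.1, 0.4, 0.7, 0.9]
faded = [v * 0.5 for v in original]   # same structure, lower brightness and contrast
inverted = [0.9, 0.7, 0.4, 0.1]       # same values, reversed structure
```

Unlike PSNR, the covariance term rewards preserved structure: the faded version keeps a positive score, while the structurally inverted one scores below zero.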

Table 4

Table 4 Case Study Results for Museum Painting Restoration

| Method | PSNR (dB) | SSIM | LPIPS |
|---|---|---|---|
| CNN-Based Restoration | 27.85 | 0.86 | 0.2 |
| GAN-Based Restoration | 29.12 | 0.88 | 0.17 |
| Proposed Framework | 31.48 | 0.93 | 0.11 |

Expert reviewers observed improved brushstroke continuity and more faithful historical color restoration, which correlates with the high style-consistency ratings observed in Table 4.

7.2. Case Study II: Historical Manuscript Restoration

Manuscripts are especially vulnerable to ink fading, bleed-through, staining, and lost characters. This case study focuses on a digitized manuscript page with severe ink deterioration and text loss. Traditional inpainting techniques produced visually inconsistent fills, and GAN-based techniques sometimes generated semantically incorrect characters. The proposed framework improves text legibility while preserving the manuscript's historical appearance. Table 5 summarizes the quantitative results, which show significant improvements in structural similarity and perceptual quality.

Table 5

Table 5 Case Study Results for Historical Manuscript Restoration

| Method | PSNR (dB) | SSIM | LPIPS |
|---|---|---|---|
| Patch-Based Inpainting | 24.62 | 0.78 | 0.31 |
| GAN-Based Restoration | 27.04 | 0.85 | 0.19 |
| Proposed Framework | 29.36 | 0.91 | 0.13 |

Domain experts also stressed that the recovered text was readable without appearing artificially modernized, which explains the high cultural authenticity scores noted in Table 5.

7.3. Case Study III: Murals and Frescoes

Murals and frescoes are often affected by surface cracks, erosion, and large missing areas caused by environmental exposure. This case study considers a digitized mural fragment with extensive cracking and discoloration. Baseline methods struggled to reconstruct the large damaged areas coherently. As presented in Table 6, the proposed framework outperforms the baseline methods on all three metrics, and its higher SSIM indicates better preservation of compositional structure and texture continuity.

Table 6

Table 6 Case Study Results for Mural and Fresco Restoration

| Method | PSNR (dB) | SSIM | LPIPS |
|---|---|---|---|
| CNN-Based Restoration | 26.18 | 0.82 | 0.24 |
| GAN-Based Restoration | 28.02 | 0.86 | 0.19 |
| Proposed Framework | 30.05 | 0.89 | 0.14 |

Experts highlighted the framework's ability to rebuild destroyed areas without introducing artificial textures, which is consistent with the high visual coherence scores in Table 6.

7.4. Case Study IV: Folk and Indigenous Art

Folk and indigenous artworks carry symbolic motifs and culturally defined color schemes, so preserving their authenticity is essential. This case study concerns a low-resolution digitized folk art object with compression artifacts and color distortion. The quantitative results in Table 7 show that the proposed framework produces better perceptual quality while remaining stylistically faithful.

Table 7

Table 7 Case Study Results for Folk and Indigenous Art Restoration

| Method | PSNR (dB) | SSIM | LPIPS |
|---|---|---|---|
| CNN-Based Restoration | 27.94 | 0.85 | 0.22 |
| GAN-Based Restoration | 29.48 | 0.88 | 0.18 |
| Proposed Framework | 31.02 | 0.91 | 0.12 |

Experts particularly appreciated that symbolic color associations were maintained, which reinforces the high cultural authenticity scores mentioned above. Across all case studies, the proposed AI-based restoration model consistently outperforms both conventional and baseline AI approaches. The case-level trends are consistent with the dataset-wide trends presented in Table 5 and the expert assessment outcomes presented in Table 6. These results suggest that the framework generalizes across heritage domains while remaining sensitive to artistic and cultural constraints.
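The reported PSNR margins can be put in perspective with a short calculation over the values in Tables 4 to 7. Because PSNR = 10·log10(MAX²/MSE), a gain of g dB corresponds to dividing the MSE by 10^(g/10). This interpretation is exact only for a single image pair; if the tables report PSNR averaged over several images, it is an approximation. The gains of the proposed framework over the strongest baseline (GAN-based restoration in every case) range from 1.54 dB to 2.36 dB.

```python
# PSNR (dB) values as reported in Tables 4-7: (strongest baseline, proposed).
REPORTED_PSNR = {
    "museum painting (Table 4)": (29.12, 31.48),
    "manuscript (Table 5)": (27.04, 29.36),
    "mural/fresco (Table 6)": (28.02, 30.05),
    "folk art (Table 7)": (29.48, 31.02),
}


def psnr_gain_to_mse_ratio(gain_db):
    """PSNR = 10*log10(MAX^2 / MSE), so a gain of g dB divides the MSE by 10**(g/10)."""
    return 10 ** (gain_db / 10)


for case, (baseline, proposed) in REPORTED_PSNR.items():
    gain = proposed - baseline
    ratio = psnr_gain_to_mse_ratio(gain)
    print(f"{case}: +{gain:.2f} dB, MSE about {100 * (1 - 1 / ratio):.0f}% lower")
```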

8. Conclusion

This research presents a comprehensive AI-based framework for restoring digital heritage artworks that addresses both technical degradation and the need for cultural authenticity. The proposed solution integrates convolutional feature extraction, generative reconstruction, and transformer-based global context modeling within a culturally constrained learning paradigm that goes beyond traditional and generic AI restoration methods. Experimental findings across different heritage domains, including paintings, manuscripts, murals, and folk art, show consistent gains in reconstruction fidelity (PSNR), structural preservation (SSIM), and perceptual quality (LPIPS). Case-study analyses and expert reviews further support the framework, indicating that it improves visual quality while upholding stylistic consistency, symbolic integrity, and historical plausibility. The incorporated cultural constraints and expert-in-the-loop validation were vital in reducing over-restoration and stylistic distortion, which are major threats in generative restoration systems. Overall, the proposed methodology offers a scalable, reproducible, and heritage-conscious solution that can be applied to the large digital collections of museums, libraries, and cultural institutions. Although the framework generalizes well, future research could focus on uncertainty modeling, standardized authenticity measures, multimodal restoration, and more comprehensive expert validation. The results highlight the potential of responsibly developed AI systems to play a transformative role in the sustainable preservation of the world's digital cultural heritage.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Bonazza, A., and Sardella, A. (2023). Climate Change and Cultural Heritage: Methods and Approaches for Damage and Risk Assessment. Heritage, 6, 3578-3589. https://doi.org/10.3390/heritage6040190

Casillo, M., Colace, F., Gupta, B. B., Lorusso, A., Marongiu, F., and Santaniello, D. (2022). A Deep Learning Approach to Protecting Cultural Heritage Buildings Through IoT‑Based Systems. In Proceedings of the IEEE International Conference on Smart Computing (SMARTCOMP) (252-256). Helsinki, Finland. https://doi.org/10.1109/SMARTCOMP55677.2022.00063

Chindengwike, J. D. (2024). Application to Various Types of Computer Crimes and Digital Forensics: A Case of McCartney’s Net Worth and Settle. Journal of Digital Security and Forensics, 1(1), 30–33. https://doi.org/10.29121/digisecforensics.v1.i1.2024.20

De Lucia, C., Amaddii, M., and Arrighi, C. (2024). Tangible and Intangible Ex Post Assessment of Flood‑Induced Damage to Cultural Heritage. Natural Hazards and Earth System Sciences, 24, 4317-4339. https://doi.org/10.5194/nhess-24-4317-2024

Goswami, A., Sharma, D., Mathuku, H., Gangadharan, S. M. P., Yadav, C. S., Sahu, S. K., Pradhan, M. K., Singh, J., and Imran, H. (2022). Change Detection in Remote Sensing Image Data Comparing Algebraic and Machine Learning Methods. Electronics, 11(3), 431. https://doi.org/10.3390/electronics11030431

Jiang, T., Gan, X. E., Liang, Z., and Luo, G. (2022). AIDM: Artificial Intelligence for Digital Museum Autonomous Systems with Mixed Reality and Software‑Driven Data Analysis. Automated Software Engineering, 29, 22-45. https://doi.org/10.1007/s10515-021-00315-9

Kharroubi, A., Poux, F., Ballouch, Z., Hajji, R., and Billen, R. (2022). Three‑Dimensional Change Detection Using Point Clouds: A Review. Geomatics, 2(4), 457-485. https://doi.org/10.3390/geomatics2040025

Kim, H., and Lee, H. (2022). Emotions and Colors in a Design Archiving System: Applying AI Technology for Museums. Applied Sciences, 12(5), 2467. https://doi.org/10.3390/app12052467

Kosmas, P., Galanakis, G., Constantinou, V., Drossis, G., Christofi, M., Klironomos, I., Zaphiris, P., Antona, M., and Stephanidis, C. (2020). Enhancing Accessibility in Cultural Heritage Environments: Considerations for Social Computing. Universal Access in the Information Society, 19, 471-482. https://doi.org/10.1007/s10209-019-00651-4

Li, Y., and Zhang, D. (2025). Toward Efficient Edge Detection Using Integral Image Optimization and Canny Edge Detection. Processes, 13(2), 293. https://doi.org/10.3390/pr13020293

Liu, S., Bovolo, F., Bruzzone, L., Du, Q., and Tong, X. (2021). Unsupervised Change Detection in Multitemporal Remote Sensing Images. In Change Detection and Image Time Series Analysis 1 (1-34). John Wiley & Sons. https://doi.org/10.1002/9781119882268.ch1

Moualla, A., Karaouzene, A., Boucenna, S., Vidal, D., and Gaussier, P. (2017). Readability of the Gaze and Expressions of a Robot Museum Visitor. In Proceedings of the IEEE International Symposium on Robot and Human Interactive Communication (RO‑MAN) (712-719). Lisbon, Portugal. https://doi.org/10.1109/ROMAN.2017.8172381

Noh, H., Ju, J., Seo, M., Park, J., and Choi, D. G. (2022). Unsupervised Change Detection Based on Image Reconstruction Loss. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW) (1351-1360). New Orleans, LA, USA. https://doi.org/10.1109/CVPRW56347.2022.00141

Sun, J., Khalid, K. A. T., and Kay, C. S. (2025). Deep Learning Models for Cultural Pattern Recognition to Preserve Intangible Heritage. Future Technologies, 4, 119-137. https://doi.org/10.55670/fpll.futech.4.3.12

Zielke, T. (2020). Is Artificial Intelligence Ready for Standardization? In Proceedings of the European Conference on Systems, Software and Services Process Improvement (EuroSPI). Düsseldorf, Germany. https://doi.org/10.1007/978-3-030-56441-4_19


This work is licensed under a: Creative Commons Attribution 4.0 International License


© ShodhKosh 2026. All Rights Reserved.